Introduction: Mathematical optimization

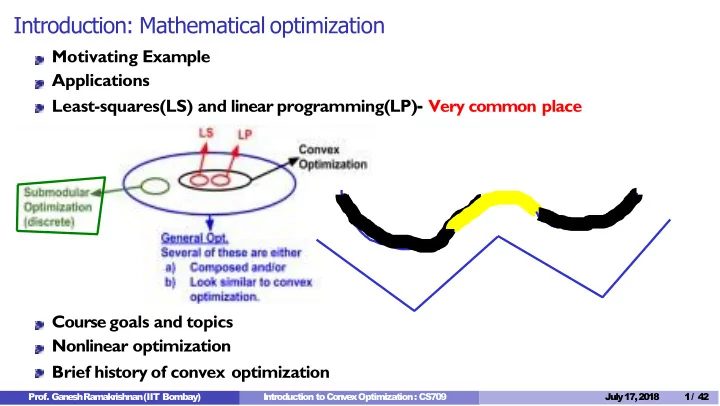

- Motivating Example
- Applications
- Least-squares (LS) and linear programming (LP): very commonplace
- Course goals and topics
- Nonlinear optimization
- Brief history of convex optimization

Prof. Ganesh Ramakrishnan (IIT Bombay), Introduction to Convex Optimization: CS709, July 17, 2018