
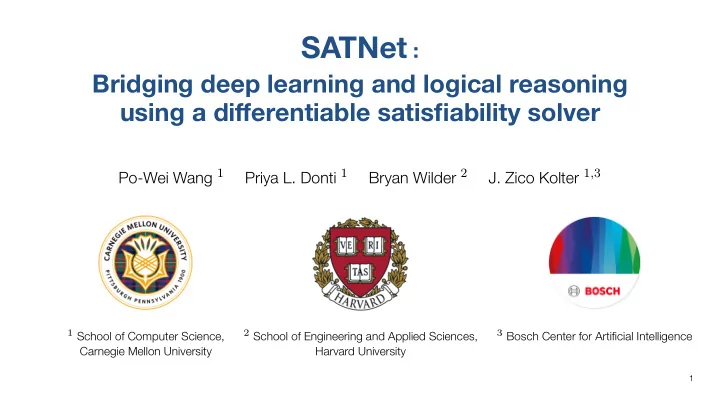


SATNet: Bridging deep learning and logical reasoning using a differentiable satisfiability solver

Po-Wei Wang¹, Priya L. Donti¹, Bryan Wilder², J. Zico Kolter¹˒³

¹ School of Computer Science, Carnegie Mellon University
² School of Engineering and Applied Sciences, Harvard University
³ Bosch Center for Artificial Intelligence

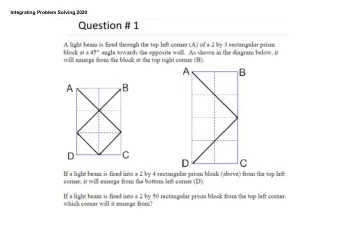

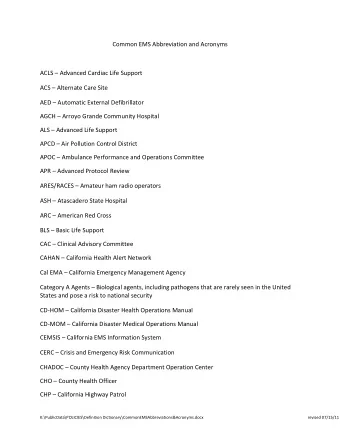

Integrating deep learning and logic

| Deep Learning | Logical Inference |
| --- | --- |
| No constraints on output | Rich constraints on output |
| Differentiable | Discrete input/output |
| Solved via gradient optimizers | Solved via tree search |

Sudoku image: "12 Jan 2006" by SudoFlickr is licensed under CC BY-SA 2.0

This talk is not about...

- Learning to find SAT solutions [Selsam et al. 2019]. It is instead about learning both the constraints and the solution from examples.
- Using DL and SAT in a multi-staged manner.
  - Doing so requires prior knowledge of the structure and constraints.
  - Further, current SAT solvers cannot accept probabilistic inputs.

This talk is about

- A smoothed, differentiable (maximum) satisfiability solver that can be integrated into the loop of deep learning systems.
- A layer that enables end-to-end learning of both the constraints and the solutions of logic problems within deep networks.

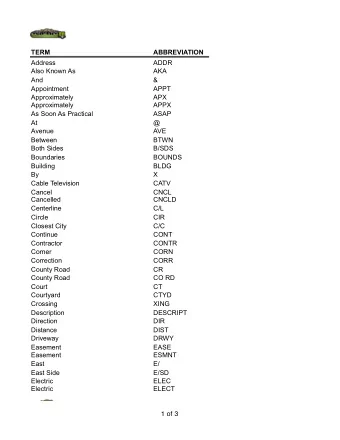

Review of SAT problems

Example SAT problem:

$$v_2 \wedge (v_1 \vee \neg v_2) \wedge (v_2 \vee \neg v_3)$$

This is encoded as a clause matrix with one row per clause and one column per variable:

$$S = \begin{bmatrix} 0 & 1 & 0 \\ 1 & -1 & 0 \\ 0 & 1 & -1 \end{bmatrix} \quad \begin{matrix} v_2 \\ v_1 \vee \neg v_2 \\ v_2 \vee \neg v_3 \end{matrix}$$

- Typical SAT: the clause matrix is given; find a satisfying assignment.
- Our setting: the clause matrix is the parameter of the layer (to be learned).
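The clause-matrix encoding can be illustrated with a small sketch. This is not code from the talk: the helper names `clauses_to_matrix` and `satisfied`, and the DIMACS-style signed-index input format, are assumptions made for illustration.

```python
import numpy as np

def clauses_to_matrix(clauses, n_vars):
    """Encode CNF clauses as an m x n matrix with entries in {-1, 0, +1}.

    Each clause is a list of signed, 1-based variable indices
    (a hypothetical DIMACS-style convention): 2 means v2, -3 means NOT v3.
    """
    S = np.zeros((len(clauses), n_vars), dtype=int)
    for row, clause in enumerate(clauses):
        for lit in clause:
            S[row, abs(lit) - 1] = 1 if lit > 0 else -1
    return S

def satisfied(S, assignment):
    """Return one boolean per clause for an assignment with entries in {+1, -1}.

    A clause holds if at least one of its literals agrees in sign
    with the assigned value of its variable.
    """
    v = np.asarray(assignment)
    return (S * v > 0).any(axis=1)

# The example from the slide: v2 AND (v1 OR NOT v2) AND (v2 OR NOT v3)
clauses = [[2], [1, -2], [2, -3]]
S = clauses_to_matrix(clauses, n_vars=3)
all_true = satisfied(S, [1, 1, 1])      # v1 = v2 = v3 = True
v1_false = satisfied(S, [-1, 1, 1])     # v1 = False breaks the second clause
```

In the typical SAT setting `S` would be fixed and the search is over assignments; in the setting of this talk, `S` itself is what the layer learns.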

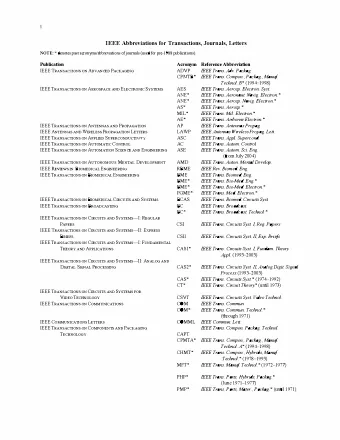

MAXSAT Problem

MAXSAT is the optimization variant of SAT solving.

- SAT: find feasible $v_i$ s.t. $v_2 \wedge (v_1 \vee \neg v_2) \wedge (v_2 \vee \neg v_3)$
- MAXSAT: maximize the number of satisfied clauses

Relax the binary variables to smooth, continuous spheres:

$$v_i \in \{+1, -1\} \;\xrightarrow{\text{equiv}}\; |v_i| = 1,\ v_i \in \mathbb{R}^1 \;\xrightarrow{\text{relax}}\; \|v_i\| = 1,\ v_i \in \mathbb{R}^k$$

Semidefinite relaxation (Goemans-Williamson, 1995), with $X = V^T V$:

$$\text{minimize}\ \langle S^T S, X \rangle, \quad \text{s.t.}\ X \succeq 0,\ \operatorname{diag}(X) = 1.$$
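As a rough numerical check of the relaxation above, the sketch below builds a feasible point of the SDP from unit-norm columns and evaluates the objective in two equivalent ways. The embedding dimension `k = 4` and the random initialization are arbitrary choices for illustration, not values from the talk.

```python
import numpy as np

rng = np.random.default_rng(0)

# Clause matrix of the running example (rows: clauses, columns: variables).
S = np.array([[0, 1, 0],
              [1, -1, 0],
              [0, 1, -1]], dtype=float)
n = S.shape[1]
k = 4  # hypothetical embedding dimension

# Relaxed variables: one unit vector v_i in R^k per Boolean variable.
V = rng.standard_normal((k, n))
V /= np.linalg.norm(V, axis=0, keepdims=True)

# X = V^T V is positive semidefinite with unit diagonal by construction.
X = V.T @ V
diag_ok = np.allclose(np.diag(X), 1.0)
psd_ok = np.linalg.eigvalsh(X).min() >= -1e-9

# <S^T S, X> equals the squared Frobenius norm of V S^T.
objective = np.sum((S.T @ S) * X)
objective_lowrank = np.linalg.norm(V @ S.T, "fro") ** 2

# A binary assignment is the special case k = 1 and is also feasible.
v_binary = np.array([[1.0, 1.0, -1.0]])
binary_diag_ok = np.allclose(np.diag(v_binary.T @ v_binary), 1.0)
```

The identity $\langle S^T S, V^T V \rangle = \|V S^T\|_F^2$ is what makes it possible to work with $V$ directly rather than with the full matrix $X$.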

SATNet: MAXSAT SDP as a layer

Fast solution to MAXSAT SDP approximation

Efficiently solve via the low-rank factorization $X = V^T V$, $V \in \mathbb{R}^{k \times n}$, $\|v_i\| = 1$ (a.k.a. the Burer-Monteiro method), and block coordinate descent iterations

$$v_i = -\operatorname{normalize}(V S^T s_i - \|s_i\|^2 v_i).$$

- For $k > \sqrt{2n}$, the non-convex iterates are guaranteed to converge to global optima of the SDP [Wang et al., 2018; Erdogdu et al., 2018].
- Complexity is reduced from $O(n^6 \log\log\frac{1}{\epsilon})$ for interior point methods to $O(n^{1.5} m \log\frac{1}{\epsilon})$ for our method, where $m$ is the number of clauses.
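The coordinate descent update above can be sketched in a few lines of NumPy. This is a simplified illustration rather than the authors' implementation: the function name `mixing_bcd`, the sweep count, and the random initialization are assumptions, and every column of $V$ is updated (any variables that a full layer might hold fixed, such as inputs, are not modelled here).

```python
import numpy as np

def mixing_bcd(S, k, n_sweeps=50, seed=0):
    """Minimize ||V S^T||_F^2 over unit-norm columns of V by block coordinate descent."""
    rng = np.random.default_rng(seed)
    m, n = S.shape
    V = rng.standard_normal((k, n))
    V /= np.linalg.norm(V, axis=0, keepdims=True)

    omega = V @ S.T                    # cached k x m product V S^T
    sq_norms = (S ** 2).sum(axis=0)    # ||s_i||^2 for each column s_i of S
    history = [np.sum(omega ** 2)]     # objective value after each sweep

    for _ in range(n_sweeps):
        for i in range(n):
            s_i = S[:, i]
            # g_i = V S^T s_i - ||s_i||^2 v_i
            g = omega @ s_i - sq_norms[i] * V[:, i]
            g_norm = np.linalg.norm(g)
            if g_norm < 1e-12:
                continue               # v_i does not affect the objective; leave it
            v_new = -g / g_norm        # v_i = -normalize(g_i)
            # Rank-one update keeps the cache consistent with the new column.
            omega += np.outer(v_new - V[:, i], s_i)
            V[:, i] = v_new
        history.append(np.sum(omega ** 2))
    return V, history

S = np.array([[0, 1, 0],
              [1, -1, 0],
              [0, 1, -1]], dtype=float)
V, history = mixing_bcd(S, k=3)        # k = 3 exceeds sqrt(2n) for n = 3
```

Each inner step minimizes the objective exactly with respect to one column while the others are held fixed, so `history` should be non-increasing. Caching `omega` means one column update costs work proportional to $k m$ rather than recomputing $V S^T$ from scratch.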

Differentiate through the optimization problem

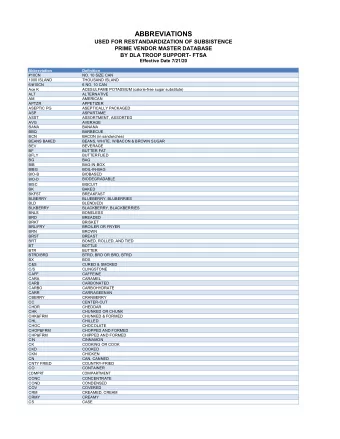

When converged, the procedure satisfies the fixed-point equation

$$v_i = -\operatorname{normalize}(V S^T s_i - \|s_i\|^2 v_i), \quad \forall i.$$

The fixed-point equation of the block coordinate descent provides an implicit function definition of the solution [Amos et al. 2017]:

$$F_i(S, V(S)) = v_i + \operatorname{normalize}(V S^T s_i - \|s_i\|^2 v_i) = 0, \quad \forall i.$$

Thus, we can apply the implicit function theorem to the total derivatives:

$$\frac{\partial \vec{F}(\vec{S}, \vec{V}(S))}{\partial \vec{S}} = 0 \;\Longrightarrow\; \frac{\partial \vec{F}(\vec{S}, \vec{V})}{\partial \vec{S}} + \frac{\partial \vec{F}(\vec{S}, \vec{V})}{\partial \vec{V}} \cdot \frac{\partial \vec{V}}{\partial \vec{S}} = 0.$$

Solve the above linear system for $\partial \vec{V} / \partial \vec{S}$ to backprop.
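The same implicit-differentiation pattern can be seen on a much smaller problem. The sketch below is a toy stand-in, not the SATNet system: the fixed-point map $x = 0.5 \tanh(Ax + \theta)$, the matrix `A`, and all function names are assumptions chosen so that the iteration is a contraction and the Jacobians are easy to write down.

```python
import numpy as np

# Hypothetical small matrix; 0.5 * ||A|| < 1, so the map below is a contraction.
A = np.array([[0.2, -0.1],
              [0.3, 0.1]])

def solve_fixed_point(theta, n_iter=200):
    """Iterate x <- 0.5 * tanh(A x + theta) until (numerical) convergence."""
    x = np.zeros_like(theta)
    for _ in range(n_iter):
        x = 0.5 * np.tanh(A @ x + theta)
    return x

def implicit_jacobian(theta):
    """dx/dtheta from F(theta, x) = x - 0.5 * tanh(A x + theta) = 0."""
    x = solve_fixed_point(theta)
    t = np.tanh(A @ x + theta)
    D = np.diag(0.5 * (1.0 - t ** 2))   # derivative of 0.5 * tanh at the solution
    dF_dx = np.eye(len(x)) - D @ A      # partial of F with respect to x
    dF_dtheta = -D                      # partial of F with respect to theta
    # Implicit function theorem: dF_dtheta + dF_dx @ dx_dtheta = 0.
    return np.linalg.solve(dF_dx, -dF_dtheta)

def finite_difference_jacobian(theta, eps=1e-6):
    """Central differences through the solver, used only as a reference."""
    cols = []
    for j in range(len(theta)):
        e = np.zeros_like(theta)
        e[j] = eps
        cols.append((solve_fixed_point(theta + e) - solve_fixed_point(theta - e)) / (2 * eps))
    return np.stack(cols, axis=1)

theta = np.array([0.4, -0.7])
J_ift = implicit_jacobian(theta)
J_fd = finite_difference_jacobian(theta)
```

The point of the pattern is that `implicit_jacobian` needs only the converged solution and one linear solve; it never differentiates through the individual iterations of the solver.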

SATNet: MAXSAT SDP as a layer

Other ingredients in SATNet

Low-rank regularization on S
- Doubly-exponentially many possible Boolean functions!
- Low-rank: regularize the complexity through the number of clauses

Auxiliary variable (hidden nodes)
- Only SDP with diagonal constraints, limiting representation
- Adding auxiliary variables (gadgets) increases representation power
