SLIDE 1

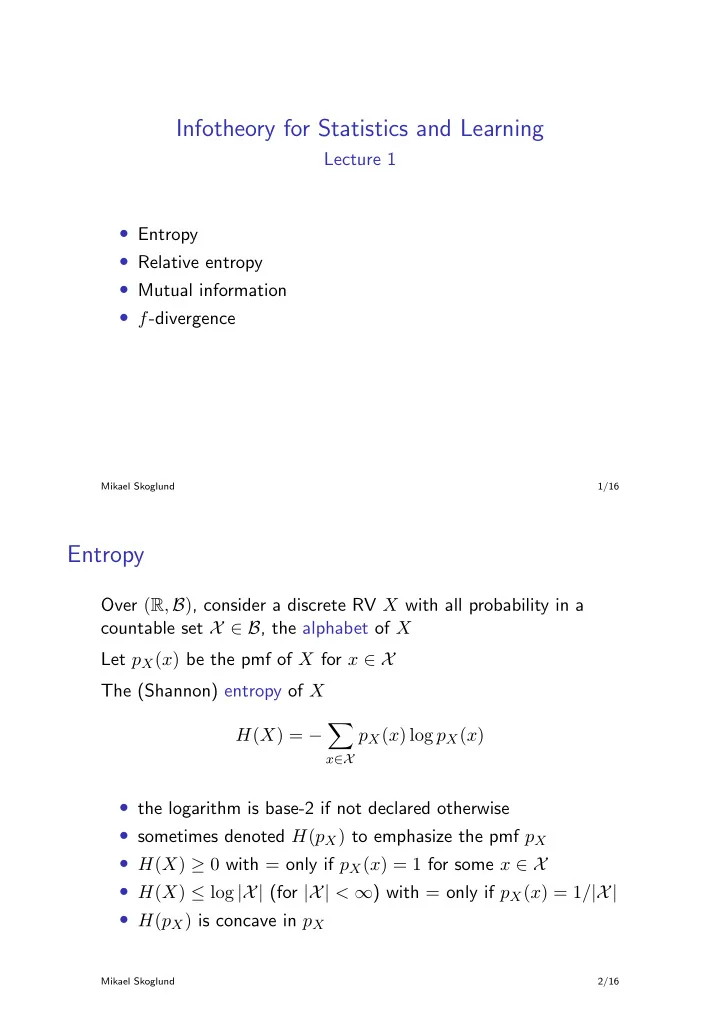

Infotheory for Statistics and Learning

Lecture 1

- Entropy

- Relative entropy

- Mutual information

- f-divergence

Mikael Skoglund 1/16

Entropy

Over $(\mathbb{R}, \mathcal{B})$, consider a discrete RV $X$ with all probability in a countable set $\mathcal{X} \in \mathcal{B}$, the alphabet of $X$

Let $p_X(x)$ be the pmf of $X$ for $x \in \mathcal{X}$

The (Shannon) entropy of $X$

$$H(X) = -\sum_{x \in \mathcal{X}} p_X(x) \log p_X(x)$$

- the logarithm is base-2 if not declared otherwise

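- sometimes denoted $H(p_X)$ to emphasize the pmf $p_X$

- $H(X) \ge 0$, with equality only if $p_X(x) = 1$ for some $x \in \mathcal{X}$

- $H(X) \le \log |\mathcal{X}|$ (for $|\mathcal{X}| < \infty$), with equality only if $p_X(x) = 1/|\mathcal{X}|$ for all $x \in \mathcal{X}$

- $H(p_X)$ is concave in $p_X$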
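
The entropy definition and the properties listed above can be checked numerically on a small alphabet. The following is a minimal sketch, not part of the lecture: the function name `entropy` and the three example pmfs are illustrative assumptions, and terms with zero probability are skipped under the usual convention $0 \log 0 = 0$ (an assumption the slide does not state).

```python
import math


def entropy(pmf):
    """Shannon entropy in bits of a pmf given as a list of probabilities.

    Hypothetical helper for illustration; zero-probability terms are
    skipped, which assumes the convention 0 log 0 = 0.
    """
    return sum(-p * math.log2(p) for p in pmf if p > 0)


# Three illustrative pmfs on an alphabet of size 4 (assumed examples).
uniform = [0.25, 0.25, 0.25, 0.25]
skewed = [0.7, 0.1, 0.1, 0.1]
point_mass = [1.0, 0.0, 0.0, 0.0]

h_uniform = entropy(uniform)   # upper bound log2(4) is attained here
h_skewed = entropy(skewed)     # strictly between 0 and log2(4)
h_point = entropy(point_mass)  # lower bound 0 is attained here

# Concavity check for one mixing weight:
# H(lam*p + (1-lam)*q) >= lam*H(p) + (1-lam)*H(q)
lam = 0.5
mixture = [lam * p + (1 - lam) * q for p, q in zip(skewed, point_mass)]
concavity_gap = entropy(mixture) - (lam * h_skewed + (1 - lam) * h_point)

print(h_uniform, h_skewed, h_point, concavity_gap)
```

The uniform pmf attains the upper bound $\log 4 = 2$ bits, the point mass attains the lower bound of zero, and the concavity gap is non-negative for this pair of pmfs. A single mixing weight only illustrates concavity; it does not prove it.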
