P. Marquez-Neila, M. Salzmann, and P. Fua

EPFL Switzerland

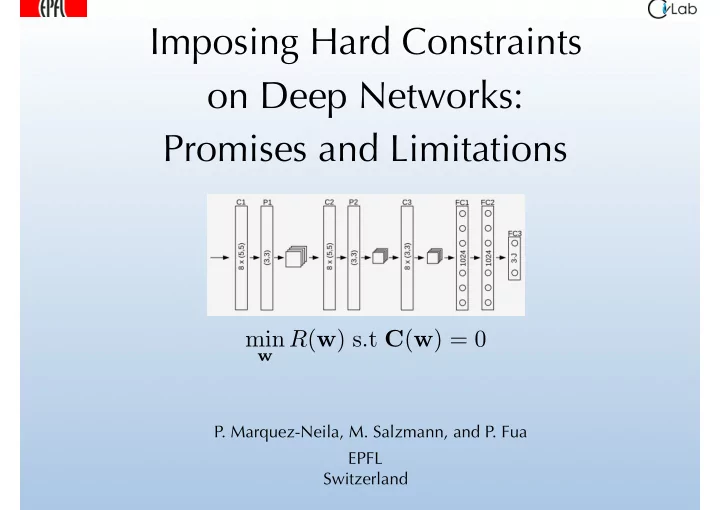

Imposing Hard Constraints on Deep Networks: Promises and Limitations

$$\min_w \; R(w) \quad \text{s.t.} \quad C(w) = 0$$

Motivation: 3D Pose Estimation

Given a CNN trained to predict the 3D locations of the person’s joints:

limbs are of the same length?

Constraining a Gaussian Latent Variable Model to preserve limb lengths:

Varol et al., CVPR’12

Regression from PHOG features.

[Figure: two panels, "Reconstruction Error" and "Constraint Violation", each plotted against N for V = 75 and comparing Yao11 with Ours.]

Given $N$ pairs of vectors, $x_i$ and $y_i$, find $w^* = \arg\min_w R_S(w)$, with

$$R_S(w) = \frac{1}{N} \sum_i L(\phi(x_i, w), y_i).$$


Hard Constraints:

Given a set of unlabeled points $U = \{x'_k\}_{k=1}^{|U|}$, find

$$\min_w \; R_S(w) \quad \text{s.t.} \quad C_{jk}(w) = 0 \quad \forall j \le N_C, \; \forall k \le |U|,$$

where $C_{jk}(w) = C_j(\phi(x'_k; w))$.

Soft Constraints:

Solve

$$\min_w \; R_S(w) + \sum_j \lambda_j \left( \sum_k C_{jk}(w)^2 \right),$$

where the $\lambda_j$ parameters are positive scalars that control the relative contribution of each constraint.

In many “classical” optimization problems, hard constraints are preferred because they remove the need to adjust the $\lambda_j$ values.
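The soft-constraint objective can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `soft_constrained_loss` and its argument layout (an array of shape $(N_C, |U|)$ holding the values $C_{jk}(w)$) are hypothetical and are not taken from the talk.

```python
import numpy as np


def soft_constrained_loss(risk, constraint_values, lambdas):
    """Return R_S(w) + sum_j lambda_j * sum_k C_jk(w)^2.

    risk              -- scalar value of the supervised risk R_S(w)
    constraint_values -- array of shape (N_C, |U|) holding C_jk(w)
    lambdas           -- array of shape (N_C,) with positive weights lambda_j
    (Hypothetical interface, chosen for illustration only.)
    """
    C = np.asarray(constraint_values, dtype=float)
    lam = np.asarray(lambdas, dtype=float)
    if C.ndim != 2 or lam.shape != (C.shape[0],):
        raise ValueError("expected C of shape (N_C, |U|) and lambdas of shape (N_C,)")
    if np.any(lam <= 0):
        raise ValueError("each lambda_j must be positive")
    # Inner sum over unlabeled points k, outer weighted sum over constraints j.
    penalty = np.sum(lam * np.sum(C ** 2, axis=1))
    return float(risk + penalty)
```

Each $\lambda_j$ has to be chosen by hand here, which is exactly the tuning burden that hard constraints are meant to remove.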


Karush-Kuhn-Tucker (KKT) conditions:

$\min_w \max_\Lambda$ …
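For reference, the textbook Lagrangian form of the problem $\min_w R(w)$ s.t. $C(w) = 0$ is written out below. This is the standard formulation, assumed here for illustration; the exact expression used in the talk may differ in notation.

$$\mathcal{L}(w, \Lambda) = R(w) + \Lambda^{T} C(w), \qquad \min_w \max_\Lambda \; \mathcal{L}(w, \Lambda)$$

Setting the derivatives with respect to $w$ and $\Lambda$ to zero gives the stationarity and feasibility conditions:

$$\frac{\partial R}{\partial w} + \left(\frac{\partial C}{\partial w}\right)^{T} \Lambda = 0, \qquad C(w) = 0.$$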

Iterative minimization scheme:

→ When there are millions of unknowns, these linear systems are huge!

$\left(\frac{\partial C}{\partial w}\right)^{T} \frac{\partial C}{\partial w}$

Solve for $v$ when the dimension of $v$ is so large that $B$ cannot be stored in memory.


subspace spanned by

… $\partial w$ and $v^{T} \frac{\partial f}{\partial w}$
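A linear system can be solved without ever storing its matrix, as long as matrix-vector products are available. The sketch below uses conjugate gradient, a standard Krylov subspace method. Several things here are assumptions made for illustration rather than details from the talk: that $B$ is symmetric positive definite, that it has the form $J^{T} J + \epsilon I$ with $J$ standing in for $\partial C / \partial w$, and the helper names `conjugate_gradient` and `make_gram_matvec`.

```python
import numpy as np


def conjugate_gradient(matvec, b, tol=1e-10, max_iter=1000):
    """Solve B v = b for symmetric positive definite B using only products B @ p.

    matvec -- callable returning B @ p for a vector p (B itself is never formed)
    """
    b = np.asarray(b, dtype=float)
    v = np.zeros_like(b)
    r = b.copy()              # residual b - B v, with v = 0 initially
    p = r.copy()              # current search direction
    rs_old = r @ r
    threshold = tol * max(np.linalg.norm(b), 1.0)
    for _ in range(max_iter):
        if np.sqrt(rs_old) <= threshold:
            break
        Bp = matvec(p)
        alpha = rs_old / (p @ Bp)
        v = v + alpha * p
        r = r - alpha * Bp
        rs_new = r @ r
        p = r + (rs_new / rs_old) * p
        rs_old = rs_new
    return v


def make_gram_matvec(J, damping):
    """Return p -> (J^T J + damping * I) p, computed as two thin products.

    Assumed form of B, for illustration only.
    """
    def matvec(p):
        return J.T @ (J @ p) + damping * p
    return matvec
```

In a deep network the products `J @ p` and `J.T @ u` would come from automatic differentiation (Jacobian-vector and vector-Jacobian products) rather than from an explicit `J`; the explicit array here only keeps the sketch self-contained.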


[Figure: plot with axes "Prediction error (mm)" and "Median constraint violation (mm)", comparing Soft-SGD, Soft-Adam, Hard-SGD, and Hard-Adam runs under several hyperparameter settings, with regions labeled No Constraints, Hard Constraints, and Soft Constraints.]

Hard constraints help … but soft constraints help even more!

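Let $x_0$ and $c_i$ for $1 \le i \le 200$ be vectors of dimension $d$ and let $w^*$ be the solution of either

$$\min_w \; \tfrac{1}{2}\|w - x_0\|^2 \quad \text{s.t.} \quad \|w - c_i\| - 10 = 0, \; 1 \le i \le 200 \qquad \text{(Hard Constraints)}$$

or

$$\min_w \; \tfrac{1}{2}\|w - x_0\|^2 + \lambda \sum_{1 \le i \le 200} \left(\|w - c_i\| - 10\right)^2 \qquad \text{(Soft Constraints)}$$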
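The soft version of this toy problem is easy to write down in NumPy. The sketch below gives the objective, its gradient, and the per-constraint violation $\big|\|w - c_i\| - 10\big|$. The function names and the `radius` parameter (defaulting to the constant 10 from the problem statement) are hypothetical, introduced only for illustration.

```python
import numpy as np


def soft_objective(w, x0, centers, lam, radius=10.0):
    """0.5 * ||w - x0||^2 + lam * sum_i (||w - c_i|| - radius)^2."""
    dist = np.linalg.norm(w - centers, axis=1)
    return 0.5 * np.sum((w - x0) ** 2) + lam * np.sum((dist - radius) ** 2)


def soft_gradient(w, x0, centers, lam, radius=10.0):
    """Gradient of soft_objective with respect to w."""
    diff = w - centers                       # shape (n_constraints, d)
    dist = np.linalg.norm(diff, axis=1)
    # d/dw (||w - c|| - r)^2 = 2 (||w - c|| - r) (w - c) / ||w - c||
    coeff = 2.0 * (dist - radius) / np.maximum(dist, 1e-12)
    return (w - x0) + lam * (coeff[:, None] * diff).sum(axis=0)


def constraint_violations(w, centers, radius=10.0):
    """Absolute violation | ||w - c_i|| - radius | of each constraint."""
    return np.abs(np.linalg.norm(w - centers, axis=1) - radius)
```

Feeding `soft_gradient` to any gradient-based optimizer and then inspecting `constraint_violations` shows how far the soft solution remains from satisfying each constraint for a given `lam`.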


$\left(\frac{\partial C}{\partial w}\right)^{T} \frac{\partial C}{\partial w}$

… be adjusted.

… large if they are all active.

Hard constraints can be imposed on the output of Deep Nets, but they are no more effective than soft ones:

… results in ill-conditioned matrices.

… means we do not keep a consistent set of them.

→ We might still present this work at a positive result workshop.


Typical approach:


“We choose to go to the Moon in this decade and do the other things, not because they are easy, but because they are hard.” J.F. Kennedy, 1962

In the context of Deep Learning: