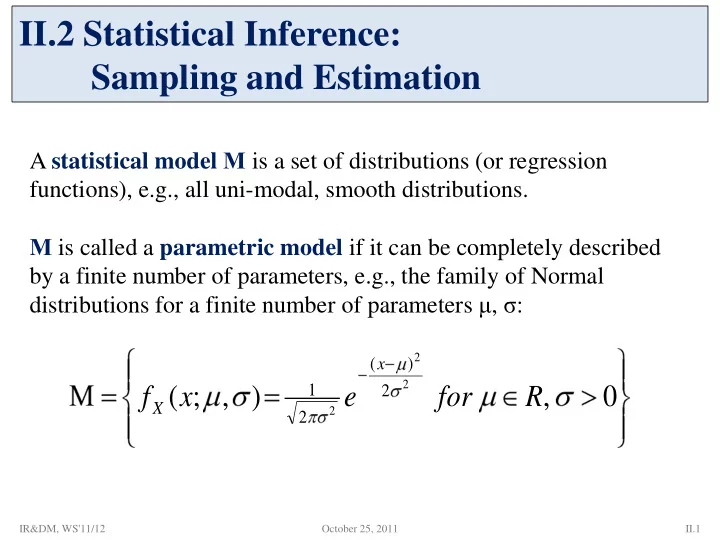

A statistical model M is a set of distributions (or regression functions), e.g., all unimodal, smooth distributions. M is called a parametric model if it can be completely described by a finite number of parameters, e.g., the family of Normal distributions, which is described by the two parameters μ and σ:

II.2 Statistical Inference: Sampling and Estimation

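$$
f_X(x;\, \mu, \sigma) = \frac{1}{\sqrt{2\pi\sigma^2}}\; e^{-\frac{(x-\mu)^2}{2\sigma^2}} \quad \text{for } \mu \in \mathbb{R},\ \sigma > 0
$$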
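To make the idea of a parametric model concrete, the following minimal Python sketch evaluates the Normal density for a given pair (μ, σ). The function names `normal_pdf` and `riemann_integral` are hypothetical and chosen only for illustration; the numerical integration is a crude check that each parameter choice yields a proper density, not part of the original material.

```python
import math


def normal_pdf(x, mu, sigma):
    """Density of the Normal distribution with mean mu and std. dev. sigma at x."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi * sigma * sigma)


def riemann_integral(f, lo, hi, steps=100_000):
    """Midpoint Riemann sum of f over [lo, hi] (illustrative helper)."""
    width = (hi - lo) / steps
    return sum(f(lo + (i + 0.5) * width) for i in range(steps)) * width


if __name__ == "__main__":
    # Each pair (mu, sigma) selects one member of the Normal family.
    for mu, sigma in [(0.0, 1.0), (2.0, 0.5), (-1.0, 3.0)]:
        area = riemann_integral(
            lambda x: normal_pdf(x, mu, sigma), mu - 10 * sigma, mu + 10 * sigma
        )
        print(f"mu={mu}, sigma={sigma}: area under density ~ {area:.6f}")
```

Fixing the two numbers μ and σ pins down one distribution in the family completely, which is what distinguishes a parametric model from, say, the set of all unimodal, smooth distributions.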

October 25, 2011 II.1 IR&DM, WS'11/12