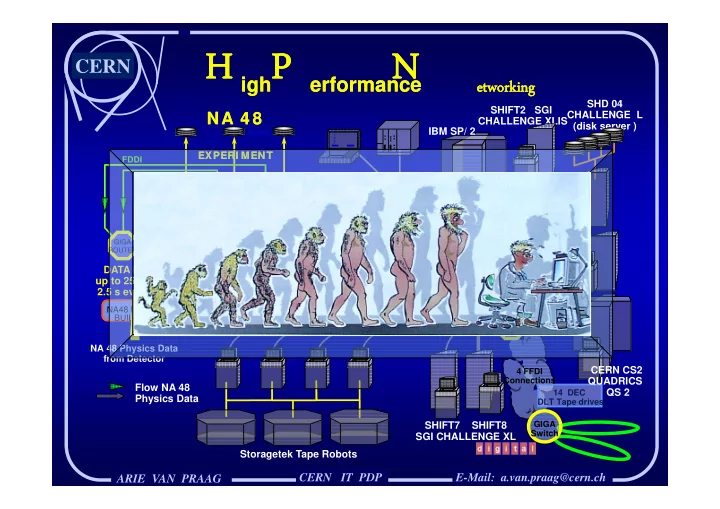

High Performance Networking

CERN IT PDP

Arie van Praag

E-Mail: a.van.praag@cern.ch

[Figure: flow of NA48 physics data from the detector through the CERN network. Components shown: NA48 experiment (data rate: up to 250 MB in 2.5 s every 15 s), NA48 event builder, HIPPI switches, GIGA router, GIGA switch (4 FDDI connections), HIPPI-TC and TURBOchannel HIPPI interfaces, disks, SHIFT2, SHIFT7, SHIFT8, SGI CHALLENGE XL, SHD 04 CHALLENGE L (disk server), IBM SP/2, CERN CS2, QUADRICS QS 2, ALPHA 50, ALPHA 400, 14 DEC DLT tape drives, Storagetek tape robots. Links: long-wavelength Serial-HIPPI (10 km and 500 m), short-wavelength Serial-HIPPI, FDDI.]
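The NA48 data rate in the diagram implies two figures worth separating: the rate needed during a burst and the rate averaged over a full cycle. The sketch below works through that arithmetic. It assumes "MB" means 10^6 bytes and compares the peak against the nominal 800 Mbit/s (100 MB/s) signalling rate of a single HIPPI-800 link. That comparison is an illustration added here, not a statement from the slide, and real payload throughput is lower than the nominal rate because of framing overhead.

```python
# Worked arithmetic for the NA48 data rate quoted in the figure:
# bursts of up to 250 MB delivered in 2.5 s, repeating every 15 s.
# Assumption: MB = 10**6 bytes.
BURST_MB = 250.0   # burst size (MB), from the figure
BURST_S = 2.5      # burst duration (s), from the figure
CYCLE_S = 15.0     # burst period (s), from the figure

# Assumption for comparison: one HIPPI-800 link, nominal 800 Mbit/s = 100 MB/s.
HIPPI_MB_PER_S = 800 / 8


def peak_rate(burst_mb: float, burst_s: float) -> float:
    """Rate required while a burst is being delivered, in MB/s."""
    return burst_mb / burst_s


def average_rate(burst_mb: float, cycle_s: float) -> float:
    """Rate averaged over one full burst cycle, in MB/s."""
    return burst_mb / cycle_s


peak = peak_rate(BURST_MB, BURST_S)
avg = average_rate(BURST_MB, CYCLE_S)
print(f"peak {peak:.1f} MB/s, average {avg:.1f} MB/s")
print(f"peak uses {peak / HIPPI_MB_PER_S:.0%} of one nominal HIPPI-800 link")
```

The peak works out to 100 MB/s, which already matches the nominal capacity of one HIPPI-800 link, while the cycle average is only about 16.7 MB/s. Buffering during the gaps between bursts is what lets slower downstream storage keep up with the average rather than the peak.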