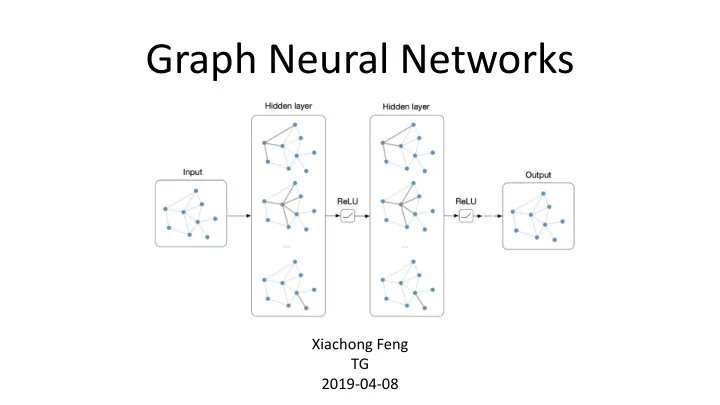

Graph Neural Networks

Xiachong Feng TG 2019-04-08

Relies heavily on:

- A Gentle Introduction to Graph Neural Networks (Basics, DeepWalk, and GraphSage)
- Structured deep models: Deep learning on graphs and beyond
- Representation Learning on Networks

1. Basic && Overview
2. Graph Neural Networks
   1. Original Graph Neural Networks (GNNs)
   2. Graph Convolutional Networks (GCNs) && GraphSAGE
   3. Gated Graph Neural Networks (GGNNs)
   4. Graph Neural Networks With Attention (GAT)
   5. Sub-Graph Embeddings
3. Message Passing Neural Networks (MPNN)
4. GNN In NLP (AMR, SQL, Summarization)
5. Tools
6. Conclusion

$G = (V, E)$

Edges can be directed or undirected, depending on whether there are directional dependencies between vertices.

A Gentle Introduction to Graph Neural Networks (Basics, DeepWalk, and GraphSage)

Adjacency matrix
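As a small illustration of the adjacency-matrix representation, the sketch below builds a dense 0/1 matrix from an edge list. The helper name `adjacency_matrix` and the four-node example graph are assumptions made for this example, not part of the original slides.

```python
def adjacency_matrix(num_nodes, edges, directed=False):
    """Build a dense 0/1 adjacency matrix from (source, target) pairs."""
    A = [[0] * num_nodes for _ in range(num_nodes)]
    for u, v in edges:
        A[u][v] = 1
        if not directed:
            A[v][u] = 1  # an undirected edge is stored symmetrically
    return A


# Hypothetical 4-node graph with edges 0-1, 0-2, 2-3
edges = [(0, 1), (0, 2), (2, 3)]
A = adjacency_matrix(4, edges)
degrees = [sum(row) for row in A]  # row sums give node degrees
A_directed = adjacency_matrix(4, edges, directed=True)
```

For an undirected graph the matrix is symmetric; with `directed=True` only the entry for the source-to-target direction is set.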

Structured deep models: Deep learning on graphs and beyond

Representation Learning on Networks

Graph neural networks: Variations and applications

Goal: encode nodes so that similarity in the embedding space (e.g., dot product) approximates similarity in the original network.
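A minimal sketch of the dot-product idea, assuming made-up two-dimensional embeddings in which nodes "a" and "b" are close in the graph and "c" is not. The vectors are hypothetical and only show how similarity would be read off the embedding space.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


# Hypothetical embeddings: "a" and "b" are assumed neighbors, "c" is far away.
Z = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}

sim_ab = dot(Z["a"], Z["b"])  # large: the two nodes should be similar
sim_ac = dot(Z["a"], Z["c"])  # small: the two nodes should be dissimilar
```

An encoder is doing its job when pairs that are similar in the network end up with the larger score.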

http://snap.stanford.edu/proj/embeddings-www/

embeddings of nodes in a graph by looking at its nearby nodes.

Standard neural networks such as CNNs and RNNs cannot handle graph input properly because they stack node features in a specific order. To solve this problem, GNNs propagate on each node separately, ignoring the input order of the nodes.

Generally, GNNs update the hidden state of nodes by a weighted sum of the states of their neighborhood.

GNNs explore generating graphs from non-structural data such as scene pictures and story documents, which can make them a powerful neural model for further high-level AI.

Graph Neural Networks: A Review of Methods and Applications

2.1 Original Graph Neural Networks (GNNs)

- features of v
- features of edges
- neighborhood states
- neighborhood features
- local transition function
- local output function
- Banach's fixed point theorem

f and g can be interpreted as feedforward neural networks.
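The role of the fixed point theorem can be sketched with a toy local transition function. The code below assumes scalar node states and a hand-written linear f (a weighted neighbor mean plus the node's own feature) in place of a trained feedforward network. With weight 0.5 the map is a contraction, so repeated application converges to the same state from any starting point.

```python
def transition(h, x, neighbors, w=0.5):
    """Apply a toy local transition function f to every node at once.

    New state = w * mean(neighbor states) + own feature.
    With |w| < 1 this map is a contraction in the max norm.
    """
    new_h = []
    for v, nbrs in enumerate(neighbors):
        mean_nbr = sum(h[u] for u in nbrs) / len(nbrs)
        new_h.append(w * mean_nbr + x[v])
    return new_h


def fixed_point(x, neighbors, h0, tol=1e-10, max_iters=1000):
    """Iterate the transition until the states stop changing."""
    h = list(h0)
    for step in range(max_iters):
        new_h = transition(h, x, neighbors)
        if max(abs(a - b) for a, b in zip(new_h, h)) < tol:
            return new_h, step + 1
        h = new_h
    return h, max_iters


# Hypothetical path graph 1 - 0 - 2 with one scalar feature per node
neighbors = [[1, 2], [0], [0]]
x = [1.0, 0.0, 2.0]
h_a, steps_a = fixed_point(x, neighbors, [0.0, 0.0, 0.0])
h_b, steps_b = fixed_point(x, neighbors, [10.0, -5.0, 3.0])
```

Both runs end at the same states (2, 1, 3 for this graph), which is the uniqueness that a contraction guarantees. A trained model would instead have to constrain its network f to remain a contraction.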

Need to define a loss function on the embeddings, L(z)!
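One possible choice of loss, sketched under assumptions: a binary node-classification task where each embedding z_v is scored by a hypothetical weight vector `theta` and compared with a 0/1 label through cross-entropy. Other losses (for example unsupervised, structure-based ones) fit the same slot.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def node_classification_loss(Z, y, theta):
    """Mean binary cross-entropy of sigmoid(z_v . theta) against labels y_v."""
    total = 0.0
    for z, label in zip(Z, y):
        p = sigmoid(sum(a * b for a, b in zip(z, theta)))
        total += -(label * math.log(p) + (1 - label) * math.log(1 - p))
    return total / len(Z)


# Hypothetical embeddings for one positive and one negative node
Z = [[2.0, 0.0], [-2.0, 0.0]]
y = [1, 0]
good = node_classification_loss(Z, y, theta=[1.0, 0.0])   # separates the classes
bad = node_classification_loss(Z, y, theta=[-1.0, 0.0])   # points the wrong way
```

The loss is lower when `theta` separates the two embeddings correctly, which is the signal gradient descent would follow.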

Gradient-descent strategy

They approach the fixed point solution of H(T) ≈ H.

Representation Learning on Networks, snap.stanford.edu/proj/embeddings-www, WWW 2018

Train on one graph, generalize to a new graph.

Limitations

If the assumption of a fixed point is relaxed, a multi-layer perceptron can be used to learn a more stable representation, removing the iterative update process. This is because, in the original proposal, different iterations use the same parameters of the transition function f, while the different parameters in different layers of an MLP allow for hierarchical feature extraction.

It cannot process edge information (e.g., different edges in a knowledge graph may indicate different relationships between nodes).

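A fixed point can discourage diversification of the node distribution, and thus may not be suitable for learning to represent nodes.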

2.2 Graph Convolutional Networks (GCNs) && GraphSAGE

Convolutional networks on graphs for learning molecular fingerprints NIPS 2015

Inductive Representation Learning on Large Graphs NIPS17

Mean aggregator. LSTM aggregator. Pooling aggregator.

Algorithm annotations: initialization; K iterations; for every node; K-th aggregation function.
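The annotated steps (initialization, K iterations, a loop over every node, the k-th aggregation function) can be sketched as a forward pass with a mean aggregator. This is a simplified illustration, not the paper's implementation. It assumes full neighborhoods instead of sampled ones, every node has at least one neighbor, and the weight matrices are hand-picked rather than learned.

```python
import math


def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def mean_aggregate(vectors):
    """Element-wise mean of a non-empty list of vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def graphsage_forward(features, neighbors, weights):
    """GraphSAGE-style forward pass with a mean aggregator.

    features:  input feature vector x_v for every node
    neighbors: neighbors[v] lists the neighbor indices of node v
    weights:   one matrix per iteration, applied to concat(h_v, h_N(v))
    """
    h = [list(x) for x in features]                 # init: h_v = x_v
    for W in weights:                               # K iterations
        new_h = []
        for v, nbrs in enumerate(neighbors):        # for every node
            h_nbr = mean_aggregate([h[u] for u in nbrs])  # aggregate neighbors
            z = matvec(W, h[v] + h_nbr)             # W applied to the concatenation
            z = [max(0.0, a) for a in z]            # ReLU nonlinearity
            norm = math.sqrt(sum(a * a for a in z)) or 1.0
            new_h.append([a / norm for a in z])     # L2-normalize the new state
        h = new_h
    return h


# Hypothetical star graph: node 0 is connected to nodes 1 and 2
features = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
neighbors = [[1, 2], [0], [0]]
W = [[1.0, 0.0, 0.0, 1.0],
     [0.0, 1.0, 1.0, 0.0]]
out = graphsage_forward(features, neighbors, [W])
```

Swapping `mean_aggregate` for another permutation-invariant function is how the pooling variant would plug in; an LSTM aggregator would additionally need an ordering of the neighbors.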

2.3 Gated Graph Neural Networks (GGNNs)

[Figure: input graph with target node A and neighbors B, C, D, E, F, and the computation graph unrolled from A]

10+ layers!?

are tied and gating mechanisms are added.
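One way to read tied weights plus gating is a GRU-style node update whose parameters are reused at every propagation step. The sketch below assumes scalar node states, sum aggregation over neighbors, and hypothetical parameter names (`Wz`, `Uz`, `Wr`, `Ur`, `W`, `U`). It is an illustration of the idea rather than the exact parameterization of any cited paper.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def ggnn_step(h, neighbors, p):
    """One gated propagation step on scalar node states."""
    new_h = []
    for v, nbrs in enumerate(neighbors):
        a = sum(h[u] for u in nbrs)                          # aggregated message
        z = sigmoid(p["Wz"] * a + p["Uz"] * h[v])            # update gate
        r = sigmoid(p["Wr"] * a + p["Ur"] * h[v])            # reset gate
        cand = math.tanh(p["W"] * a + p["U"] * (r * h[v]))   # candidate state
        new_h.append((1.0 - z) * h[v] + z * cand)            # gated interpolation
    return new_h


def ggnn(h0, neighbors, p, steps):
    """Run several steps; the same parameters p are used at every step (tied)."""
    h = list(h0)
    for _ in range(steps):
        h = ggnn_step(h, neighbors, p)
    return h


# Hypothetical parameters and a 3-node path graph 0 - 1 - 2
params = {"Wz": 0.3, "Uz": -0.2, "Wr": 0.1, "Ur": 0.4, "W": 0.5, "U": 0.7}
states = ggnn([1.0, 0.0, -0.5], [[1], [0, 2], [1]], params, steps=10)
```

Because each new state is an interpolation between the old state and a tanh output, states that start in [-1, 1] stay there however many steps are run, which is part of what makes deep propagation workable.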

2.4 Graph Neural Networks With Attention (GAT)

2.5 Sub-Graph Embeddings

virtual node

3. Message Passing Neural Networks (MPNN)

approaches.

$e_{vw}$ represents features of the edge from node $v$ to $w$
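A minimal sketch of how edge features enter message passing: each node sums messages computed from its own state, a neighbor's state, and the feature of the connecting edge, then updates its state, and a readout summarizes the graph. The concrete message, update, and readout functions and the three-node example are assumptions chosen for readability.

```python
def mpnn(h, edges, message_fn, update_fn, readout_fn, steps):
    """Generic message passing: messages, then node updates, then a readout.

    h:     dict mapping node -> state
    edges: dict mapping (v, w) -> feature of the edge from v to w
    """
    for _ in range(steps):
        messages = {v: 0.0 for v in h}
        for (v, w), edge_feat in edges.items():
            # node v collects a message from its neighbor w
            messages[v] += message_fn(h[v], h[w], edge_feat)
        h = {v: update_fn(h[v], messages[v]) for v in h}
    return readout_fn(h.values()), h


# Hypothetical undirected path a - b - c, stored as two directed entries per edge
edges = {("a", "b"): 2.0, ("b", "a"): 2.0, ("b", "c"): 0.5, ("c", "b"): 0.5}
h0 = {"a": 1.0, "b": 0.0, "c": -1.0}

y, h1 = mpnn(
    h0,
    edges,
    message_fn=lambda h_v, h_w, e: e * h_w,  # neighbor state scaled by the edge feature
    update_fn=lambda h_v, m: h_v + m,        # add the aggregated message to the state
    readout_fn=sum,                          # graph-level output
    steps=1,
)
```

In a learned model the three lambdas would be neural networks; the surrounding loop stays the same.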

4. GNN In NLP (AMR, SQL, Summarization)

relations between them

graph.

abstracted away

children and siblings can be far away after serialization.

Edge Node

Graph Decoder

A Graph-to-Sequence Model for AMR-to-Text Generation ACL 18

[Figure: the graph-state LSTM unrolled over steps T-1, T, T+1. At each step the LSTM for node $j$ takes the inputs $x_j^i$, $x_j^o$, $h_j^i$, $h_j^o$ and carries a cell state $c$ and a hidden state $h$.]

Cannot learn edge representations!

Graph-to-Sequence Learning using Gated Graph Neural Networks ACL 18

edge-wise parameters

Example sentence: The boy wants the girl to believe him

Levi Graph Transformation

{default, reverse, self}
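The Levi graph transformation with the three edge types can be sketched as follows: every labeled edge becomes a node of its own, and the resulting graph gets default, reverse, and self edges. The function name `levi_graph`, the `label#index` naming of edge nodes, and the AMR triples used for the example sentence are assumptions made for illustration.

```python
def levi_graph(nodes, labeled_edges):
    """Turn each labeled edge (u, label, v) into its own node.

    Returns the new node list and a list of (source, target, kind) edges,
    with kind in {"default", "reverse", "self"}.
    """
    levi_nodes = list(nodes)
    levi_edges = []
    for i, (u, label, v) in enumerate(labeled_edges):
        edge_node = f"{label}#{i}"              # one fresh node per original edge
        levi_nodes.append(edge_node)
        for src, dst in ((u, edge_node), (edge_node, v)):
            levi_edges.append((src, dst, "default"))
            levi_edges.append((dst, src, "reverse"))
    for n in levi_nodes:
        levi_edges.append((n, n, "self"))       # self-loop on every node
    return levi_nodes, levi_edges


# Assumed AMR triples for "The boy wants the girl to believe him"
concepts = ["want-01", "boy", "believe-01", "girl"]
relations = [
    ("want-01", "ARG0", "boy"),
    ("want-01", "ARG1", "believe-01"),
    ("believe-01", "ARG0", "girl"),
    ("believe-01", "ARG1", "boy"),
]
levi_nodes, levi_edges = levi_graph(concepts, relations)
```

After the transformation the relation labels have hidden states like any other node, so the encoder only needs parameters for the three edge kinds rather than one set per relation label.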

reset update

Structural Neural Encoders for AMR-to-text Generation NAACL 19

dir(j, i) indicates the direction of the edge between $x_j$ and $x_i$.

interpreting the meaning of a given structured query language (SQL) query.

SQL-to-Text Generation with Graph-to-Sequence Model EMNLP18

model to better learn the correlation between this graph pattern and the interpretation “...both X and Y higher than Z...”

Graph-based Neural Multi-Document Summarization CoNLL 2017

indicators such as deverbal noun references, event / entity continuations, discourse markers, and coreferent mentions. These features allow characterization of sentence relationships, rather than simply their similarity.

- adjacency matrix
- input node feature matrix: $X = H^{(0)}$
- $H^{(1)}$
- high-level hidden features for each node: $Z = H^{(2)}$
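A two-layer graph convolution that maps the adjacency matrix and the input node feature matrix to hidden features for each node can be sketched as below. It assumes the commonly used renormalized adjacency with self-loops, a ReLU between the layers, hand-picked weight matrices, and no final softmax; these are illustrative choices, not details taken from the slides.

```python
import math


def matmul(A, B):
    """Dense matrix product of two lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def normalize_adjacency(A):
    """Return D^{-1/2} (A + I) D^{-1/2}, with D the degree matrix of A + I."""
    n = len(A)
    A_hat = [[A[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in A_hat]
    return [[A_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def relu(M):
    return [[max(0.0, v) for v in row] for row in M]


def gcn_two_layer(A, X, W0, W1):
    """Propagate features through two graph-convolution layers."""
    A_norm = normalize_adjacency(A)
    H0 = X                                       # input node features
    H1 = relu(matmul(matmul(A_norm, H0), W0))    # first hidden layer
    H2 = matmul(matmul(A_norm, H1), W1)          # output features per node
    return H1, H2


# Hypothetical 2-node graph with a single edge and one-hot input features
A = [[0, 1], [1, 0]]
X = [[1.0, 0.0], [0.0, 1.0]]
W0 = [[1.0, 0.0], [0.0, 1.0]]
W1 = [[1.0], [1.0]]
H1, Z = gcn_two_layer(A, X, W0, W1)
```

Each layer mixes a node's features with those of its neighbors, so after two layers every node's output depends on its two-hop neighborhood.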

Structured Neural Summarization ICLR 19

Toward Abstractive Summarization Using Semantic Representations NAACL15

5. Tools

6. Conclusion
