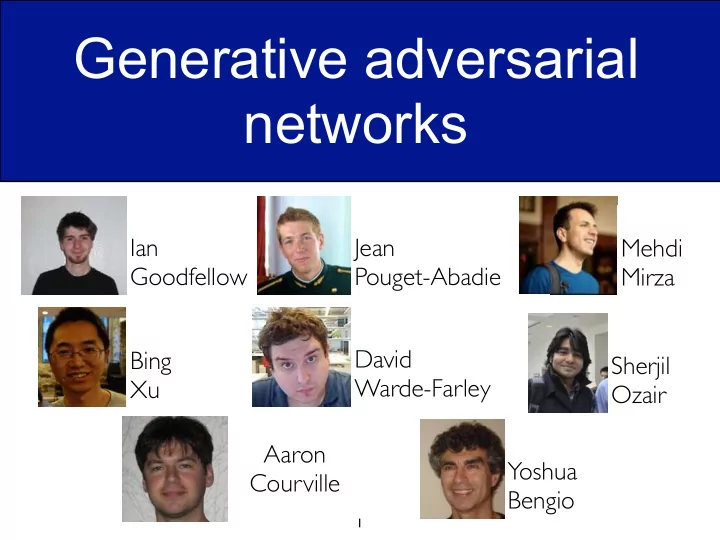

Generative adversarial networks


Ian Goodfellow Jean Pouget-Abadie Mehdi Mirza Bing Xu David Warde-Farley Sherjil Ozair Aaron Courville Yoshua Bengio


2014 NIPS Workshop on Perturbations, Optimization, and Statistics --- Ian Goodfellow

Discriminative deep learning: recipe for success

(Figure: input x.)


… into the ImageNet 1K competition (with extra data).


Maximum likelihood:

$$\theta^* = \arg\max_\theta \frac{1}{m} \sum_{i=1}^{m} \log p(x^{(i)}; \theta)$$
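For intuition, here is a minimal sketch assuming a hypothetical one-dimensional Gaussian model $p(x; \theta) = \mathcal{N}(x; \theta, 1)$ with unit variance. For this model the average log-likelihood is maximized at the sample mean, and a brute-force grid search over θ reproduces that. The data values and grid bounds are illustrative assumptions, not from the talk.

```python
import math

def avg_log_likelihood(theta, xs):
    """Average log N(x; theta, 1) over the samples xs (unit variance assumed)."""
    m = len(xs)
    return sum(-0.5 * math.log(2 * math.pi) - 0.5 * (x - theta) ** 2 for x in xs) / m

def grid_mle(xs, lo=-5.0, hi=5.0, steps=10001):
    """Return the grid value of theta with the highest average log-likelihood."""
    grid = [lo + (hi - lo) * k / (steps - 1) for k in range(steps)]
    return max(grid, key=lambda t: avg_log_likelihood(t, xs))

samples = [0.5, 1.5, 2.0, 0.0]  # hypothetical training examples x^(1), ..., x^(m)
theta_hat = grid_mle(samples)
print(theta_hat, sum(samples) / len(samples))  # grid optimum vs. sample mean
```

For models with latent variables or an intractable normalizer, no such closed form or exhaustive search exists, which is what the following slides address.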


(Figure: model with hidden layers $h^{(1)}$, $h^{(2)}$, $h^{(3)}$ and visible layer $x$.)


$$\frac{d}{d\theta_i} \log p(x) = \frac{d}{d\theta_i}\left[\log \sum_h \tilde{p}(h, x) - \log Z(\theta)\right]$$

$$\frac{d}{d\theta_i} \log Z(\theta) = \frac{\frac{d}{d\theta_i} Z(\theta)}{Z(\theta)}$$
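Writing $\frac{d}{d\theta_i}\tilde{p} = \tilde{p}\,\frac{d}{d\theta_i}\log\tilde{p}$ inside $Z(\theta) = \sum_{h,x}\tilde{p}(h,x)$ turns the identity for $\frac{d}{d\theta_i}\log Z(\theta)$ into an expectation under the model itself: $\mathbb{E}_{(h,x)\sim p}\left[\frac{d}{d\theta_i}\log\tilde{p}(h,x)\right]$. The sketch below checks this on a hypothetical model small enough to enumerate: one binary hidden unit h, one binary visible unit x, and $\tilde{p}(h,x) = \exp(\theta_1 h + \theta_2 x + \theta_3 h x)$. It compares the exact expectation with central finite differences of $\log Z$.

```python
import itertools
import math

def features(h, x):
    """Hypothetical sufficient statistics: biases for h and x, plus an h-x interaction."""
    return (h, x, h * x)

def p_tilde(theta, h, x):
    """Unnormalized probability exp(theta . features(h, x))."""
    return math.exp(sum(t * f for t, f in zip(theta, features(h, x))))

def log_Z(theta):
    """Log partition function, by enumerating all (h, x) in {0, 1}^2."""
    states = itertools.product((0, 1), repeat=2)
    return math.log(sum(p_tilde(theta, h, x) for h, x in states))

def grad_log_Z_exact(theta):
    """d/dtheta_i log Z = (d/dtheta_i Z) / Z = E_model[feature_i]."""
    Z = math.exp(log_Z(theta))
    grad = [0.0] * len(theta)
    for h, x in itertools.product((0, 1), repeat=2):
        p = p_tilde(theta, h, x) / Z  # normalized model probability
        for i, f in enumerate(features(h, x)):
            grad[i] += p * f
    return grad

def grad_log_Z_numeric(theta, eps=1e-6):
    """Central finite differences of log Z, for comparison."""
    grad = []
    for i in range(len(theta)):
        up = list(theta)
        down = list(theta)
        up[i] += eps
        down[i] -= eps
        grad.append((log_Z(up) - log_Z(down)) / (2 * eps))
    return grad

theta = [0.3, -0.7, 1.2]
print(grad_log_Z_exact(theta))
print(grad_log_Z_numeric(theta))
```

Enumeration only works here because the model has four states; with many hidden units the expectation has to be estimated from samples, for example from a Markov chain.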


… correlated ⇒ leads to divergence of learning.


(Figure panels: MNIST dataset; 1st layer features (RBM).)

Coordinated flipping of low-level features


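$$p(x, h) = p(x \mid h^{(1)})\, p(h^{(1)} \mid h^{(2)}) \cdots p(h^{(L-1)} \mid h^{(L)})\, p(h^{(L)})$$

(Figure: directed model with hidden layers $h^{(1)}$, $h^{(2)}$, $h^{(3)}$ and visible layer $x$.)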
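Because every factor in this product is a normalized conditional distribution, an exact sample can be drawn top-down (ancestral sampling) without computing a partition function. A minimal sketch, assuming hypothetical binary units with logistic conditionals (a sigmoid belief network) and random weights; the layer sizes and the uniform prior on $h^{(L)}$ are illustrative choices:

```python
import math
import random

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def sample_layer(rng, weights, biases, above):
    """Sample binary units with p(unit_j = 1 | above) = sigmoid(b_j + sum_k W[j][k] * above[k])."""
    return [int(rng.random() < sigmoid(b + sum(w * a for w, a in zip(row, above))))
            for row, b in zip(weights, biases)]

def ancestral_sample(rng, top_probs, layers):
    """Draw h(L) from its prior, then each lower layer given the one above it, ending at x."""
    h = [int(rng.random() < p) for p in top_probs]  # h(L) ~ p(h(L)), independent Bernoullis
    for weights, biases in layers:  # p(h(L-1) | h(L)), ..., p(x | h(1))
        h = sample_layer(rng, weights, biases, h)
    return h

def random_layer(rng, n_out, n_in):
    """Hypothetical conditional: Gaussian random weights, zero biases."""
    weights = [[rng.gauss(0.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

# Hypothetical sizes (L = 3): h(3) has 2 units, h(2) and h(1) have 3, x has 4.
rng = random.Random(0)
layers = [random_layer(rng, 3, 2), random_layer(rng, 3, 3), random_layer(rng, 4, 3)]
x = ancestral_sample(rng, [0.5, 0.5], layers)
print(x)
```

Sampling is easy in this family; the difficulty moves to inference, since the posterior over $h$ given $x$ is generally intractable.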


… Conference on Learning Representations (ICLR) 2014.

… variational inference in deep latent Gaussian models. ArXiv.

… with gradient backpropagation.


… directly.


… circumstances.

… their opponent’s strategy.

(Figure: Rock, Paper, Scissors payoff matrix, with the moves of "You" against those of "Your opponent".)
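The payoff entries did not survive extraction, so the sketch below assumes the standard rules: win +1, loss -1, tie 0. It illustrates the mixed-strategy idea. Against an opponent who plays uniformly at random, every move earns the same expected payoff, so no unilateral change helps; any predictable pure strategy, by contrast, can be exploited.

```python
from fractions import Fraction

MOVES = ("Rock", "Paper", "Scissors")
# Assumed payoff to "You" (first move) against "Your opponent" (second move).
PAYOFF = {
    ("Rock", "Rock"): 0, ("Rock", "Paper"): -1, ("Rock", "Scissors"): 1,
    ("Paper", "Rock"): 1, ("Paper", "Paper"): 0, ("Paper", "Scissors"): -1,
    ("Scissors", "Rock"): -1, ("Scissors", "Paper"): 1, ("Scissors", "Scissors"): 0,
}

def expected_payoff(my_move, opponent_mix):
    """Expected payoff of a pure move against the opponent's mixed strategy."""
    return sum(prob * PAYOFF[(my_move, theirs)] for theirs, prob in opponent_mix.items())

def best_responses(opponent_mix):
    """Pure moves that maximize expected payoff against opponent_mix, and that payoff."""
    values = {m: expected_payoff(m, opponent_mix) for m in MOVES}
    best = max(values.values())
    return [m for m in MOVES if values[m] == best], best

uniform = {m: Fraction(1, 3) for m in MOVES}
always_rock = {"Rock": Fraction(1), "Paper": Fraction(0), "Scissors": Fraction(0)}

print(best_responses(uniform))      # every move ties, expected payoff 0
print(best_responses(always_rock))  # only Paper is a best response, payoff 1
```

Exact `Fraction` arithmetic avoids floating-point ties being broken by rounding. Both players mixing uniformly is the game's equilibrium: neither can gain by deviating alone.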


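(Figure: the adversarial nets framework.) Input noise z is passed through a differentiable function G to give x sampled from the model; the differentiable function D is applied to it, and D tries to output 0. For x sampled from the data, the same differentiable function D is applied, and D tries to output 1.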
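The two paths can be mimicked with toy stand-ins to show what D and G each see. In this minimal sketch the generator G (an affine map of 1-D noise), the discriminator D (logistic regression), and the data distribution N(2, 0.5²) are all hypothetical. Averaging log D over data samples and log(1 - D) over generated samples gives a minibatch estimate of the value function V(D, G), which D tries to increase and G tries to decrease.

```python
import math
import random

def G(z, scale, shift):
    """Hypothetical generator: an affine map of 1-D noise z."""
    return scale * z + shift

def D(x, w, b):
    """Hypothetical discriminator: logistic regression giving P(x came from data)."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))

def value_estimate(data_batch, noise_batch, gen_params, disc_params):
    """Minibatch estimate of E_data[log D(x)] + E_z[log(1 - D(G(z)))]."""
    real = sum(math.log(D(x, *disc_params)) for x in data_batch) / len(data_batch)
    fake = sum(math.log(1.0 - D(G(z, *gen_params), *disc_params))
               for z in noise_batch) / len(noise_batch)
    return real + fake

rng = random.Random(0)
data_batch = [rng.gauss(2.0, 0.5) for _ in range(256)]   # hypothetical p_data = N(2, 0.5^2)
noise_batch = [rng.gauss(0.0, 1.0) for _ in range(256)]  # p_z = N(0, 1)
print(value_estimate(data_batch, noise_batch, gen_params=(1.0, 0.0), disc_params=(2.0, -2.0)))
```

A discriminator that outputs 1/2 everywhere (w = b = 0) yields exactly $2\log\frac{1}{2} = -\log 4$, whatever G does.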


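$$\min_G \max_D V(D, G) = \mathbb{E}_{x\sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z\sim p_z(z)}[\log(1 - D(G(z)))]$$

Alternative objective for G:

$$\max_G \; \mathbb{E}_{z\sim p_z(z)}[\log D(G(z))]$$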
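The original paper motivates the alternative objective: early in learning, when G is poor, D can reject generated samples with high confidence, and $\log(1 - D(G(z)))$ saturates. A minimal sketch, assuming D outputs $\sigma(a)$ for a logit $a$ computed on a generated sample, compares the derivative of each generator objective with respect to $a$:

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def grad_minimax(a):
    """d/da log(1 - sigmoid(a)) = -sigmoid(a); G descends this term."""
    return -sigmoid(a)

def grad_alternative(a):
    """d/da log(sigmoid(a)) = 1 - sigmoid(a); G ascends this term."""
    return 1.0 - sigmoid(a)

# Very negative a means D confidently labels the generated sample as fake.
for a in (-6.0, 0.0, 6.0):
    print(f"D(G(z)) = {sigmoid(a):.4f}  "
          f"minimax grad = {grad_minimax(a):+.4f}  "
          f"alternative grad = {grad_alternative(a):+.4f}")
```

When D(G(z)) is near 0, the minimax gradient is near 0 while the alternative's is near 1. The paper notes both objectives share the same fixed point of the dynamics of G and D.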


(Figure: four panels, "Poorly fit model", "After updating D", "After updating G", and "Mixed strategy equilibrium", each showing the data distribution, the model distribution, and the discriminator output $p_D(\text{data})$.)
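In the original paper, for a fixed G the optimal discriminator is $D^*(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}$. Substituting it gives $V(D^*, G) = -\log 4 + 2\,\mathrm{JSD}(p_{\text{data}} \,\|\, p_g)$, so the "Mixed strategy equilibrium" is reached when the model distribution equals the data distribution and $p_D(\text{data}) = 1/2$ everywhere. The sketch checks both facts on hypothetical discrete distributions over three points; it is an illustration, not the paper's proof.

```python
import math

def optimal_discriminator(p_data, p_model):
    """D*(x) = p_data(x) / (p_data(x) + p_model(x)); assumes the sum is positive at every x."""
    return [pd / (pd + pm) for pd, pm in zip(p_data, p_model)]

def value(p_data, p_model, d):
    """V(D, G) = E_data[log D(x)] + E_model[log(1 - D(x))] on a discrete support."""
    real = sum(pd * math.log(dx) for pd, dx in zip(p_data, d) if pd > 0)
    fake = sum(pm * math.log(1.0 - dx) for pm, dx in zip(p_model, d) if pm > 0)
    return real + fake

def kl(p, q):
    """KL divergence (natural log) between discrete distributions."""
    return sum(a * math.log(a / b) for a, b in zip(p, q) if a > 0)

def jsd(p, q):
    """Jensen-Shannon divergence: average KL to the mixture (p + q) / 2."""
    m = [(a + b) / 2 for a, b in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

p_data = [0.1, 0.4, 0.5]       # hypothetical data distribution on three points
poor_model = [0.6, 0.3, 0.1]   # hypothetical poorly fit generator
for p_model in (poor_model, p_data):  # second case: model matches data
    d_star = optimal_discriminator(p_data, p_model)
    v = value(p_data, p_model, d_star)
    print(d_star, v, -math.log(4) + 2 * jsd(p_data, p_model))
```

Since the JSD is zero only when the two distributions match, $-\log 4$ is the lowest value G can reach against an optimal D.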



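Parzen window-based log-likelihood estimates (higher is better):

| Model | MNIST | TFD |
|---|---|---|
| DBN [3] | 138 ± 2 | 1909 ± 66 |
| Stacked CAE [3] | 121 ± 1.6 | 2110 ± 50 |
| Deep GSN [6] | 214 ± 1.1 | 1890 ± 29 |
| Adversarial nets | 225 ± 2 | 2057 ± 26 |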
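In the original paper, these scores are Parzen window-based log-likelihood estimates. A Gaussian kernel density is fitted to samples generated by each model, the mean log-likelihood of test examples is reported, and the kernel width σ is chosen on a validation set. The paper also notes that this estimator has somewhat high variance and does not work well in high-dimensional spaces. Below is a minimal pure-Python sketch of the estimator with hypothetical 2-D points; it is not the authors' evaluation code.

```python
import math

def parzen_log_likelihood(test_points, model_samples, sigma):
    """Mean log-density of test_points under an isotropic Gaussian Parzen window
    centered on model_samples. Points are equal-length tuples."""
    n = len(model_samples)
    d = len(model_samples[0])
    # log(1/n) plus the log normalizer of a d-dimensional Gaussian with std sigma
    log_norm = -math.log(n) - 0.5 * d * math.log(2 * math.pi * sigma ** 2)
    total = 0.0
    for x in test_points:
        exponents = [-sum((xi - si) ** 2 for xi, si in zip(x, s)) / (2 * sigma ** 2)
                     for s in model_samples]
        top = max(exponents)  # log-sum-exp shift for numerical stability
        total += log_norm + top + math.log(sum(math.exp(e - top) for e in exponents))
    return total / len(test_points)

samples = [(0.0, 0.0), (1.0, 1.0), (0.5, -0.5)]  # hypothetical generator samples
validation = [(0.2, 0.1), (0.9, 0.8)]           # hypothetical held-out data
test = [(0.1, 0.0), (1.1, 0.9)]                 # hypothetical test data
best_sigma = max((0.1, 0.2, 0.5, 1.0),
                 key=lambda s: parzen_log_likelihood(validation, samples, s))
print(best_sigma, parzen_log_likelihood(test, samples, best_sigma))
```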


(Figure: samples from adversarial nets trained on MNIST, TFD, CIFAR-10 (fully connected), and CIFAR-10 (convolutional).)


… along the path between A and B

… desired.
