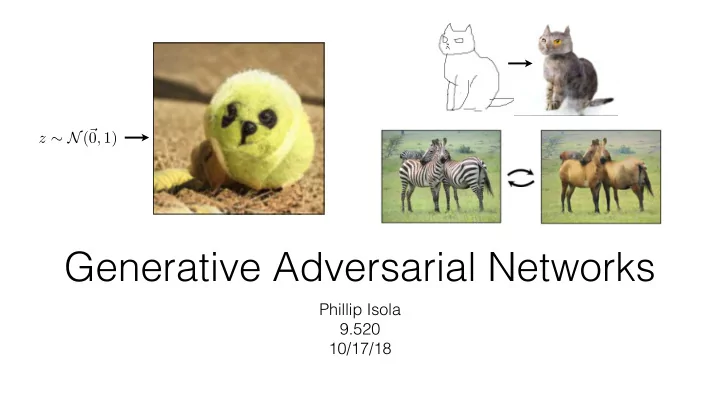

Generative Adversarial Networks

Phillip Isola 9.520 10/17/18

Image classification

Classifier: image X → label Y (“Fish”)

Image generation

Generator: label Y (“Fish”) → image X

Useful for lots of problems beyond sampling random images!

Model of high-dimensional unobserved variables

$P(X \mid Y = y)$

Gaussian noise

$z \sim \mathcal{N}(\vec{0}, 1)$ → Synthesized image

“bird” → Synthesized image

“A yellow bird on a branch” → Synthesized image

Object labeling

[Long et al. 2015, …]

Edge Detection

[Xie et al. 2015, …]

Text-to-photo

[Reed et al. 2014, …]

“this small bird has a pink breast and crown…”

Future frame prediction

[Mathieu et al. 2016, …]

possible outputs

$\arg\min_F \; \mathbb{E}_{x,y}[L(F(x), y)]$

Objective function (loss) $L$: “What should I do?”

Neural network $F$: “How should I do it?”

Training data: pairs of input $x$ and output $y$
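As a rough illustration of the objective on this slide, the sketch below estimates $\mathbb{E}_{x,y}[L(F(x), y)]$ on a toy dataset and lowers it by gradient descent. The linear form of `F`, the squared-error `L`, the synthetic data, the step size, and all names are assumptions made for the example; none of them come from the slides.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical training data: pairs (x, y) with y roughly 3x + 1.
x = rng.uniform(-1.0, 1.0, size=200)
y = 3.0 * x + 1.0 + 0.1 * rng.normal(size=200)

def F(x, params):
    """The 'network' (assumed here to be a line): w * x + b."""
    w, b = params
    return w * x + b

def L(pred, target):
    """The objective function (loss): squared error per example."""
    return (pred - target) ** 2

def empirical_risk(params):
    """Sample average standing in for E_{x,y}[L(F(x), y)]."""
    return float(np.mean(L(F(x, params), y)))

params = np.array([0.0, 0.0])
initial_risk = empirical_risk(params)
for _ in range(500):
    residual = F(x, params) - y
    # Gradient of the mean squared error with respect to (w, b).
    grad = np.array([np.mean(2 * residual * x), np.mean(2 * residual)])
    params = params - 0.1 * grad
final_risk = empirical_risk(params)
```

The loss `L` says what the model should achieve, while `F` and the update loop determine how it gets there; swapping `L` changes the answer without touching `F`.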

Image colorization [Zhang, Isola, Efros, ECCV 2016]

Input, Output, Ground truth

Objectives: L2 regression; cross entropy objective, with colorfulness term (color distribution cross-entropy loss with colorfulness enhancing term, Zhang et al. 2016)

Super-resolution [Johnson, Alahi, Li, ECCV 2016]

Objectives: L2 regression; deep feature covariance matching objective

(classifier)

[Goodfellow, Pouget-Abadie, Mirza, Xu, Warde-Farley, Ozair, Courville, Bengio 2014]

Real photos Generated images

…

Generator

G(x)

G tries to synthesize fake images that fool D. D tries to identify the fakes.

Generator Discriminator

G(x)

real or fake?

D

fake (0.9)

G(x)

+

real (0.1)

G tries to synthesize fake images that fool D:

G(x)

real or fake?

G tries to synthesize fake images that fool the best D:

$\arg\min_G \max_D$

Loss Function

G(x)

real or fake?

$\arg\min_G \max_D$

real!

(“Aquarius”)

real or fake pair?

$\arg\min_G \max_D \; \log D(x, G(x)) + \log(1 - D(x, y))$

fake pair: $(x, G(x))$

real pair: $(x, y)$
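A minimal sketch of how the pair objective above could be evaluated on a batch. It assumes, following the "fake (0.9)" and "real (0.1)" labels earlier in the deck, that D outputs the probability that a pair is fake. The function name `cgan_value`, the `eps` guard, and the example scores are all hypothetical.

```python
import numpy as np

def cgan_value(d_fake_pairs, d_real_pairs, eps=1e-12):
    """Batch average of log D(x, G(x)) + log(1 - D(x, y)).

    Both arguments hold D's outputs in (0, 1), read here as the
    probability that a pair is fake (an assumed convention).
    """
    d_fake_pairs = np.asarray(d_fake_pairs, dtype=float)
    d_real_pairs = np.asarray(d_real_pairs, dtype=float)
    terms = np.log(d_fake_pairs + eps) + np.log(1.0 - d_real_pairs + eps)
    return float(np.mean(terms))

# A confident, correct D: fake pairs scored 0.9, real pairs scored 0.1.
good_d = cgan_value([0.9, 0.9], [0.1, 0.1])

# A fooled D: it cannot tell the pairs apart and outputs 0.5 everywhere.
fooled_d = cgan_value([0.5, 0.5], [0.5, 0.5])
```

D maximizes this value, so it prefers the first case; G minimizes it, so it pushes toward the second, where the value is $-\log 4$.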

Conditional GAN

Stable training + fast convergence

G(x)

Input → Output (three examples)

Data from [Russakovsky et al. 2015]

Ivy Tasi @ivymyt, Vitaly Vidmirov @vvid

Target Input

Output

Each pixel treated as independent:

$\prod_i p(y_i \mid x)$

CRF: models pairwise configurations

$\frac{1}{Z} \prod_{i,j} p(y_i, y_j \mid x)$

Model joint configuration
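To make the difference between the factorizations concrete, here is a small sketch with two binary pixels that always agree. The toy distribution and variable names are assumptions for illustration. The independent model, built from per-pixel marginals, puts half of its probability on configurations that never occur.

```python
import numpy as np

# Hypothetical joint distribution over two binary pixels (y1, y2):
# the pixels always agree, so only (0, 0) and (1, 1) ever occur.
joint = np.array([[0.5, 0.0],
                  [0.0, 0.5]])  # indexed as joint[y1, y2]

# Per-pixel marginals, as used by a model that treats pixels independently.
p_y1 = joint.sum(axis=1)
p_y2 = joint.sum(axis=0)

# Independent model: product of the per-pixel distributions.
independent = np.outer(p_y1, p_y2)

# Probability mass the independent model places on configurations
# that the true joint distribution never produces.
impossible_mass = float(independent[joint == 0].sum())
```

A model of the joint configuration can represent this constraint exactly; a per-pixel model cannot, however well each marginal is fit.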

A GAN, with sufficient capacity, samples from the full joint distribution when perfectly optimized. Most generative models have this property! Give them sufficient capacity and infinite data, and they are the complete solution to prediction problems.

1/0

N pixels × N pixels

Rather than penalizing if the output image looks fake, penalize if each N × N patch of the output looks fake.

[Li & Wand 2016] [Shrivastava et al. 2017] [Isola et al. 2017]

Input 1x1 Discriminator

Data from [Tylecek, 2013]

Input 16x16 Discriminator

Data from [Tylecek, 2013]

Input 70x70 Discriminator

Data from [Tylecek, 2013]

Input Full image Discriminator

Data from [Tylecek, 2013]
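The patch sizes above correspond to the receptive field of one discriminator output. The sketch below computes that receptive field from a stack of convolution layers. The specific layer stacks (kernel sizes and strides) are assumptions chosen to reproduce 1, 16 and 70 pixels; the slides do not state the architectures.

```python
def receptive_field(layers):
    """Receptive field, in input pixels, of a single output unit.

    `layers` is a list of (kernel_size, stride) pairs, first layer first.
    """
    rf = 1
    # Walk backward from the output toward the input.
    for kernel, stride in reversed(layers):
        rf = (rf - 1) * stride + kernel
    return rf

# Assumed layer stacks; not given on the slides.
pixel_1 = [(1, 1), (1, 1)]
patch_16 = [(4, 2), (4, 1), (4, 1)]
patch_70 = [(4, 2), (4, 2), (4, 2), (4, 1), (4, 1)]

sizes = [receptive_field(stack) for stack in (pixel_1, patch_16, patch_70)]
```

Each output unit then emits one 1/0 decision for its own patch, and the patch decisions are combined into the loss.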


possible outputs

→ Use a deep net, D, to model output!

Can we generate images from scratch?

Gaussian noise $z \sim \mathcal{N}(\vec{0}, 1)$ → Synthesized image

Generator

G(x)

[Goodfellow et al., 2014]

G tries to synthesize fake images that fool D. D tries to identify the fakes.

Generator Discriminator

G(x)

real or fake?

[Goodfellow et al., 2014]

GANs are implicit generative models

“generative model” of the data x

Noise distribution: $z \sim \mathcal{N}(0, 1)$

Data distribution: $x \sim p(x)$

Samples from a perfectly optimized GAN are samples from the data distribution: $G(z) \sim p(x)$
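A minimal sketch of what "implicit" means here: the model is used only by drawing noise and pushing it through G, and no density is ever evaluated. The affine `G` and its parameters `mu` and `sigma` are hypothetical stand-ins for a trained network.

```python
import numpy as np

rng = np.random.default_rng(0)

def G(z, mu=2.0, sigma=0.5):
    """Hypothetical generator: a fixed affine map of the noise."""
    return mu + sigma * z

# z ~ N(0, 1): the noise distribution.
z = rng.standard_normal(100_000)

# Samples from the model; p(x) itself is never computed.
samples = G(z)

sample_mean = float(samples.mean())
sample_std = float(samples.std())
```

In this toy case the samples follow a Gaussian with mean `mu` and standard deviation `sigma`; for a deep generator the induced distribution has no such closed form, but sampling works the same way.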

Progressive GAN [Karras et al., 2018]


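Proof

Proposition 1. For $G$ fixed, the optimal discriminator $D$ is

$$D^*_G(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}$$

The training criterion for $D$, given any $G$, is to maximize $V(G, D)$:

$$V(G, D) = \int_x p_{\text{data}}(x) \log(D(x))\,dx + \int_z p_z(z) \log(1 - D(g(z)))\,dz = \int_x p_{\text{data}}(x) \log(D(x)) + p_g(x) \log(1 - D(x))\,dx$$

For any $(a, b) \in \mathbb{R}^2 \setminus \{0, 0\}$, the function $y \to a \log(y) + b \log(1 - y)$ achieves its maximum in $[0, 1]$ at $\frac{a}{a+b}$. The discriminator does not need to be defined outside of $\mathrm{Supp}(p_{\text{data}}) \cup \mathrm{Supp}(p_g)$, concluding the proof.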
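The key step, that $a \log(y) + b \log(1 - y)$ peaks at $a/(a+b)$, can be checked numerically. In this sketch the values of `a` and `b` are hypothetical density values at a single point, and the grid search is only a sanity check, not part of the proof.

```python
import numpy as np

def pointwise_objective(y, a, b):
    """a * log(y) + b * log(1 - y): the integrand of V(G, D) at one x."""
    return a * np.log(y) + b * np.log(1.0 - y)

# Hypothetical density values at a single point x.
a = 0.3  # stands in for p_data(x)
b = 0.1  # stands in for p_g(x)

# Brute-force search over candidate discriminator outputs in (0, 1).
ys = np.linspace(0.001, 0.999, 999)
best_y = float(ys[np.argmax(pointwise_objective(ys, a, b))])

# Closed-form maximizer from the proof.
closed_form = a / (a + b)
```

Applying this pointwise with $a = p_{\text{data}}(x)$ and $b = p_g(x)$ gives the optimal discriminator $D^*_G(x)$.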

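$$\begin{aligned}
C(G) &= \max_D V(G, D) \\
&= \mathbb{E}_{x \sim p_{\text{data}}}[\log D^*_G(x)] + \mathbb{E}_{z \sim p_z}[\log(1 - D^*_G(G(z)))] \\
&= \mathbb{E}_{x \sim p_{\text{data}}}[\log D^*_G(x)] + \mathbb{E}_{x \sim p_g}[\log(1 - D^*_G(x))] \\
&= \mathbb{E}_{x \sim p_{\text{data}}}\left[\log \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}\right] + \mathbb{E}_{x \sim p_g}\left[\log \frac{p_g(x)}{p_{\text{data}}(x) + p_g(x)}\right]
\end{aligned}$$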

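The global minimum of $C(G)$ is achieved if and only if $p_g = p_{\text{data}}$.

$$C(G) = -\log(4) + KL\left(p_{\text{data}} \,\middle\|\, \frac{p_{\text{data}} + p_g}{2}\right) + KL\left(p_g \,\middle\|\, \frac{p_{\text{data}} + p_g}{2}\right)$$

where $KL$ is the Kullback–Leibler divergence. We recognize in the previous expression the Jensen–Shannon divergence between the model’s distribution and the data generating process:

$$C(G) = -\log(4) + 2 \cdot JSD(p_{\text{data}} \,\|\, p_g)$$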

Proof

$JSD(p_{\text{data}} \,\|\, p_g) \geq 0$, with equality $\iff p_g = p_{\text{data}}$

$p_g = p_{\text{data}}$ is the unique global minimizer of the GAN objective.
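The identity $C(G) = -\log(4) + 2 \cdot JSD(p_{\text{data}} \,\|\, p_g)$ can be verified on small discrete distributions. The sketch below does this; the two three-outcome distributions and the helper names are assumptions for illustration.

```python
import numpy as np

def kl(p, q):
    """Kullback-Leibler divergence KL(p || q) for discrete distributions."""
    mask = p > 0
    return float(np.sum(p[mask] * np.log(p[mask] / q[mask])))

def jsd(p, q):
    """Jensen-Shannon divergence between p and q."""
    m = 0.5 * (p + q)
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def c_of_g(p_data, p_g):
    """C(G) = V(G, D*) with D* = p_data / (p_data + p_g)."""
    d_star = p_data / (p_data + p_g)
    return float(np.sum(p_data * np.log(d_star))
                 + np.sum(p_g * np.log(1.0 - d_star)))

# Hypothetical discrete distributions over three outcomes.
p_data = np.array([0.5, 0.3, 0.2])
p_g = np.array([0.2, 0.2, 0.6])

lhs = c_of_g(p_data, p_g)
rhs = -np.log(4.0) + 2.0 * jsd(p_data, p_g)

# When the generator matches the data, C(G) reaches its minimum, -log(4).
at_optimum = c_of_g(p_data, p_data)
```

Any mismatch between `p_g` and `p_data` makes the JSD term positive, so `lhs` sits strictly above `at_optimum`.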

[Theis et al. 2016]

[Larsen et al. 2016]

[Goodfellow et al., 2014]

D: real (0.1), fake (0.9)

$\log D(G(z))$

EBGAN, WGAN, LSGAN, etc

f: low score, high score

Input → Possible outputs: ? ? ? ? ?

G(x)

$z \sim \mathcal{N}(\vec{0}, 1)$

InfoGAN [Chen et al. 2016]

BiCycleGAN [Zhu et al., NIPS 2017]

y

Encourages z to relay information about the target.

Labels Randomly generated facades

[BiCycleGAN, Zhu et al., NIPS 2017]

possible outputs

→ Use a deep net, D, to model output!

→ Generator is stochastic, learns to match data distribution

Jun-Yan Zhu, Taesung Park

real or fake pair?

$\arg\min_G \max_D \; \log D(x, G(x)) + \log(1 - D(x, y))$

real or fake?

$\arg\min_G \max_D$

Usually loss functions check if output matches a target instance. GAN loss checks if output is part of an admissible set.

Gaussian → Target distribution

Horses → Zebras

G(x)

[Zhu et al. 2017], [Yi et al. 2017], [Kim et al. 2017]

Slide credit: Ming-Yu Liu


[Galanti, Wolf, Benaim, 2018]

Conditional Entropy

“ALICE: Towards Understanding Adversarial Learning for Joint Distribution Matching” [Li et

High Conditional Entropy Low Conditional Entropy


[Tzeng et al. 2014]

Simulated data

Real data

[Richter*, Vineet* et al. 2016] [Krähenbühl et al. 2018]

CycleGAN

Training data

[Hoffman, Tzeng, Park, Zhu, Isola, Saenko, Darrell, Efros, 2018]


Training data

CycleGAN FCN

[Hoffman, Tzeng, Park, Zhu, Isola, Saenko, Darrell, Efros, 2018]

Input MR, Generated CT, Ground truth CT