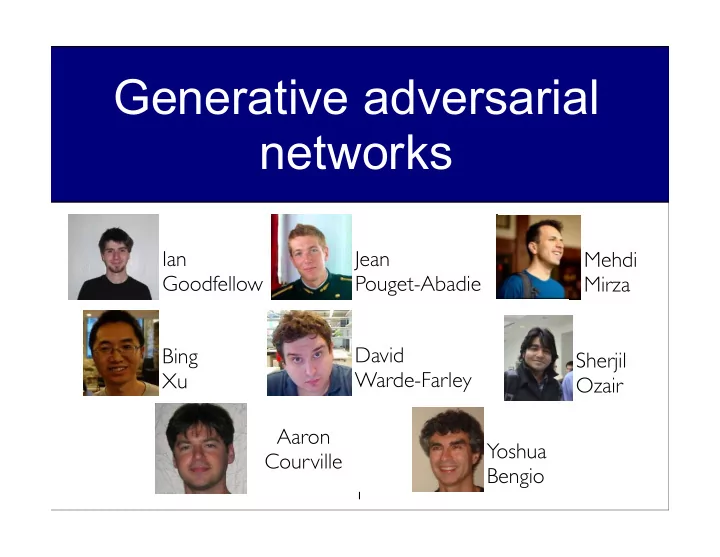

Generative adversarial networks

Ian Goodfellow Jean Pouget-Abadie Mehdi Mirza Bing Xu David Warde-Farley Sherjil Ozair Aaron Courville Yoshua Bengio

2014 NIPS Workshop on Perturbations, Optimization, and Statistics --- Ian Goodfellow

Discriminative deep learning: recipe for success

into the ImageNet 1K competition (with extra data).

Figure: model with hidden layers h(1), h(2), h(3) and visible units x.

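$$\frac{d}{d\theta_i} \log p(x) = \frac{d}{d\theta_i}\left[\log \sum_h \tilde{p}(h, x) - \log Z(\theta)\right]$$

$$\frac{d}{d\theta_i} \log Z(\theta) = \frac{\frac{d}{d\theta_i} Z(\theta)}{Z(\theta)}$$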
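The gradient of $\log Z(\theta)$ is the term that makes undirected models hard to train. Since $\frac{d}{d\theta_i} Z(\theta) = \sum \frac{d}{d\theta_i}\tilde{p} = \sum \tilde{p}\,\frac{d}{d\theta_i}\log\tilde{p}$, dividing by $Z(\theta)$ turns it into an expectation under the model: $\frac{d}{d\theta_i}\log Z(\theta) = \mathbb{E}_{p}\left[\frac{d}{d\theta_i}\log\tilde{p}\right]$. The sketch below checks this numerically on a hypothetical toy log-linear model over three binary variables with no hidden units, where $Z(\theta)$ can be summed by brute force. In realistic models that sum is intractable, so the expectation has to be estimated from samples of the model (e.g. with MCMC).

```python
import itertools

import numpy as np

# Hypothetical toy model over x in {0,1}^3: p~(x) = exp(theta . x), Z = sum_x p~(x).
STATES = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)

def log_Z(theta):
    """Brute-force log partition function (only feasible for tiny state spaces)."""
    scores = STATES @ theta
    m = scores.max()
    return m + np.log(np.exp(scores - m).sum())

def grad_log_Z_expectation(theta):
    """d/dtheta log Z = E_{x~p}[d/dtheta log p~(x)], which is E_{x~p}[x] here."""
    probs = np.exp(STATES @ theta - log_Z(theta))
    return probs @ STATES

def grad_log_Z_finite_diff(theta, eps=1e-6):
    """Central finite differences of log Z, used only to check the identity."""
    grad = np.zeros_like(theta)
    for i in range(theta.size):
        step = np.zeros_like(theta)
        step[i] = eps
        grad[i] = (log_Z(theta + step) - log_Z(theta - step)) / (2 * eps)
    return grad

theta = np.array([0.5, -1.0, 2.0])
print(grad_log_Z_expectation(theta))
print(grad_log_Z_finite_diff(theta))
```

Because the three variables are independent in this toy model, both estimates also equal the logistic sigmoid of each $\theta_i$.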

correlated ⇒ leads to divergence of learning.

MNIST dataset 1st layer features (RBM)

Coordinated flipping of low-level features

$$p(x, h) = p(x \mid h^{(1)})\, p(h^{(1)} \mid h^{(2)}) \cdots p(h^{(L-1)} \mid h^{(L)})\, p(h^{(L)})$$

Figure: directed model with layers h(1), h(2), h(3) and visible units x.
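Because this factorization is directed, exact samples can be drawn by ancestral sampling: sample $h^{(L)}$ from its prior, then each layer given the one above, ending with $x$. A minimal sketch, assuming binary units with sigmoid conditionals (a sigmoid belief network); the slide does not fix the form of each conditional, and the layer sizes and random weights are hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def ancestral_sample(prior_logits, weights, biases, rng):
    """Sample h(L), ..., h(1), x top-down from a sigmoid belief network.

    prior_logits: logits of the factorized Bernoulli prior p(h(L)).
    weights[k], biases[k]: parameters of p(layer below | layer above), listed
    from the top conditional p(h(L-1) | h(L)) down to p(x | h(1)).
    """
    h = (rng.random(prior_logits.shape) < sigmoid(prior_logits)).astype(float)
    layers = [h]
    for W, b in zip(weights, biases):
        probs = sigmoid(h @ W + b)  # Bernoulli means of the layer below
        h = (rng.random(probs.shape) < probs).astype(float)
        layers.append(h)
    return layers  # layers[-1] is the visible sample x

rng = np.random.default_rng(0)
sizes = [4, 6, 8, 10]  # hypothetical widths of h(3), h(2), h(1), x
weights = [rng.normal(size=(a, b)) for a, b in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(b) for b in sizes[1:]]
layers = ancestral_sample(np.zeros(sizes[0]), weights, biases, rng)
x = layers[-1]
print(x)
```

Each step needs only one conditional at a time, so sampling is cheap even though computing $p(x)$ itself requires summing over all hidden configurations.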

Conference on Learning Representations (ICLR) 2014.

variational inference in deep latent Gaussian models. ArXiv.

with gradient backpropagation.

directly.

circumstances.

their opponent’s strategy.

Figure: payoff matrix for Rock, Paper, Scissors, with one axis for You and the other for Your opponent.
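In a Nash equilibrium, neither player can improve their payoff by changing strategy on their own. Rock-paper-scissors has no pure-strategy equilibrium, but the mixed strategy that plays each move with probability 1/3 is one. The sketch below assumes the usual scoring (+1 for a win, -1 for a loss, 0 for a tie), which may differ from the entries on the original slide, and checks that every pure strategy earns 0 in expectation against the uniform mix, whereas a predictable opponent can be exploited.

```python
import numpy as np

# Payoff to "You" (rows) against "Your opponent" (columns), order Rock, Paper, Scissors.
# Assumed standard scoring: +1 win, -1 loss, 0 tie.
PAYOFF = np.array([
    [0, -1, 1],   # Rock: ties Rock, loses to Paper, beats Scissors
    [1, 0, -1],   # Paper: beats Rock, ties Paper, loses to Scissors
    [-1, 1, 0],   # Scissors: loses to Rock, beats Paper, ties Scissors
])

def expected_payoffs(opponent_mix):
    """Expected payoff of each of your pure strategies against a mixed strategy."""
    return PAYOFF @ opponent_mix

uniform = np.full(3, 1 / 3)
print(expected_payoffs(uniform))       # every pure strategy earns 0 in expectation
always_rock = np.array([1.0, 0.0, 0.0])
print(expected_payoffs(always_rock))   # Paper exploits an opponent who always plays Rock
```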

Figure: input noise z is passed through a differentiable function G to give x sampled from the model. A differentiable function D is applied both to x sampled from the model and to x sampled from data, and D tries to tell the two apart.

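$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$$

In practice, rather than training G to minimize $\log(1 - D(G(z)))$, G can be trained to maximize $\log D(G(z))$, which has the same fixed point but gives much stronger gradients early in learning:

$$\max_G \mathbb{E}_{z \sim p_z(z)}[\log D(G(z))]$$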
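To see why the alternative generator objective helps, write $D(G(z)) = \sigma(a)$, where $a$ is the discriminator's logit. Then $\frac{d}{da}\log(1 - \sigma(a)) = -\sigma(a)$, which vanishes when D confidently rejects generated samples (as it tends to early in training), while $\frac{d}{da}\log\sigma(a) = 1 - \sigma(a)$ stays close to 1. The NumPy sketch below estimates $V(D, G)$ from discriminator outputs and compares the two gradients; the logit value is a hypothetical example. When $D = 1/2$ everywhere, $V = -\log 4$, the value at the global optimum in the GAN paper.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def value_fn(d_real, d_fake):
    """Monte Carlo estimate of V(D, G) from discriminator outputs in (0, 1)."""
    return np.mean(np.log(d_real)) + np.mean(np.log1p(-d_fake))

# Gradients of the two generator objectives w.r.t. the discriminator's logit a,
# where D(G(z)) = sigmoid(a).
def grad_minimax(a):
    """d/da log(1 - sigmoid(a)) = -sigmoid(a): vanishes when D rejects the sample."""
    return -sigmoid(a)

def grad_non_saturating(a):
    """d/da log sigmoid(a) = 1 - sigmoid(a): stays near 1 when D rejects the sample."""
    return 1.0 - sigmoid(a)

logit = -6.0  # D confidently labels G(z) as fake (D(G(z)) is about 0.0025)
print(grad_minimax(logit), grad_non_saturating(logit))
print(value_fn(np.array([0.5]), np.array([0.5])))  # equals -log 4
```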

Figure (learning process): panels "Poorly fit model", "After updating D", "After updating G", and "Mixed strategy equilibrium", each showing the data distribution, the model distribution, and p_D(data).
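For a fixed G, the GAN paper shows that the optimal discriminator is $D^*(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}$, so once the model distribution matches the data distribution, $D^*(x) = 1/2$ everywhere, as in the mixed strategy equilibrium panel. A minimal sketch, assuming one-dimensional Gaussian densities for both distributions (the specific means and widths are hypothetical):

```python
import numpy as np

def gaussian_pdf(x, mean, std):
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2 * np.pi))

def optimal_discriminator(x, p_data, p_model):
    """D*(x) = p_data(x) / (p_data(x) + p_model(x)) for a fixed generator."""
    pd, pm = p_data(x), p_model(x)
    return pd / (pd + pm)

def p_data(x):
    return gaussian_pdf(x, 0.0, 1.0)

def poor_fit(x):
    return gaussian_pdf(x, 1.5, 0.5)  # hypothetical poorly fit model

xs = np.linspace(-3, 3, 7)
d_poor = optimal_discriminator(xs, p_data, poor_fit)  # near 1 where only data has mass
d_eq = optimal_discriminator(xs, p_data, p_data)      # model matches data: 1/2 everywhere
print(d_poor)
print(d_eq)
```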

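Parzen window-based log-likelihood estimates (higher is better):

| Model | MNIST | TFD |
|---|---|---|
| DBN [3] | 138 ± 2 | 1909 ± 66 |
| Stacked CAE [3] | 121 ± 1.6 | 2110 ± 50 |
| Deep GSN [6] | 214 ± 1.1 | 1890 ± 29 |
| Adversarial nets | 225 ± 2 | 2057 ± 26 |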
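These log-likelihoods are obtained by fitting a Gaussian Parzen window to samples generated by each model and reporting the log-likelihood of test data under it, with the bandwidth σ chosen by cross-validation on a validation set; the GAN paper notes that this estimator has fairly high variance and does not work well in high-dimensional spaces. A minimal NumPy sketch of the estimator itself, assuming an isotropic Gaussian kernel and data already flattened to vectors (the σ search and data loading are omitted, and the random arrays are stand-ins):

```python
import numpy as np

def log_mean_exp(a, axis):
    """Numerically stable log(mean(exp(a))) along an axis."""
    m = np.max(a, axis=axis, keepdims=True)
    return np.squeeze(m, axis=axis) + np.log(np.mean(np.exp(a - m), axis=axis))

def parzen_log_likelihood(test_x, samples, sigma):
    """Mean log-likelihood of test_x under an isotropic Gaussian Parzen window
    centred on each generated sample (rows are examples, columns are dimensions)."""
    d = test_x.shape[1]
    diff = test_x[:, None, :] - samples[None, :, :]   # (n_test, n_samples, d)
    sq_dist = np.sum(diff ** 2, axis=-1)              # (n_test, n_samples)
    log_kernel = -sq_dist / (2 * sigma ** 2) - d * np.log(sigma * np.sqrt(2 * np.pi))
    return np.mean(log_mean_exp(log_kernel, axis=1))

rng = np.random.default_rng(0)
generated = rng.normal(size=(500, 2))  # stand-in for samples drawn from G
test_set = rng.normal(size=(100, 2))   # stand-in for held-out test data
print(parzen_log_likelihood(test_set, generated, sigma=0.3))
```

The pairwise difference array grows as n_test × n_samples × d, so real evaluations would process the test set in batches.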

Figure: samples from models trained on MNIST, TFD, CIFAR-10 (fully connected), and CIFAR-10 (convolutional).

along the path between A and B

desired.
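Assuming A and B here denote two points in the generator's latent space, one common way to build such a path is to interpolate linearly between their codes and decode every intermediate point with G; the GAN paper shows MNIST digits obtained by linear interpolation in z space. A minimal sketch of the interpolation step only; the 100-dimensional uniform codes are hypothetical and no trained G is included:

```python
import numpy as np

def interpolate_latents(z_a, z_b, num_steps):
    """Points on the straight line from z_a to z_b (both endpoints included)."""
    alphas = np.linspace(0.0, 1.0, num_steps)[:, None]
    return (1.0 - alphas) * z_a[None, :] + alphas * z_b[None, :]

rng = np.random.default_rng(0)
z_a, z_b = rng.uniform(-1, 1, size=(2, 100))  # hypothetical 100-d latent codes
path = interpolate_latents(z_a, z_b, num_steps=8)
# Each row of `path` would be passed through a trained generator G to render the path.
print(path.shape)
```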

Emily Denton1∗, Soumith Chintala2∗, Arthur Szlam2, Rob Fergus2

1New York University 2Facebook AI Research ∗Denotes equal contribution

December 16, 2015

Laplacian Pyramid of Generative Adversarial Nets

Parametric generative model of natural images:
- It is difficult to generate large natural images in one shot, but we can exploit their multi-scale structure.
- We combine the power of generative adversarial networks (GANs) with a multi-scale image representation (the Laplacian pyramid).

We have access to x ∼ p_data(x) through the training set, and want to learn a model x ∼ p_model(x) that is similar to p_data:
- samples drawn from p_model reflect the structure of p_data;
- samples from the true data distribution have high likelihood under p_model.

Unsupervised representation learning:
- the learned representation can be transferred to discriminative tasks, retrieval, clustering, etc.

Training a network with both discriminative and generative criteria:
- useful when there is very little labeled data;
- acts as regularization.

Understanding data:
- density estimation, ...

Figure: examples from Goodfellow et al. (2014), Sohl-Dickstein et al. (2015), and Gregor et al. (2015).

Generative model G: captures the data distribution.
Discriminative model D: trained to distinguish between real and fake samples; defines the loss function for G.

D is trained to estimate the probability that a sample came from the data distribution rather than from G. G is trained to maximize the probability of D making a mistake:

$$\min_G \max_D \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_{\text{noise}}(z)}[\log(1 - D(G(z)))]$$

Condition generation on additional information y (e.g. a class label or another image); D then has to determine whether samples are realistic given y.

[Mirza and Osindero (2014); Gauthier (2014)]
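When y is a class label, one simple conditioning scheme (the one used by Mirza and Osindero) feeds y as an extra input to both G and D. The sketch below shows only that input plumbing, assuming one-hot labels concatenated with the noise and with the flattened sample; the sizes (100-d noise, 28×28 inputs, 10 classes) are hypothetical.

```python
import numpy as np

def one_hot(labels, num_classes):
    return np.eye(num_classes)[labels]

def generator_input(z, labels, num_classes):
    """Concatenate noise z with the one-hot condition y, as the input to G(z, y)."""
    return np.concatenate([z, one_hot(labels, num_classes)], axis=1)

def discriminator_input(x, labels, num_classes):
    """Concatenate a flattened sample x with y so D judges realism given y."""
    return np.concatenate([x.reshape(len(x), -1), one_hot(labels, num_classes)], axis=1)

rng = np.random.default_rng(0)
z = rng.uniform(-1, 1, size=(4, 100))
labels = np.array([0, 3, 3, 9])
g_in = generator_input(z, labels, num_classes=10)
d_in = discriminator_input(rng.random((4, 28, 28)), labels, num_classes=10)
print(g_in.shape, d_in.shape)
```

Because the same y is shown to D alongside the sample, a G that ignores y produces samples D can reject as inconsistent with their label.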

Train a conditional GAN for each level of the Laplacian pyramid: G learns to generate high-frequency structure consistent with the low-frequency image.
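In a Laplacian pyramid, $I_{k+1} = d(I_k)$ is a downsampled copy of $I_k$ and each band $h_k = I_k - u(I_{k+1})$ stores the high-frequency detail that downsampling removed, so the image is recovered exactly by $I_k = u(I_{k+1}) + h_k$. In LAPGAN terms, $u(I_{k+1})$ is the low-frequency image that a level's G is conditioned on and $h_k$ is what it learns to generate. A minimal sketch for square grayscale images, where 2×2 averaging and nearest-neighbour upsampling are simplified stand-ins for the blur-and-decimate and interpolation filters of a standard pyramid:

```python
import numpy as np

def downsample(img):
    """Simplified d(.): average each 2x2 block."""
    h, w = img.shape
    return img.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def upsample(img):
    """Simplified u(.): nearest-neighbour 2x upsampling."""
    return np.repeat(np.repeat(img, 2, axis=0), 2, axis=1)

def build_pyramid(img, levels):
    """Return [h_0, ..., h_{K-1}, I_K]: high-pass bands plus the low-pass residual."""
    coeffs = []
    current = img
    for _ in range(levels):
        coarser = downsample(current)
        coeffs.append(current - upsample(coarser))  # h_k = I_k - u(I_{k+1})
        current = coarser
    coeffs.append(current)
    return coeffs

def reconstruct(coeffs):
    """Invert the pyramid coarse-to-fine: I_k = u(I_{k+1}) + h_k."""
    img = coeffs[-1]
    for band in reversed(coeffs[:-1]):
        img = upsample(img) + band
    return img

rng = np.random.default_rng(0)
image = rng.random((32, 32))
pyr = build_pyramid(image, levels=3)
print([c.shape for c in pyr])  # [(32, 32), (16, 16), (8, 8), (4, 4)]
```

Reconstruction is exact for any choice of d and u, because each band is defined as the residual of that same upsampling; the filters only affect how much detail lands in each band.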

Each level of the Laplacian pyramid is trained independently.

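Figure: sampling procedure. Noise z3 is passed through G3 to produce the coarsest image Ĩ3. At each finer level k = 2, 1, 0, the current image is upsampled to l_k, G_k maps (z_k, l_k) to a high-frequency image h̃_k, and Ĩ_k = l_k + h̃_k; Ĩ0 is the final sample.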
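Sampling runs coarse to fine: the coarsest image comes from noise alone, and each finer level upsamples the current image to $l_k$ and adds a generated high-frequency image $\tilde{h}_k = G_k(z_k, l_k)$. A minimal control-flow sketch; the stand-in generators are hypothetical placeholders for trained networks, and giving $z_k$ the same shape as $l_k$ is an assumption:

```python
import numpy as np

def upsample(img):
    """Nearest-neighbour 2x upsampling (a simplified stand-in for u(.))."""
    return np.repeat(np.repeat(img, 2, axis=0), 2, axis=1)

def lapgan_sample(generators, coarse_gen, noise_dim, rng):
    """Coarse-to-fine sampling.

    coarse_gen(z) maps noise to the coarsest image; generators[k](z_k, l_k)
    returns a high-frequency image with the same shape as l_k. `generators`
    is ordered from the finest level (k = 0) to the coarsest conditional level.
    """
    img = coarse_gen(rng.uniform(-1, 1, size=noise_dim))
    for gen in reversed(generators):
        low = upsample(img)                       # l_k: upsampled coarser image
        z = rng.uniform(-1, 1, size=low.shape)    # per-level noise z_k (assumed shape)
        img = low + gen(z, low)                   # I_k = l_k + h_k
    return img

# Hypothetical stand-ins for trained networks, used only to exercise the control flow.
def coarse_gen(z):
    return z.reshape(4, 4)

def residual_gen(z, low):
    return 0.1 * z  # a real G_k would also depend on the conditioning image `low`

rng = np.random.default_rng(0)
sample = lapgan_sample([residual_gen] * 3, coarse_gen, noise_dim=16, rng=rng)
print(sample.shape)  # (32, 32)
```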

Small dataset: 32×32 images of objects, 50k images, 10 classes.

Humans were randomly presented with a real or generated image and asked to determine whether it was real or fake. Humans think LAPGAN generations are real ∼40% of the time.

Figure: % of images classified as real vs. presentation time (50 to 2000 ms), for Real, CC-LAPGAN, LAPGAN, and GAN images.

Large dataset of scenes, ∼10M images, 10 classes.

Radford, Metz and Chintala (2015) propose several tricks to make GAN training more stable:

http://arxiv.org/pdf/1511.06434v1.pdf

Future work: apply the same tricks to training the LAPGAN model, to potentially improve samples and produce higher-resolution images.

- Proposed a simple generative model that can produce decent-quality samples of natural images.
- Potential to be used as a decoder in an autoencoder framework for unsupervised learning.
- The GAN framework is difficult to train: there is no clear objective function to track.
- Code & demo: http://soumith.ch/eyescream
