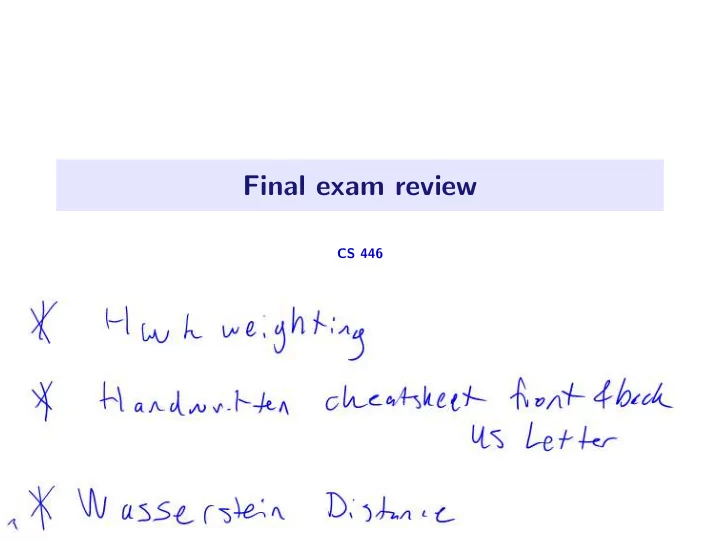

Final exam review

CS 446

Selected lecture slides

Hoeffding's inequality

Theorem (Hoeffding's inequality). Given IID $Z_i \in [a, b]$,

$$\Pr\left[ \frac{1}{n} \sum_{i=1}^{n} Z_i - \mathbb{E} Z_1 \ge \epsilon \right] \le \exp\left( -\frac{2 n \epsilon^2}{(b - a)^2} \right).$$
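As a rough sanity check of the one-sided bound $\exp(-2n\epsilon^2/(b-a)^2)$, the sketch below compares it with a simulated tail frequency. The choice of Uniform[0, 1] samples, the values of `n`, `eps`, and `trials`, and the function names are all illustrative assumptions, not part of the slides.

```python
import math
import random

def hoeffding_bound(n, eps, a=0.0, b=1.0):
    """Upper bound on Pr[mean(Z) - E[Z] >= eps] for IID Z_i in [a, b]."""
    return math.exp(-2.0 * n * eps ** 2 / (b - a) ** 2)

def empirical_tail(n, eps, trials=20000, seed=0):
    """Estimate Pr[mean(Z) - 1/2 >= eps] for Z_i ~ Uniform[0, 1] by simulation."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        mean = sum(rng.random() for _ in range(n)) / n
        if mean - 0.5 >= eps:
            hits += 1
    return hits / trials

n, eps = 50, 0.1                      # hypothetical sample size and deviation
bound = hoeffding_bound(n, eps)
freq = empirical_tail(n, eps)
print(f"empirical tail = {freq:.4f}, Hoeffding bound = {bound:.4f}")
```

The bound holds for every distribution supported on $[a, b]$, so for a specific distribution such as the uniform one used here it can be quite loose.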


$\ldots(\{\ldots^{\top} w : \|w\| \le W\}) \le RW$

$\ldots_{i=1}$, and the goal is ...?

$\ldots^{\top} u = s v$.

$\sum_{i=1} s_i u_i v_i^{\top}$.
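The two surviving SVD relations, a singular triple satisfying $X^\top u = s v$ and the matrix written as a sum of rank-one terms $s_i u_i v_i^\top$, can be checked numerically. This is a minimal sketch: the matrix name `X`, its shape, and the random data are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.standard_normal((6, 4))       # hypothetical data matrix, n = 6, d = 4

# Thin SVD: X = U @ diag(s) @ Vt, singular values in decreasing order.
U, s, Vt = np.linalg.svd(X, full_matrices=False)

# Each singular triple (s, u, v) satisfies X v = s u and X^T u = s v.
u1, s1, v1 = U[:, 0], s[0], Vt[0]
left_ok = np.allclose(X @ v1, s1 * u1)
right_ok = np.allclose(X.T @ u1, s1 * v1)

# X equals the sum of the rank-one terms s_i u_i v_i^T.
X_sum = sum(s[i] * np.outer(U[:, i], Vt[i]) for i in range(len(s)))
sum_ok = np.allclose(X, X_sum)
print(left_ok, right_ok, sum_ok)
```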

$\ldots^{\top}$ and integer $k \le r$ be given.

$$\min_{\substack{D \in \mathbb{R}^{k \times d} \\ E \in \mathbb{R}^{d \times k}}} \|X - X E D\|_F^2 = \min_{\substack{D \in \mathbb{R}^{d \times k} \\ D^\top D = I}} \|X - X D D^\top\|_F^2 = \|X - X V_k V_k^\top\|_F^2 = \sum_{i=k+1}^{r} s_i^2 .$$

$$\min_{\substack{D \in \mathbb{R}^{d \times k} \\ D^\top D = I}} \|X - X D D^\top\|_F^2 = \|X\|_F^2 - \max_{\substack{D \in \mathbb{R}^{d \times k} \\ D^\top D = I}} \|X D\|_F^2 = \|X\|_F^2 - \|X V_k\|_F^2 = \|X\|_F^2 - \sum_{i=1}^{k} s_i^2 .$$

$\ldots \sum_{i=1} s_i^2$ unique.
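The identity $\|X - X V_k V_k^\top\|_F^2 = \|X\|_F^2 - \|X V_k\|_F^2$, with both sides determined by the squared singular values $s_i^2$, can be verified numerically. The sketch below assumes $V_k$ holds the top $k$ right singular vectors of $X$; the random matrix, its shape, and the comparison against a random orthonormal `D` are illustrative choices.

```python
import numpy as np

def fro2(A):
    """Squared Frobenius norm."""
    return float(np.sum(A * A))

rng = np.random.default_rng(1)
X = rng.standard_normal((8, 5))       # hypothetical data matrix
k = 2

U, s, Vt = np.linalg.svd(X, full_matrices=False)
Vk = Vt[:k].T                         # d x k, top-k right singular vectors

residual = fro2(X - X @ Vk @ Vk.T)    # reconstruction error of the projection
captured = fro2(X @ Vk)               # energy kept by the projection

# Any other D with orthonormal columns should reconstruct no better than Vk.
D, _ = np.linalg.qr(rng.standard_normal((5, k)))
residual_random = fro2(X - X @ D @ D.T)

print(residual, fro2(X) - captured, float(np.sum(s[k:] ** 2)))
print(residual_random >= residual)
```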

$\frac{1}{n} X^\top X \in \mathbb{R}^{d \times d}$ is data covariance;

$\frac{1}{n} (XD)^\top (XD)$ is data covariance after projection;

$\frac{1}{n} \|XD\|_F^2 = \frac{1}{n} \operatorname{tr}\big( (XD)^\top (XD) \big) = \frac{1}{n} \sum_{i=1}^{k} (X D e_i)^\top (X D e_i)$,

$\min_{A \in \mathcal{A}} \phi(C_t; A) \le \phi(C_t; A_{t-1})$,

$\min_{C \in \mathcal{C}} \phi(C; A_t) \le \phi(C_t; A_t)$,

$\ldots_{i=1}$.
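The two inequalities say that alternately minimizing a cost $\phi(C; A)$ over $A$ and over $C$ never increases it. The sketch below illustrates this under the assumption that $\phi$ is the k-means cost, with $C$ the centers and $A$ the assignments; the function names, the synthetic data, and the initialization are hypothetical.

```python
import numpy as np

def cost(X, C, A):
    """k-means cost phi(C; A): total squared distance of points to assigned centers."""
    return float(np.sum((X - C[A]) ** 2))

def alternate(X, k, iters=10, seed=0):
    rng = np.random.default_rng(seed)
    C = X[rng.choice(len(X), size=k, replace=False)].copy()
    history = []
    for _ in range(iters):
        # Minimize over assignments A with centers C fixed.
        d2 = ((X[:, None, :] - C[None, :, :]) ** 2).sum(axis=2)
        A = d2.argmin(axis=1)
        history.append(cost(X, C, A))
        # Minimize over centers C with assignments A fixed (cluster means).
        for j in range(k):
            if np.any(A == j):        # leave the center of an empty cluster unchanged
                C[j] = X[A == j].mean(axis=0)
        history.append(cost(X, C, A))
    return C, A, history

rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (50, 2)), rng.normal(5.0, 1.0, (50, 2))])
C, A, history = alternate(X, k=2)
print(history[0], history[-1])
```

Each entry of `history` is the cost after one half-step, so the list should be non-increasing from start to finish.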

$\ldots_{i=1}$ be given.

$\ldots_{i=1}$ with $(\mu(x_i))_{i=1}^{n}$.


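$\ldots_{i=1}$, pick the model that maximizes the likelihood:

$$\max_{\theta \in \Theta} L(\theta) = \max_{\theta \in \Theta} \ln \prod_{i=1}^{n} p_\theta(x_i) = \max_{\theta \in \Theta} \sum_{i=1}^{n} \ln p_\theta(x_i).$$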

$H := \sum_i x_i$ and $T := \sum_i (1 - x_i) = n - H$ for convenience,

$$\frac{H}{T + H} = \frac{H}{n}.$$
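For coin flips $x_i \in \{0, 1\}$ with head count $H$ and tail count $T = n - H$, the Bernoulli log-likelihood is $H \ln\theta + T \ln(1-\theta)$, and the estimate $H/(T+H)$ is its maximizer. A minimal sketch, using made-up flips and a grid search purely as a cross-check:

```python
import math

def log_likelihood(theta, H, T):
    """Bernoulli log-likelihood of H heads and T tails at head probability theta."""
    return H * math.log(theta) + T * math.log(1.0 - theta)

flips = [1, 0, 1, 1, 0, 1, 0, 1, 1, 1]    # hypothetical data, 1 = heads
n = len(flips)
H = sum(flips)
T = n - H
theta_hat = H / (T + H)                   # closed-form maximizer, equal to H / n

# Cross-check against a grid search over theta in (0, 1).
grid = [i / 1000 for i in range(1, 1000)]
best = max(grid, key=lambda th: log_likelihood(th, H, T))
print(theta_hat, best)
```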


$\ldots_{y \in \mathcal{Y}} \ldots \prod_{j=1}^{d} p(X_j = x_j \mid Y = y)$

$\ldots_{j=1})$ at

$\ldots^{\top} \Sigma^{-1} (x_i - \mu_j)$


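$\sum_{i=1}^{n} \ln \sum_{j=1}^{k} \pi_j p_{\mu_j, \Sigma_j}(x_i)$ with

$$\pi_j := \frac{\sum_{i=1}^{n} R_{ij}}{\sum_{i=1}^{n} \sum_{l=1}^{k} R_{il}}, \qquad \mu_j := \frac{\sum_{i=1}^{n} R_{ij} x_i}{\sum_{i=1}^{n} R_{ij}}, \qquad \Sigma_j := \frac{\sum_{i=1}^{n} R_{ij} (x_i - \mu_j)(x_i - \mu_j)^{\top}}{\sum_{i=1}^{n} R_{ij}}.$$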


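$\ldots_{j=1}$ given

$$R_{ij} := \frac{\pi_j p_{\mu_j, \Sigma_j}(x_i)}{\sum_{l=1}^{k} \pi_l p_{\mu_l, \Sigma_l}(x_i)},$$

$$\pi_j := \frac{\sum_{i=1}^{n} R_{ij}}{\sum_{i=1}^{n} \sum_{l=1}^{k} R_{il}}, \qquad \mu_j := \frac{\sum_{i=1}^{n} R_{ij} x_i}{\sum_{i=1}^{n} R_{ij}}, \qquad \Sigma_j := \frac{\sum_{i=1}^{n} R_{ij} (x_i - \mu_j)(x_i - \mu_j)^{\top}}{\sum_{i=1}^{n} R_{ij}}.$$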
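The updates for $R_{ij}$, $\pi_j$, $\mu_j$ and $\Sigma_j$ can be sketched as an alternating loop for a Gaussian mixture. This is an illustrative sketch only: the synthetic data, the initialization (random data points as means, identity covariances), the iteration count, and all function names are assumptions, and no safeguard against degenerate covariances is included.

```python
import numpy as np

def weighted_densities(X, pi, mu, Sigma):
    """n x k matrix with entries pi_j * N(x_i; mu_j, Sigma_j)."""
    n, d = X.shape
    cols = []
    for j in range(len(pi)):
        diff = X - mu[j]
        solved = np.linalg.solve(Sigma[j], diff.T).T      # rows: Sigma_j^{-1} (x_i - mu_j)
        quad = np.sum(diff * solved, axis=1)              # (x_i - mu_j)^T Sigma_j^{-1} (x_i - mu_j)
        norm = np.sqrt((2.0 * np.pi) ** d * np.linalg.det(Sigma[j]))
        cols.append(pi[j] * np.exp(-0.5 * quad) / norm)
    return np.stack(cols, axis=1)

def e_step(X, pi, mu, Sigma):
    """Responsibilities R_ij: weighted densities normalized over components."""
    dens = weighted_densities(X, pi, mu, Sigma)
    return dens / dens.sum(axis=1, keepdims=True)

def m_step(X, R):
    """Update (pi, mu, Sigma) with R held fixed."""
    mass = R.sum(axis=0)                                  # sum_i R_ij for each j
    pi = mass / R.sum()
    mu = (R.T @ X) / mass[:, None]
    Sigma = np.stack([
        ((R[:, j, None] * (X - mu[j])).T @ (X - mu[j])) / mass[j]
        for j in range(R.shape[1])
    ])
    return pi, mu, Sigma

def log_likelihood(X, pi, mu, Sigma):
    return float(np.log(weighted_densities(X, pi, mu, Sigma).sum(axis=1)).sum())

rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (100, 2)), rng.normal(5.0, 1.0, (100, 2))])
k = 2
pi = np.full(k, 1.0 / k)
mu = X[rng.choice(len(X), size=k, replace=False)].copy()
Sigma = np.stack([np.eye(2) for _ in range(k)])

lls = [log_likelihood(X, pi, mu, Sigma)]
for _ in range(20):
    R = e_step(X, pi, mu, Sigma)
    pi, mu, Sigma = m_step(X, R)
    lls.append(log_likelihood(X, pi, mu, Sigma))
print(lls[0], lls[-1])
```

The recorded log-likelihoods should be non-decreasing, which is the usual way to sanity-check an implementation of these updates.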


$\ldots_{\theta \in \Theta} \ldots$

$\max_{R \in \mathcal{R}_{n \times k}} L(\theta_t; R) = L(\theta_t; R_{t+1}) = L(\theta_t)$

$\ldots_1, \ldots, (\sigma_j)^2_d)$ where

$(\sigma_j)^2_l := \ldots \sum_{i=1} R_{ij} (x_i - \mu_j)^2_l \ldots$

$\ldots\ j_n \in \{1, \ldots, k\}$

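$\ldots^{\top}$ and $k \le r$,

$$\min_{\substack{E \in \mathbb{R}^{d \times k} \\ D \in \mathbb{R}^{k \times d}}} \|X - X E D\|_F^2 = \|X - X V_k V_k^\top\|_F^2 .$$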

$\ldots_{f \in \mathcal{E},\; g \in \mathcal{D}} \ldots$


[Diagram: $f$ (map), $g$ (map); objective $\frac{1}{n} \sum_{i=1} \ell(x_i, \hat{\ldots}$]

[Diagram: $f$ (map), $g$ (pushforward); objective $\frac{1}{n} \sum_{i=1} \ldots$]

$\ldots_{i=1} \sim \nu$.

$\ldots_{i=1} \approx (x_i)_{i=1}^{n}$, where $(z_i)_{i=1}^{n} \sim \mu$.

$\ldots_{i=1}$ and $(x_i)_{i=1}^{n}$) and pick $g$ to minimize that!


$\ldots / 2 + p_g / 2$.

$\min_{g \in \mathcal{G}} \; \max_{f \in \mathcal{F},\; f : \mathcal{X} \to (0,1)} \ldots$

$\ldots_{j=1} = (g(z_j))_{j=1}^{m}$, and approximately optimize

$\max_{f \in \mathcal{F},\; f : \mathcal{X} \to (0,1)} \ldots$

$\ldots_{j=1}$ and

$\min_{g \in \mathcal{G}} \ldots$

$\min_{g \in \mathcal{G}} \; \max_{f \in \mathcal{F},\; \|f\|_{\mathrm{Lip}} \le 1} \ldots$

[Figure: plot panels, one per number of classifiers $n$, each titled with the fraction shown in green]

#classifiers = n = 10, fraction green = 0.366897

#classifiers = n = 20, fraction green = 0.244663

#classifiers = n = 30, fraction green = 0.175369

#classifiers = n = 40, fraction green = 0.129766

#classifiers = n = 50, fraction green = 0.0978074

#classifiers = n = 60, fraction green = 0.0746237

$\sum_i y_i \ge 0$,

$\sum_i y_i < 0$.
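The shrinking "fraction green" values are consistent with a binomial tail: the probability that at least half of $n$ independent classifiers are wrong. The sketch below assumes each classifier errs independently with probability 0.4; that value is not stated in the surviving text and is an assumption chosen because it appears to reproduce the reported fractions. The function names are hypothetical.

```python
import math

def majority_error(n, p):
    """Pr[at least n/2 of n independent classifiers err], each erring with probability p."""
    threshold = math.ceil(n / 2)
    return sum(
        math.comb(n, j) * p ** j * (1 - p) ** (n - j)
        for j in range(threshold, n + 1)
    )

def hoeffding(n, p):
    """Hoeffding upper bound exp(-2 n (1/2 - p)^2) on the same tail, valid for p < 1/2."""
    return math.exp(-2.0 * n * (0.5 - p) ** 2)

p = 0.4                                   # assumed per-classifier error rate
for n in (10, 20, 30, 40, 50, 60):
    print(n, round(majority_error(n, p), 6), round(hoeffding(n, p), 6))
```

The exact tail decays with $n$ and always sits below the Hoeffding bound, which is much looser at these sample sizes.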

$\ldots_i, y_i^{(t)}))_{i=1}^{n}$,

$n \to \infty$

$\ldots_{i=1}$ and classifiers $(h_1, \ldots, h_T)$.

$\ldots^{\top} z_i$

$\sum_{j=1} w_j h_j(x)$.

[Figure: decision tree built up over a scatter plot; axes: sepal length/width and petal length/width. Initial prediction $\hat{y} = 2$; split $x_1 > 1.7$ with leaves $\hat{y} = 1$ and $\hat{y} = 3$.]

$\sum_{i=1}^{n} \ell\Big( \ldots \sum_{j=1} w_j h_j(x_i) \Big)$

[Figure: sequence of contour plots]