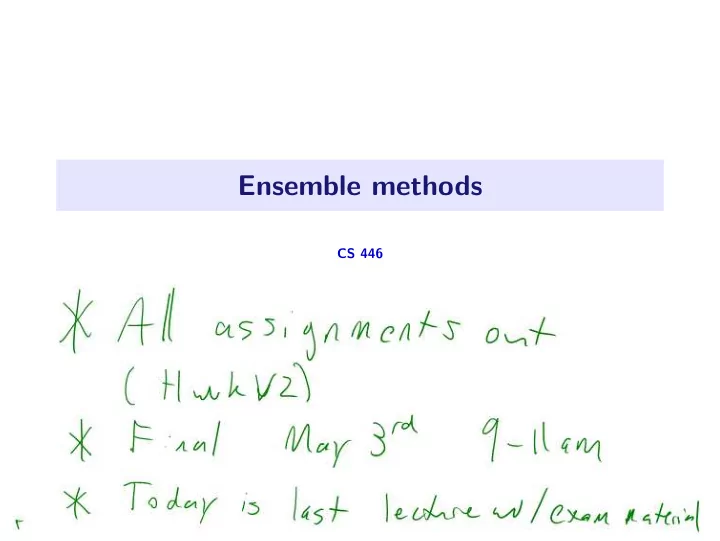

Ensemble methods

CS 446

Why ensembles?

Standard machine learning setup: We have some data. We train 10 predictors (3-nn, least squares, SVM, ResNet, ...). We output the best on a validation set.

… $(Z_i)_{i=1}^n$ (thus $\mathbb{E}(Z_i) = 0.4$).

[Figure: histograms on a horizontal axis from 0.0 to 1.0, one frame per number of classifiers n; the frame titles are listed below.]

#classifiers = n = 2, fraction red = 0.16

#classifiers = n = 3, fraction red = 0.064

#classifiers = n = 4, fraction red = 0.0256

#classifiers = n = 5, fraction red = 0.01024

#classifiers = n = 6, fraction red = 0.004096

#classifiers = n = 7, fraction red = 0.0016384

#classifiers = n = 10, fraction green = 0.366897

#classifiers = n = 20, fraction green = 0.244663

#classifiers = n = 30, fraction green = 0.175369

#classifiers = n = 40, fraction green = 0.129766

#classifiers = n = 50, fraction green = 0.0978074

#classifiers = n = 60, fraction green = 0.0746237
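The frame titles above can be checked with a short calculation. The sketch below assumes (this reading is not stated in the surviving text) that each $Z_i$ is an independent indicator of classifier $i$ being wrong with probability 0.4, that "fraction red" is the probability that all $n$ classifiers are wrong, and that "fraction green" is the probability that at least half are wrong. The function names are hypothetical.

```python
from math import comb


def all_wrong(n: int, p: float = 0.4) -> float:
    """Probability that all n independent classifiers err at once (assumed 'fraction red')."""
    return p ** n


def at_least_half_wrong(n: int, p: float = 0.4) -> float:
    """P[Binomial(n, p) >= n/2] (assumed 'fraction green')."""
    k_min = (n + 1) // 2  # smallest integer k with k >= n/2
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


# Print the two quantities for the values of n that appear in the frame titles.
for n in (2, 3, 4, 5, 6, 7):
    print(n, all_wrong(n))
for n in (10, 20, 30, 40, 50, 60):
    print(n, at_least_half_wrong(n))
```

Under these assumptions the first quantity is simply $0.4^n$, and the second is a binomial tail that shrinks as $n$ grows.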

[Figure: histograms for n = 10 through 60 classifiers with the fraction green highlighted.]

$$
\ldots :=
\begin{cases}
+1 & \text{if } \sum_i y_i \ge 0, \\
-1 & \text{if } \sum_i y_i < 0.
\end{cases}
$$
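The two surviving cases ($\sum_i y_i \ge 0$ and $\sum_i y_i < 0$) describe a vote over $\pm 1$ predictions. A minimal simulation sketch follows, assuming labels in $\{-1, +1\}$, independent classifiers that are each wrong with probability 0.4, and a true label fixed to $-1$ so that a tie counts as an error. The names `majority_vote` and `simulate_error` are hypothetical.

```python
import random


def majority_vote(votes):
    """Return +1 if the votes sum to >= 0, else -1 (so ties go to +1)."""
    return 1 if sum(votes) >= 0 else -1


def simulate_error(n, p_wrong=0.4, trials=20000, seed=0):
    """Estimate how often the majority vote of n independent classifiers is wrong."""
    rng = random.Random(seed)
    true_label = -1  # assumption: with this choice a tied vote is counted as an error
    errors = 0
    for _ in range(trials):
        # Each classifier outputs the wrong label (+1) with probability p_wrong.
        votes = [-true_label if rng.random() < p_wrong else true_label for _ in range(n)]
        if majority_vote(votes) != true_label:
            errors += 1
    return errors / trials


for n in (10, 20, 60):
    print(n, simulate_error(n))
```

With ties counted as errors, the estimate for n = 10 should land near the 0.366897 reported in the frame title, up to Monte Carlo noise.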

… $\big((x_i^{(t)}, y_i^{(t)})\big)_{i=1}^n$, …

… $n \to \infty$ …

… $((x_i, y_i))_{i=1}^n$ and classifiers $(h_1, \ldots, h_T)$.

… $\sum_{j=1}^{T} w_j h_j(x)$.
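The weighted combination $\sum_j w_j h_j(x)$ of classifiers $(h_1, \ldots, h_T)$ can be sketched as follows. The three threshold classifiers, the weights, and the choice to predict with the sign of the score are illustrative assumptions, not taken from the slides.

```python
from typing import Callable, Sequence

Classifier = Callable[[Sequence[float]], int]


def weighted_score(weights: Sequence[float], classifiers: Sequence[Classifier], x) -> float:
    """Compute sum_j w_j * h_j(x)."""
    return sum(w * h(x) for w, h in zip(weights, classifiers))


def predict(weights, classifiers, x) -> int:
    """Predict +1 or -1 from the sign of the weighted score (assumed convention)."""
    return 1 if weighted_score(weights, classifiers, x) >= 0 else -1


# Hypothetical base classifiers with outputs in {-1, +1}.
classifiers = [
    lambda x: 1 if x[0] > 0.0 else -1,
    lambda x: 1 if x[1] > 0.5 else -1,
    lambda x: 1 if x[0] + x[1] > 1.0 else -1,
]
weights = [0.5, 0.3, 0.2]  # hypothetical weights w_1, w_2, w_3

x = (1.0, 0.2)
print(weighted_score(weights, classifiers, x), predict(weights, classifiers, x))
```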

[Figure: scatter plot with axes sepal length/width (ticks 1.5 to 3) and petal length/width (ticks 2 to 6). Successive frames show a constant prediction $\hat{y} = 2$, then a split $x_1 > 1.7$ with leaf predictions $\hat{y} = 1$ and $\hat{y} = 3$.]
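A split such as $x_1 > 1.7$ with one prediction per side is a decision stump. The sketch below fits one on toy data by trying midpoints between sorted feature values and keeping the split with the fewest training errors. The toy data, the fitting rule, and the assignment of labels to sides are assumptions for illustration; the figure does not say which leaf lies on which side. Features are indexed from 0 in the code.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Stump:
    feature: int
    threshold: float
    label_above: int  # prediction when x[feature] > threshold
    label_below: int  # prediction otherwise

    def predict(self, x: Sequence[float]) -> int:
        return self.label_above if x[self.feature] > self.threshold else self.label_below


def majority_label(labels):
    """Most common label in a non-empty list."""
    return Counter(labels).most_common(1)[0][0]


def fit_stump(X, y, feature):
    """Pick the midpoint threshold with the fewest training errors (None if no split exists)."""
    values = sorted({x[feature] for x in X})
    best, best_errors = None, len(y) + 1
    for lo, hi in zip(values, values[1:]):
        thr = (lo + hi) / 2
        above = [yi for x, yi in zip(X, y) if x[feature] > thr]
        below = [yi for x, yi in zip(X, y) if x[feature] <= thr]
        stump = Stump(feature, thr, majority_label(above), majority_label(below))
        errors = sum(stump.predict(x) != yi for x, yi in zip(X, y))
        if errors < best_errors:
            best, best_errors = stump, errors
    return best


# Hypothetical one-feature data with two classes labeled 1 and 3.
X = [(1.2,), (1.5,), (1.9,), (2.4,)]
y = [1, 1, 3, 3]
stump = fit_stump(X, y, feature=0)
print(stump)
```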

… $\sum_{i=1}^{n} \ell\Big(\ldots \sum_{j=1}^{T} w_j h_j(x_i)\Big)$ …

[Figure: contour plots on axes running from -1.00 to 1.00, shown over five frames; the contour labels are not recoverable.]
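The objective fragment sums a loss $\ell$ over the training examples, evaluated on the weighted combination $\sum_j w_j h_j(x_i)$. A minimal sketch of evaluating such an objective follows, assuming the loss is applied to the margin $y_i \sum_j w_j h_j(x_i)$ and using the exponential and logistic losses as examples. The margin form, the loss choices, and the toy data are assumptions, since the original expression is only partly legible.

```python
import math


def exp_loss(margin: float) -> float:
    """Exponential loss on a margin (assumed example of the loss)."""
    return math.exp(-margin)


def logistic_loss(margin: float) -> float:
    """Logistic loss on a margin (assumed example of the loss)."""
    return math.log1p(math.exp(-margin))


def empirical_risk(weights, classifiers, X, y, loss=exp_loss) -> float:
    """Sum over i of loss(y_i * sum_j w_j h_j(x_i)); divide by len(X) for an average."""
    total = 0.0
    for x, yi in zip(X, y):
        score = sum(w * h(x) for w, h in zip(weights, classifiers))
        total += loss(yi * score)
    return total


# Hypothetical one-dimensional data and two threshold classifiers.
X = [-2.0, -1.0, 1.0, 2.0]
y = [-1, -1, 1, 1]
classifiers = [
    lambda x: 1 if x > 0 else -1,    # agrees with every label above
    lambda x: 1 if x > 1.5 else -1,  # wrong on x = 1.0
]

print(empirical_risk([0.0, 0.0], classifiers, X, y))
print(empirical_risk([1.0, 0.0], classifiers, X, y))
print(empirical_risk([1.0, 0.0], classifiers, X, y, loss=logistic_loss))
```

Putting weight on the classifier that agrees with the labels lowers the objective, which is the behavior the contour plots over $(w_1, w_2)$ would be expected to show.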

… $h_j(x_i)\, y_i$ …

… $O\!\left(\frac{1}{\gamma^{2}} \ln\frac{1}{\epsilon}\right)$ iterations for accuracy $\epsilon > 0$.
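To get a feel for this rate, the sketch below evaluates $\frac{1}{\gamma^2}\ln\frac{1}{\epsilon}$ for a few values. It assumes the garbled expression reads this way, and it ignores the constant hidden by the big-O, so the numbers indicate scaling only, not actual iteration counts. The function name is hypothetical.

```python
import math


def iteration_scale(gamma: float, eps: float) -> float:
    """Evaluate (1 / gamma^2) * ln(1 / eps), the assumed rate up to constants."""
    if gamma <= 0 or not (0 < eps < 1):
        raise ValueError("need gamma > 0 and 0 < eps < 1")
    return math.log(1.0 / eps) / gamma**2


for gamma in (0.2, 0.1, 0.05):
    for eps in (0.1, 0.01):
        print(gamma, eps, round(iteration_scale(gamma, eps), 1))
```

Halving $\gamma$ multiplies the value by four, while tightening $\epsilon$ from 0.1 to 0.01 only doubles it.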

[Figure: points labeled + + – –.]

[Figure: plot with horizontal axis ticks 10, 100, 1000 and vertical axis ticks 5, 10, 15, 20.]

… $\dfrac{\sum_{t=1} \alpha_t f_t(x)}{\sum_{t=1} |\alpha_t|}$ …
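The two surviving expressions, $\sum_t \alpha_t f_t(x)$ and $\sum_t |\alpha_t|$, suggest a score normalized by the total absolute weight. A minimal sketch follows, assuming the first is divided by the second. The function name, the base functions, and the coefficients are hypothetical.

```python
def normalized_score(alphas, fs, x):
    """Compute sum_t alpha_t f_t(x) divided by sum_t |alpha_t| (assumed normalization)."""
    denom = sum(abs(a) for a in alphas)
    if denom == 0:
        raise ValueError("at least one alpha_t must be nonzero")
    return sum(a * f(x) for a, f in zip(alphas, fs)) / denom


# Hypothetical base functions with outputs in {-1, +1}.
fs = [
    lambda x: 1 if x > 0 else -1,
    lambda x: 1 if x > 1 else -1,
    lambda x: 1 if x > -1 else -1,
]
alphas = [2.0, -1.0, 1.0]

print(normalized_score(alphas, fs, 0.5))
print(normalized_score(alphas, fs, -0.5))
```

When every $f_t$ outputs values in $[-1, 1]$, the normalized score also lies in $[-1, 1]$ and does not change if all $\alpha_t$ are rescaled by the same positive factor.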

… $((x_i, y_i))_{i=1}^n$ and $f$, plot unnormalized margin distribution

… $\max_{y \neq y_i} f(x_i)_y$.

[Figure: margin distribution curves with vertical axis from 0.0 to 1.0, next to contour plots on axes from -1.00 to 1.00, shown over successive frames.]
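A margin distribution of this kind can be computed and tabulated as an empirical CDF. The sketch below assumes the unnormalized margin of example $i$ is $f(x_i)_{y_i} - \max_{y \neq y_i} f(x_i)_y$, which is one reading of the garbled formula, and it uses made-up score vectors. The function names are hypothetical.

```python
def unnormalized_margin(scores, label):
    """Score of the true label minus the best score among the other labels (assumed definition)."""
    best_other = max(s for k, s in enumerate(scores) if k != label)
    return scores[label] - best_other


def empirical_cdf(values):
    """Return (value, fraction of values <= value) pairs in increasing order."""
    ordered = sorted(values)
    n = len(ordered)
    return [(v, (i + 1) / n) for i, v in enumerate(ordered)]


# Hypothetical per-class scores f(x_i) for three examples, with their true labels.
scores_per_example = [
    [2.0, 0.5, -1.0],
    [0.1, 0.3, 0.2],
    [-1.0, 0.0, 3.0],
]
labels = [0, 0, 2]

margins = [unnormalized_margin(s, y) for s, y in zip(scores_per_example, labels)]
cdf = empirical_cdf(margins)
for value, fraction in cdf:
    print(value, fraction)
```

A negative margin marks a misclassified example, so the height of the curve at zero gives the training error under this definition.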
