*

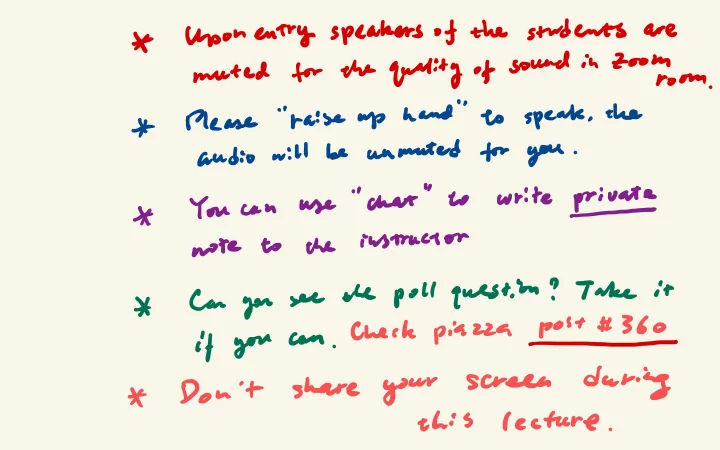

Upon entry speakers of the students

are

muted

for the quality of sound in Zoom

room .

*

Please

' ' raise uphand

' 'to

speak , the

audio will

be

anmuted

for you

.*

You can

use

'' chat"

to

write private

note

to

the instructor

*

Can you

see

the poll question ? T

ake it

check piazza post # 360

if

you

can

.- *

Don 't

share your

screen

during

this

lecture

.