2/23/09 1

Distributed Algorithms PART III SHARED MEMORY

1 Bertinoro, March 2009

Distributed Algorithms

Part III

Shared memory

- Shared memory distributed system

– Set of processes – Set of shared objects (variables)

- access to a shared variable is a single (indivisible) event

- CommunicaCon through shared variables

– No channels

- We use a single automaton to model the

enCre system

– using several automata and the composiCon

- peraCon leads to some (technical) difficulCes

2

Bertinoro, March 2009

Distributed Algorithms

Part III

Shared memory

3

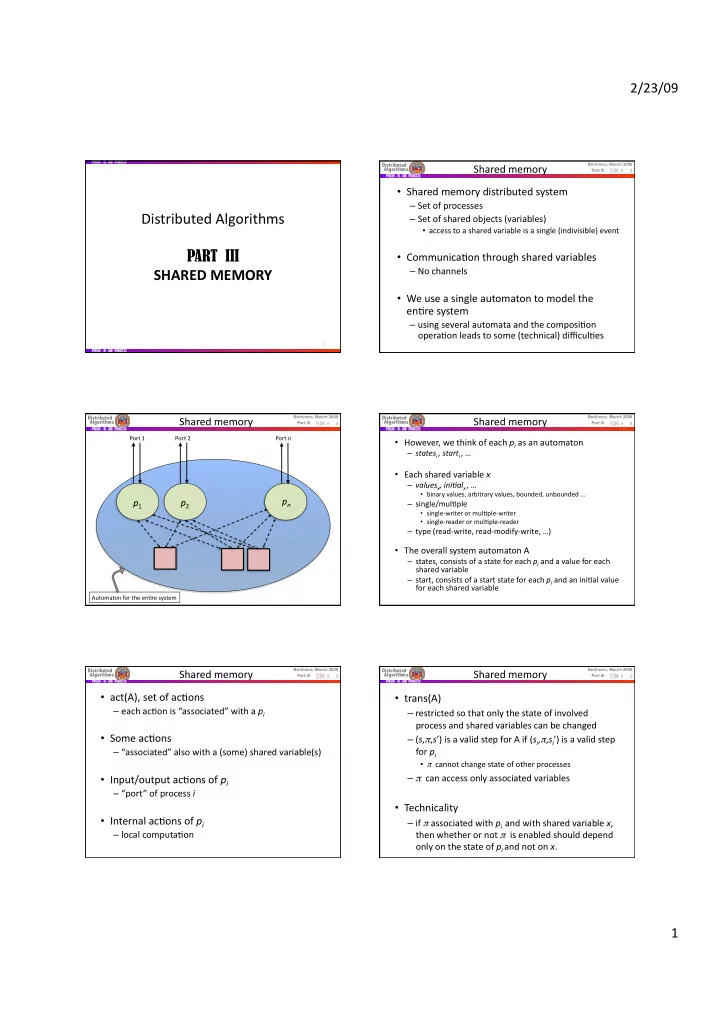

p1 p2 pn

Port 1 Port 2 Port n Automaton for the enCre system

Bertinoro, March 2009

Distributed Algorithms

Part III

Shared memory

- However, we think of each pi as an automaton

– statesi , starti , …

- Each shared variable x

– valuesx, ini0alx , …

- binary values, arbitrary values, bounded, unbounded …

– single/mulCple

- single‐writer or mulCple‐writer

- single‐reader or mulCple‐reader

– type (read‐write, read‐modify‐write, …)

- The overall system automaton A

– states, consists of a state for each pi and a value for each shared variable – start, consists of a start state for each pi and an iniCal value for each shared variable

4

Bertinoro, March 2009

Distributed Algorithms

Part III

Shared memory

- act(A), set of acCons

– each acCon is “associated” with a pi

- Some acCons

– “associated” also with a (some) shared variable(s)

- Input/output acCons of pi

– “port” of process i

- Internal acCons of pi

– local computaCon

5

Bertinoro, March 2009

Distributed Algorithms

Part III

Shared memory

- trans(A)

– restricted so that only the state of involved process and shared variables can be changed – (s,π,s’) is a valid step for A if (si,π,si’) is a valid step for pi

- π cannot change state of other processes

– π can access only associated variables

- Technicality

– if π associated with pi and with shared variable x, then whether or not π is enabled should depend

- nly on the state of pi and not on x.

6