3/4/09 1

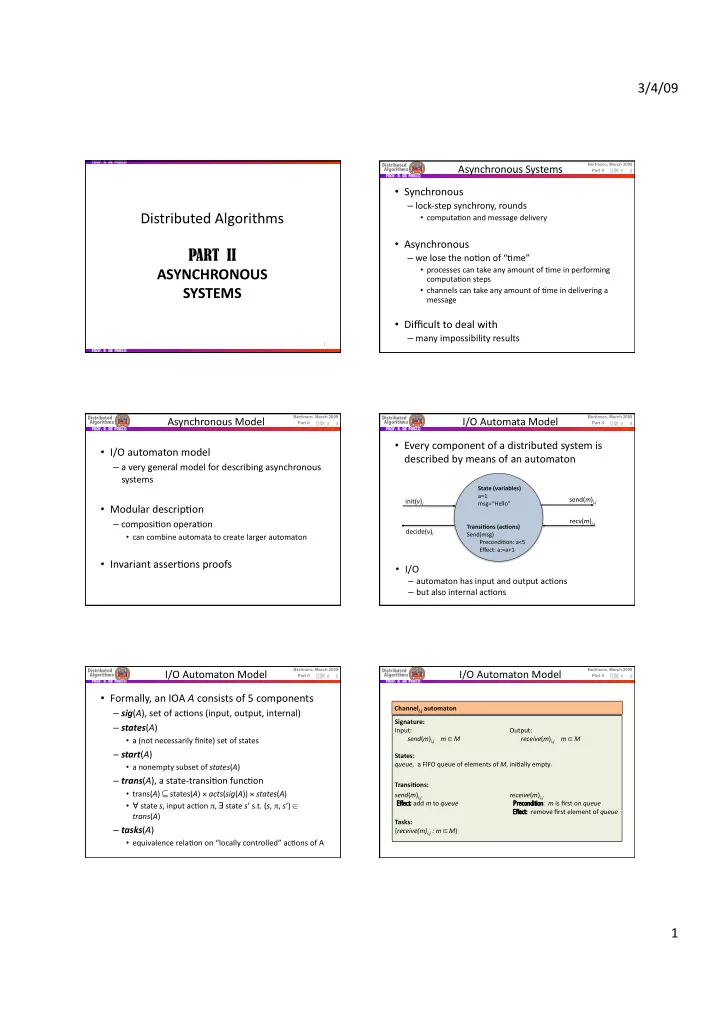

Distributed Algorithms PART II ASYNCHRONOUS SYSTEMS

1 Bertinoro, March 2009

Distributed Algorithms

Part II

Asynchronous Systems

- Synchronous

– lock‐step synchrony, rounds

- computa@on and message delivery

- Asynchronous

– we lose the no@on of “@me”

- processes can take any amount of @me in performing

computa@on steps

- channels can take any amount of @me in delivering a

message

- Difficult to deal with

– many impossibility results

2

Bertinoro, March 2009

Distributed Algorithms

Part II

Asynchronous Model

- I/O automaton model

– a very general model for describing asynchronous systems

- Modular descrip@on

– composi@on opera@on

- can combine automata to create larger automaton

- Invariant asser@ons proofs

3

Bertinoro, March 2009

Distributed Algorithms

Part II

I/O Automata Model

- Every component of a distributed system is

described by means of an automaton

4

- I/O

– automaton has input and output ac@ons – but also internal ac@ons

State (variables) a=1 msg=“Hello” Transi:ons (ac:ons) Send(msg) Precondi@on: a<5 Effect: a:=a+1

init(v)i decide(v)i send(m)i,j recv(m)j,i

Bertinoro, March 2009

Distributed Algorithms

Part II

I/O Automaton Model

- Formally, an IOA A consists of 5 components

– sig(A), set of ac@ons (input, output, internal) – states(A)

- a (not necessarily finite) set of states

– start(A)

- a nonempty subset of states(A)

– trans(A), a state‐transi@on func@on

- trans(A) ⊆ states(A) × acts(sig(A)) × states(A)

- ∀ state s, input ac@on π, ∃ state s’ s.t. (s, π, s’) ∈

trans(A)

– tasks(A)

- equivalence rela@on on “locally controlled” ac@ons of A

5

Bertinoro, March 2009

Distributed Algorithms

Part II

I/O Automaton Model

Signature: Input: Output: send(m)i,j m ∈ M receive(m)i,j m ∈ M States: queue, a FIFO queue of elements of M, ini@ally empty. Transi:ons: send(m)i,j

receive(m)i,j Ef

Effect: fect: add m to queue

Pr

Precondition econdition: m is first on queue Ef Effect: fect: remove first element of queue Tasks: {receive(m)i,j : m ∈ M}

6

Channeli,j automaton