Lex Fridman fridman@mit.edu GTC 2017 May 11 DeepTraffic: Driving Fast through Dense Traffic with Deep Reinforcement Learning

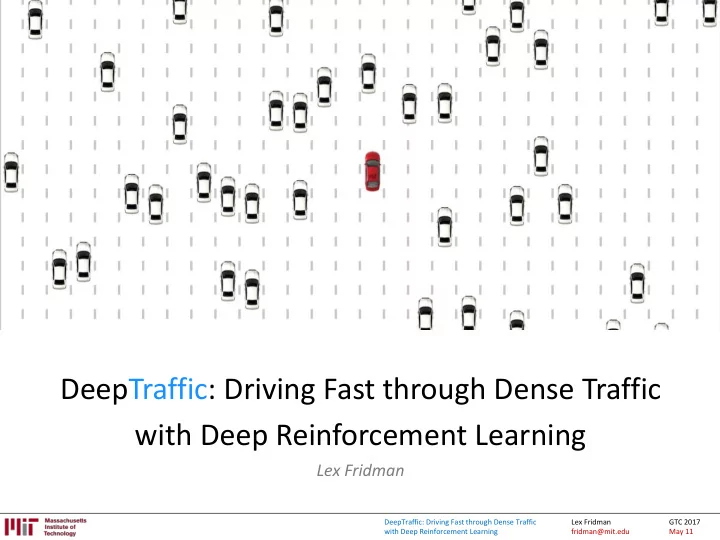

DeepTraffic: Driving Fast through Dense Traffic with Deep Reinforcement Learning

Lex Fridman


* estimated time to discover globally optimal solution


Memorization Understanding


* Teslas instrumented: 18
* Hours of data: 6,000+ hours
* Distance traveled: 140,000+ miles
* Video frames: 2+ billion
* Autopilot: ~12%


Paden B, Čáp M, Yong SZ, Yershov D, Frazzoli E. "A Survey of Motion Planning and Control Techniques for Self-driving Urban Vehicles." IEEE Transactions on Intelligent Vehicles 1.1 (2016): 33-55.


Reference: http://www.traffic-simulation.de


Takeaway from Supervised Learning: Neural networks are great at memorization and not (yet) great at reasoning.

Hope for Reinforcement Learning: Brute-force propagation of outcomes to knowledge about states and


References: [80]


state action reward Terminal state

References: [84]



START

Actions: UP, DOWN, LEFT, RIGHT

When the chosen action is UP:

* 80% move UP
* 10% move LEFT
* 10% move RIGHT
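
The stochastic move rule above can be sketched in a few lines of Python. This is an illustrative sketch, not the slide's code: only the probabilities for UP appear in the source, so the rows for DOWN, LEFT and RIGHT are filled in by symmetry as an assumption, and the names `SLIP` and `sample_move` are hypothetical.

```python
import random

# Intended action -> list of (actual move, probability).
# Only the UP row comes from the slide; the other rows are assumed by symmetry.
SLIP = {
    "UP":    [("UP", 0.8), ("LEFT", 0.1), ("RIGHT", 0.1)],
    "DOWN":  [("DOWN", 0.8), ("LEFT", 0.1), ("RIGHT", 0.1)],
    "LEFT":  [("LEFT", 0.8), ("UP", 0.1), ("DOWN", 0.1)],
    "RIGHT": [("RIGHT", 0.8), ("UP", 0.1), ("DOWN", 0.1)],
}


def sample_move(action, rng):
    """Sample the move that actually happens when `action` is chosen."""
    outcomes, weights = zip(*SLIP[action])
    return rng.choices(outcomes, weights=weights, k=1)[0]


# Empirical check: choose UP many times and count what actually happens.
rng = random.Random(0)
counts = {"UP": 0, "LEFT": 0, "RIGHT": 0}
for _ in range(10_000):
    counts[sample_move("UP", rng)] += 1
print(counts)  # counts should be close to an 80/10/10 split
```

Because the outcome of an action is random, an agent cannot plan a fixed path; it needs a policy that says what to do in every state it might end up in.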


$R_t = r_t + r_{t+1} + r_{t+2} + \cdots + r_n$
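
As a small worked example, the total future reward from a time step t to the end of an episode is just a sum over the remaining rewards. The reward values and the function name `future_reward` below are made up for illustration.

```python
def future_reward(rewards, t=0):
    """Return r_t + r_{t+1} + ... + r_n for one episode's list of rewards."""
    return sum(rewards[t:])


episode = [0, 0, 1, 0, 5]            # hypothetical rewards r_0 .. r_4
print(future_reward(episode))        # 6
print(future_reward(episode, t=3))   # 5
```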

References: [84]


performing a, and following

Diagram labels: s, a, s', r

Update rule terms: New State, Old State, Reward, Learning Rate, Discount Factor
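
The labels above appear to annotate the standard tabular Q-learning update, $Q(s, a) \leftarrow Q(s, a) + \alpha \, [\, r + \gamma \max_{a'} Q(s', a') - Q(s, a) \,]$, where $\alpha$ is the learning rate and $\gamma$ the discount factor. The sketch below assumes that form; the function name, the state names and the default values of `alpha` and `gamma` are illustrative choices, not taken from the slides.

```python
from collections import defaultdict


def q_update(Q, s, a, r, s_next, actions, alpha=0.1, gamma=0.9):
    """Apply one Q-learning update for the transition (s, a, r, s_next)."""
    best_next = max(Q[(s_next, a2)] for a2 in actions)  # max over a' of Q(s', a')
    td_target = r + gamma * best_next                   # reward plus discounted future value
    Q[(s, a)] += alpha * (td_target - Q[(s, a)])        # move the old estimate toward the target
    return Q[(s, a)]


Q = defaultdict(float)                  # unseen (state, action) pairs start at 0.0
actions = ["UP", "DOWN", "LEFT", "RIGHT"]
Q[("s1", "UP")] = 2.0                   # pretend the new state already has a known value
new_value = q_update(Q, "s0", "RIGHT", r=1.0, s_next="s1", actions=actions)
print(new_value)
```

With these numbers the target is 1.0 + 0.9 × 2.0 = 2.8, and the old estimate of 0 moves one tenth of the way toward it.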


References: [84]

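Q-table example: states S1 to S4 (rows) by actions A1 to A4 (columns). Entries listed by row, column positions not shown:

* S1: +1, +2
* S2: +2, +1
* S3: +1
* S4: +1, +1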
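
A Q-table of this shape can be held as a mapping from each state to one value per action, and acting greedily means picking the column with the largest value in the current row. The numbers below are hypothetical placeholders chosen for illustration; they are not the slide's table.

```python
ACTIONS = ["A1", "A2", "A3", "A4"]

# Hypothetical Q-values, one row per state and one column per action.
q_table = {
    "S1": [1.0, 2.0, -1.0, 0.0],
    "S2": [2.0, 0.0, 1.0, -2.0],
    "S3": [-1.0, 1.0, 0.0, -2.0],
    "S4": [-2.0, 0.0, 0.5, 1.0],
}


def greedy_action(state):
    """Return the action with the highest Q-value in the given state."""
    row = q_table[state]
    return ACTIONS[row.index(max(row))]


for state in q_table:
    print(state, greedy_action(state))
```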


$256^{84 \times 84 \times 4}$ rows in the Q-table!
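
To get a feel for why a table cannot work here, the size of the state space can be estimated with a few lines of arithmetic. The sketch assumes the usual Atari preprocessing of four stacked 84×84 grayscale frames with 256 gray levels per pixel; the variable names are illustrative.

```python
import math

gray_levels = 256                    # assumed values per pixel
width, height, frames = 84, 84, 4    # assumed input: four stacked 84x84 frames

pixels = width * height * frames     # number of pixel values in one state
# Number of decimal digits in gray_levels ** pixels, computed via logarithms
# so the huge integer never has to be printed.
digits = math.floor(pixels * math.log10(gray_levels)) + 1

print(pixels)   # 28224
print(digits)   # tens of thousands of decimal digits
```

A count with that many digits is far beyond anything that could be stored or visited, which motivates approximating the Q-function with a neural network instead of a table.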

References: [83, 84]


References: [83]


Mnih et al. "Playing Atari with Deep Reinforcement Learning." 2013.

References: [83]


References: [85]

Panels: After 10 Minutes, After 120 Minutes, After 240 Minutes


References: [83]


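Given a transition <s, a, r, s'>, the Q-table update rule in the previous algorithm must be replaced with the following:

1. Do a feedforward pass for the current state s to get the predicted Q-values for all actions.
2. Do a feedforward pass for the next state s' and calculate the maximum over all network outputs, $\max_{a'} Q(s', a')$.
3. Set the Q-value target for action a to $r + \gamma \max_{a'} Q(s', a')$ (use the max calculated in step 2).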
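
A minimal sketch of how the training target for one transition might be assembled from the two forward passes is shown below. It assumes the network outputs are already available as plain lists of Q-values, one per action. Leaving the targets for the other actions equal to the predictions (so they contribute zero error) is the usual convention and is assumed here; the `terminal` flag and the function name `dqn_targets` are additions for illustration.

```python
def dqn_targets(q_s, q_s_next, action, reward, gamma=0.9, terminal=False):
    """Build the per-action regression targets for one transition (s, a, r, s').

    q_s:      network outputs for the current state s, one value per action
    q_s_next: network outputs for the next state s', one value per action
    action:   index of the action a that was taken
    """
    targets = list(q_s)  # untouched actions keep their prediction, so their error is 0
    # Assumption: no future value is added when s' ends the episode.
    max_next = 0.0 if terminal else max(q_s_next)
    targets[action] = reward + gamma * max_next
    return targets


q_s = [0.5, 1.0, -0.2]        # hypothetical outputs for s
q_s_next = [0.0, 2.0, 1.0]    # hypothetical outputs for s'
targets = dqn_targets(q_s, q_s_next, action=0, reward=1.0)
print(targets)
```

The network is then trained to reduce the squared difference between its outputs for s and these targets, which only differ from the predictions at the index of the action taken.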

References: [83]


References: (Karaman RRT*)


Soccer is harder than Chess

References: [8, 9]


Example: https://github.com/matthiasplappert/keras-rl


http://cars.mit.edu


* 1st place: Titan XP
* 2nd place: GeForce GTX 1080 Ti
* 3rd place: Jetson TX2


Slides available at http://cars.mit.edu/gtc