De-anonymizing Data

CompSci 590.03 Instructor: Ashwin Machanavajjhala

1 Lecture 2 : 590.03 Fall 12

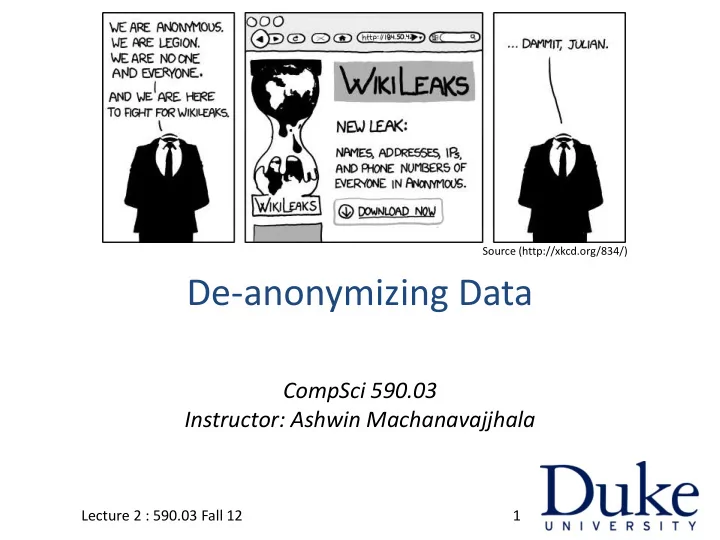

Source (http://xkcd.org/834/)

Announcements

– Project ideas will be posted on the site by Friday.
– You are welcome to send me (or talk to me about) your own ideas.


– Passive Attacks
– Active Attacks


Figure: Persons 1, …, N contribute records r1, …, rN to a DB (e.g., Census DB, Hospital DB) that is used by doctors, medical researchers, economists, information retrieval researchers, and recommendation algorithms.

Figure: two tables, Medical Data and Voter List; the surviving Voter List labels are "Registered", "affiliation", "voted", and "date".

uniquely identified using ZipCode, Birth Date, and Sex. Name linked to Diagnosis


Quasi-identifier: the combination of ZipCode, Birth Date, and Sex uniquely identifies 87% of the US population.
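The linkage between the two tables can be sketched as a join on the quasi-identifier. This is a minimal illustration, assuming toy rows and field names (`zip`, `birth_date`, `sex`, `name`, `diagnosis`) that are invented here and do not come from the lecture.

```python
from collections import defaultdict

# Hypothetical toy data; every name and value is invented for illustration.
medical = [
    {"zip": "27708", "birth_date": "1965-03-12", "sex": "F", "diagnosis": "asthma"},
    {"zip": "27705", "birth_date": "1980-07-01", "sex": "M", "diagnosis": "flu"},
]
voters = [
    {"name": "Alice Example", "zip": "27708", "birth_date": "1965-03-12", "sex": "F"},
    {"name": "Bob Example", "zip": "27705", "birth_date": "1980-07-01", "sex": "M"},
    {"name": "Carl Example", "zip": "27705", "birth_date": "1980-07-01", "sex": "M"},
]

QUASI_IDENTIFIER = ("zip", "birth_date", "sex")


def link(medical_rows, voter_rows, keys=QUASI_IDENTIFIER):
    """Return {name: diagnosis} for medical rows that match exactly one voter."""
    by_key = defaultdict(list)
    for voter in voter_rows:
        by_key[tuple(voter[k] for k in keys)].append(voter["name"])
    linked = {}
    for row in medical_rows:
        names = by_key.get(tuple(row[k] for k in keys), [])
        if len(names) == 1:  # a unique match re-identifies the medical record
            linked[names[0]] = row["diagnosis"]
    return linked


linked = link(medical, voters)
print(linked)
```

In this toy data only the first medical row is linked to a name; the second matches two voters, so the quasi-identifier does not single anyone out.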


Statistical Privacy (Trusted Collector) Problem

Figure: Individuals 1, …, N send records r1, …, rN to a Server, which stores them in DB.

Utility:

Privacy: No breach about any individual

Statistical Privacy (Untrusted Collector) Problem

Figure: Individuals 1, …, N send records r1, …, rN to a Server, which stores them in DB.

– heads with probability p, and
– tails with probability 1 – p (p > ½)

| Coin flip | True Answer = Yes | True Answer = No |
|---|---|---|
| Heads | Yes | No |
| Tails | No | Yes |
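The coin-flip table can be simulated directly. The sketch below assumes a population size, a true "Yes" rate, and p = 0.75, all chosen only for illustration; the estimator inverts the expected fraction of reported "Yes" answers, p·π + (1 – p)·(1 – π), to recover the true fraction π.

```python
import random


def randomized_response(true_answer: bool, p: float, rng: random.Random) -> bool:
    """Report the truth on heads (probability p), the opposite on tails."""
    heads = rng.random() < p
    return true_answer if heads else not true_answer


def estimate_true_fraction(reported_yes_fraction: float, p: float) -> float:
    """Solve reported = p*pi + (1 - p)*(1 - pi) for pi (requires p != 1/2)."""
    return (reported_yes_fraction - (1 - p)) / (2 * p - 1)


rng = random.Random(0)                      # fixed seed for repeatability
p = 0.75                                    # assumed bias, p > 1/2
truth = [i < 3000 for i in range(10000)]    # assumed population: 30% true "Yes"
reports = [randomized_response(t, p, rng) for t in truth]
observed = sum(reports) / len(reports)
estimate = estimate_true_fraction(observed, p)
print(observed, estimate)
```

Each individual report is deniable, yet the aggregate estimate lands close to the true fraction when the population is large.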

Statistical Privacy (Trusted Collector) Problem


Figure: Individuals 1, …, N contribute records r1, …, rN to a Hospital DB. Example queries: "Correlate Genome to disease" and "How many allergy patients?"

answering a few questions, server will run out of privacy budget and not be able to answer any more questions.


Figure: Individuals 1, …, N contribute records r1, …, rN to a Hospital DB. The analyst says: "I won't tell you what questions I am interested in!" (image source: writingcenterunderground.wordpress.com)

Figure: Individuals 1, …, N contribute records r1, …, rN to a Hospital DB, from which a version DB’ is produced.

Answer any number of questions directly on DB’ without any modifications.

publishing (with insufficient sanitization).


– Passive Attacks
– Active Attacks

Not heavy tailed: Normal Distribution

Heavy tailed: Laplace Distribution

Heavy tailed: Zipf Distribution
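A quick numeric comparison shows the difference in tail decay. This sketch assumes both distributions are centered at zero and scaled to variance 1 (an assumption made here so the tails are comparable); the Zipf case is omitted.

```python
import math


def normal_tail(t: float) -> float:
    """P(|X| > t) for a standard normal X (variance 1)."""
    return math.erfc(t / math.sqrt(2))


def laplace_tail(t: float) -> float:
    """P(|X| > t) for a zero-mean Laplace X with scale b = 1/sqrt(2), i.e. variance 1."""
    b = 1 / math.sqrt(2)
    return math.exp(-t / b)


for t in (2, 4, 6):
    print(t, normal_tail(t), laplace_tail(t))
```

The Laplace tail shrinks exponentially in t while the normal tail shrinks like exp(–t²/2), so the gap between the two widens quickly as t grows.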

– Problem of recommending new items to a user based on their ratings on previously seen items.


Figure: ratings matrix of Users by Movies; each cell holds a Rating + TimeStamp. A row is a Record (r); a column is an Attribute.

– Set (or number) of non-null attributes in a record or column
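As a small illustration of this definition, the sketch below computes the set of non-null attributes of a record and the set of records with a non-null value in a column (often called the support in the de-anonymization literature). The ratings table and its names are hypothetical.

```python
# Hypothetical ratings: rows are users (records), columns are movies (attributes).
# None marks a null entry, i.e. the user has not rated that movie.
ratings = {
    "user1": {"movieA": 3, "movieB": None, "movieC": 5},
    "user2": {"movieA": None, "movieB": None, "movieC": 1},
    "user3": {"movieA": 4, "movieB": 2, "movieC": None},
}


def record_support(record):
    """Set of attributes with a non-null value in one record."""
    return {attr for attr, value in record.items() if value is not None}


def column_support(table, attr):
    """Set of records with a non-null value for one attribute."""
    return {user for user, record in table.items() if record.get(attr) is not None}


print(record_support(ratings["user1"]), len(record_support(ratings["user1"])))
print(column_support(ratings, "movieB"))
```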


ScoreBoard

similarity of an attribute in aux to the same attribute in r’.

OR


Theorem 1: Suppose we use Scoreboard with α = 1 – ε. If Aux contains m randomly chosen attributes s.t. Then Scoreboard returns a record r’ such that

Pr [Sim(m, r’) > 1 – ε – δ ] > 1 – ε
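A Scoreboard-style matcher can be sketched as follows. This is an assumption-laden illustration, not the lecture's exact definition: the per-attribute similarity function, the rule that a record's score is the minimum similarity over the attributes in aux, and the "score > α" acceptance test are all assumed here, and the database is invented.

```python
def attribute_similarity(a, b, tolerance=1):
    """Hypothetical similarity: 1.0 if both ratings exist and differ by at most `tolerance`."""
    if a is None or b is None:
        return 0.0
    return 1.0 if abs(a - b) <= tolerance else 0.0


def score(aux, record):
    """Assumed scoring rule: minimum similarity over the attributes present in aux.

    aux must be non-empty.
    """
    return min(attribute_similarity(value, record.get(attr)) for attr, value in aux.items())


def scoreboard(aux, database, alpha):
    """Return the ids of all records whose score exceeds alpha."""
    return [rid for rid, record in database.items() if score(aux, record) > alpha]


# Invented "anonymized" database and auxiliary knowledge about one target.
database = {
    "r1": {"m1": 5, "m2": 3, "m3": None, "m4": 1},
    "r2": {"m1": 1, "m2": 3, "m3": 4, "m4": None},
    "r3": {"m1": 5, "m2": None, "m3": 2, "m4": 1},
}
aux = {"m1": 5, "m2": 4}

epsilon = 0.1
matches = scoreboard(aux, database, alpha=1 - epsilon)
print(matches)
```

Because the score is a minimum, a single badly mismatched attribute rules a candidate out, which is why a handful of known attributes can narrow a sparse dataset to one record.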


deanonymized.


identify the user’s record from the “anonymized” dataset with high probability

knowledge


– Passive Attacks
– Active Attacks

entity, and each edge represents a certain relationship between two entities

Facebook, Yahoo! Messenger, etc.


– Remove the label of each node and publish only the structure of the network
– Nodes may still be re-identified based on network structure

Figure: example network with nodes Alice, Bob, Cathy, Diane, Ed, Fred, and Grace.
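To see how structure alone can re-identify a node, the sketch below strips the labels from a small graph and then looks for nodes consistent with an attacker who knows only the target's degree. The edge list is invented (the slide's actual edges are not recoverable here), and degree knowledge is just one assumed form of background knowledge.

```python
import random

# Hypothetical edges over the seven names on the slide; the real figure may differ.
edges = [
    ("Alice", "Bob"), ("Bob", "Cathy"), ("Bob", "Diane"), ("Bob", "Ed"),
    ("Cathy", "Diane"), ("Ed", "Fred"), ("Fred", "Grace"), ("Ed", "Grace"),
]


def naive_anonymize(edge_list, rng):
    """Replace each name with a random integer id, keeping only the structure."""
    names = sorted({n for edge in edge_list for n in edge})
    ids = list(range(len(names)))
    rng.shuffle(ids)
    mapping = dict(zip(names, ids))
    return [(mapping[u], mapping[v]) for u, v in edge_list], mapping


def degrees(edge_list):
    """Number of incident edges for every node that appears in the edge list."""
    deg = {}
    for u, v in edge_list:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


def candidates(anon_edges, known_degree):
    """Anonymized nodes whose degree matches what the attacker knows."""
    return sorted(n for n, d in degrees(anon_edges).items() if d == known_degree)


rng = random.Random(1)
anon_edges, secret_mapping = naive_anonymize(edges, rng)
bob_candidates = candidates(anon_edges, known_degree=4)  # attacker knows Bob has 4 contacts
print(bob_candidates)
```

In this toy graph Bob is the only node of degree 4, so his anonymized id is pinned down exactly, while a degree of 2 would leave four candidates.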


– Each node represents an individual
– Each edge between two individuals indicates that they have exchanged emails

the anonymized network


[Liu and Terzi, SIGMOD 2008]

knowledge about the network structure, e.g., a subgraph of the network. [Zhou and Pei, ICDE 2008; Hay et al., VLDB 2008]

nodes in the anonymized network are labeled. [Pang et al., SIGCOMM CCR 2006]


– Some individuals share the sensitive attribute, while others keep it private

sensitive attributes using

– Links in the social network
– Groups that the individuals belong to

profiles as training data. [Zheleva and Getoor, WWW 2009]


public profiles.


node in the social network.

– Feature value Lx[y] = 1 if and only if (x,y) is an edge.
– Train a model on all pairs (Lx, sensitive value(x)), for x’s with public sensitive values.
– Use learnt model to predict private sensitive values
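The three steps above can be sketched on a toy graph. The nodes, edges, and labels are invented, and the "model" is a deliberately simple stand-in (return the label of the training vector with the largest dot product, i.e. the most shared neighbors); the slides do not say which classifier is used.

```python
def link_features(node, edges, all_nodes):
    """Binary vector L_x with L_x[y] = 1 if and only if (x, y) is an edge."""
    neighbors = {v for u, v in edges if u == node} | {u for u, v in edges if v == node}
    return [1 if y in neighbors else 0 for y in all_nodes]


def predict_nearest(features, training):
    """Stand-in model: label of the training vector with the largest dot product."""
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))
    _, best_label = max(training, key=lambda pair: dot(features, pair[0]))
    return best_label


# Hypothetical network: "t" keeps its sensitive value private.
nodes = ["a", "b", "c", "d", "t", "h1", "h2", "h3", "h4"]
edges = [
    ("a", "h1"), ("a", "h2"), ("b", "h1"), ("b", "h2"),
    ("c", "h3"), ("c", "h4"), ("d", "h3"), ("d", "h4"),
    ("t", "h1"), ("t", "h2"),
]
public = {"a": "X", "b": "X", "c": "Y", "d": "Y"}  # public sensitive values

training = [(link_features(x, edges, nodes), label) for x, label in public.items()]
prediction = predict_nearest(link_features("t", edges, nodes), training)
print(prediction)
```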


group in the social network.

– Feature value Gx[y] = 1 if and only if x belongs to group y.
– Train a model on all pairs (Gx, sensitive value(x)), where x’s sensitive value is public.
– Use model to predict private sensitive values
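A group-based predictor along these lines might look like the sketch below. The memberships and labels are hypothetical, and the model is an assumed per-group voting scheme (count public labels in each group, then sum the counts over the target's groups) standing in for whatever classifier the original work used.

```python
from collections import Counter, defaultdict


def group_features(user, memberships, all_groups):
    """Binary vector G_x with G_x[y] = 1 if and only if x belongs to group y."""
    return [1 if g in memberships[user] else 0 for g in all_groups]


def train(memberships, public_labels, all_groups):
    """For each group index, count the public labels of that group's members."""
    counts = defaultdict(Counter)
    for user, label in public_labels.items():
        for i, bit in enumerate(group_features(user, memberships, all_groups)):
            if bit:
                counts[i][label] += 1
    return counts


def predict(features, counts):
    """Sum label counts over the user's groups and return the top label (None if no votes)."""
    votes = Counter()
    for i, bit in enumerate(features):
        if bit:
            votes.update(counts.get(i, {}))
    return votes.most_common(1)[0][0] if votes else None


# Hypothetical data: "target" hides its sensitive value.
groups = ["g1", "g2", "g3"]
memberships = {
    "u1": {"g1"}, "u2": {"g1", "g2"}, "u3": {"g3"}, "u4": {"g3"}, "target": {"g1"},
}
public = {"u1": "A", "u2": "A", "u3": "B", "u4": "B"}

model = train(memberships, public, groups)
prediction = predict(group_features("target", memberships, groups), model)
print(prediction)
```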


| Method | Flickr (Location) | Facebook (Gender) | Facebook (Political View) | Dogster (Dog Breed) |
|---|---|---|---|---|
| Baseline | 27.7% | 50% | 56.5% | 28.6% |
| LINK | 56.5% | 68.6% | 58.1% | 60.2% |
| GROUP | 83.6% | 77.2% | 46.6% | 82.0% |

[Zheleva and Getoor, WWW 2009]


[Backstrom et al., WWW 2007]

– Creates a few ‘fake’ Facebook user accounts.

– Create friends using ‘fake’ accounts.


can be identified in the anonymous data.


special graph structure.


W = {w1, …, wk}

X = {x1, …, xk}

with probability 0.5.

Figure: large graph.
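The attacker's planted subgraph over X can be sketched as below. It assumes that consecutive nodes x1, …, xk are joined in a path (as the later run-time discussion of the search suggests) and that every other pair within X is connected independently with probability 0.5; the function and node names are hypothetical, and edges from X to the targeted users W are not modeled.

```python
import itertools
import random


def build_attack_subgraph(k, rng, edge_prob=0.5):
    """Return (nodes, edges) for k new nodes: a path plus random internal edges."""
    nodes = [f"x{i}" for i in range(1, k + 1)]
    # Path x1 - x2 - ... - xk (assumed always present).
    edges = {(nodes[i], nodes[i + 1]) for i in range(k - 1)}
    # Every remaining pair is added independently with probability edge_prob.
    for u, v in itertools.combinations(nodes, 2):
        if (u, v) not in edges and rng.random() < edge_prob:
            edges.add((u, v))
    return nodes, edges


rng = random.Random(42)
x_nodes, x_edges = build_attack_subgraph(10, rng)
print(len(x_nodes), len(x_edges))
```

The random internal edges are what make the planted pattern unlikely to occur elsewhere in a large graph by chance.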


nodes in S.

– There is a one-to-one function f mapping each node in S to a node in S’
– (u,v) is an edge in G[S] if and only if (f(u), f(v)) is an edge in G[S’]

– Think: permuting the nodes
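The "permuting the nodes" view translates into a brute-force check: try every one-to-one mapping from S to S’ and test whether it carries the induced edges of one onto the other. The sketch below assumes an undirected graph without self-loops, is only practical for very small node sets, and uses an invented example graph.

```python
from itertools import permutations


def induced_edges(edges, subset):
    """Edges of the subgraph induced by `subset`, as unordered pairs."""
    subset = set(subset)
    return {frozenset((u, v)) for u, v in edges if u in subset and v in subset}


def find_isomorphism(edges, s, s_prime):
    """Return a bijection f from S to S' preserving induced edges, or None."""
    s, s_prime = list(s), list(s_prime)
    if len(s) != len(s_prime):
        return None
    e1 = induced_edges(edges, s)
    e2 = induced_edges(edges, s_prime)
    for perm in permutations(s_prime):
        f = dict(zip(s, perm))
        # f is a bijection, so equality of the mapped edge set with e2 means
        # (u, v) is an edge exactly when (f(u), f(v)) is an edge.
        if {frozenset((f[u], f[v])) for u, v in map(tuple, e1)} == e2:
            return f
    return None


# Hypothetical graph: two paths (1-2-3 and 4-5-6) and a triangle (7, 8, 9).
edges = [(1, 2), (2, 3), (4, 5), (5, 6), (1, 6), (7, 8), (8, 9), (7, 9)]
f = find_isomorphism(edges, [1, 2, 3], [4, 5, 6])
print(f)
print(find_isomorphism(edges, [1, 2, 3], [7, 8, 9]))
```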


isomorphic to G[X] (call it H).

Large graph (size N)


Subgraph isomorphism is NP-hard

– i.e., Finding X could be hard.

But since X has a path, with random edges, there is a simple brute-force search algorithm with pruning.

Run time: O(N · 2^{O((log log N)^2)})

4.4 million nodes, 77 million edges

by adding 10 nodes.

second.

Figure: Probability of Successful Attack [Backstrom et al., WWW 2007]


knowledge of the structure of the graph

accurately inferring private sensitive values.

successful.


2008

networks, hidden patterns, and structural steganography”, WWW 2007

mixed public and private user profiles”, WWW 2009
