02/17/2020 Introduction to Data Mining, 2nd Edition 1

Data Mining Support Vector Machines Introduction to Data Mining, 2nd Edition by Tan, Steinbach, Karpatne, Kumar

02/17/2020 Introduction to Data Mining, 2nd Edition 2

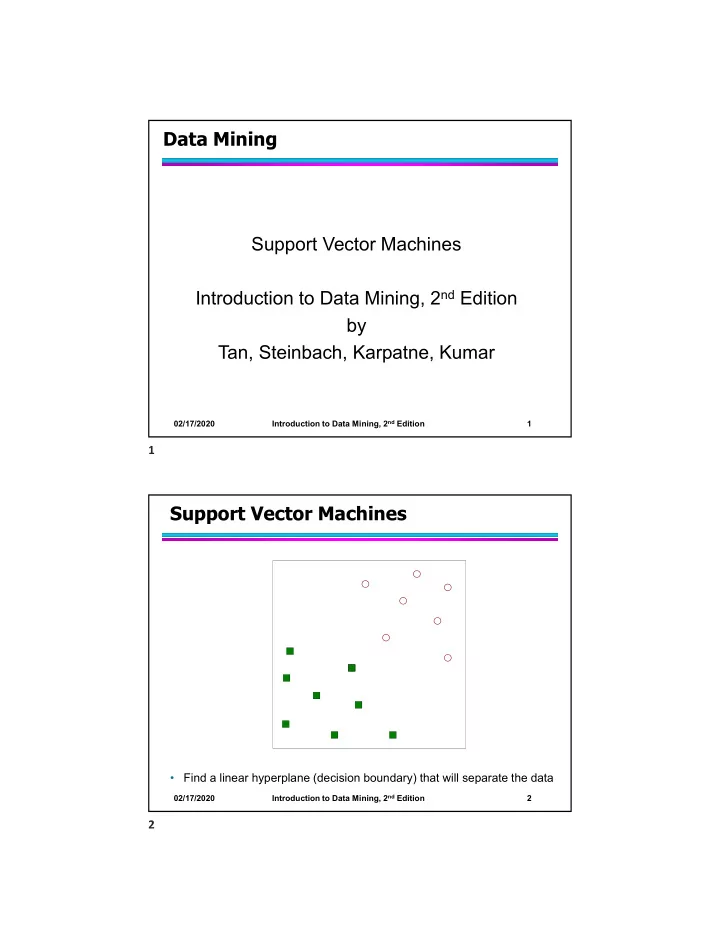

Support Vector Machines

- Find a linear hyperplane (decision boundary) that will separate the data