Data Management Systems

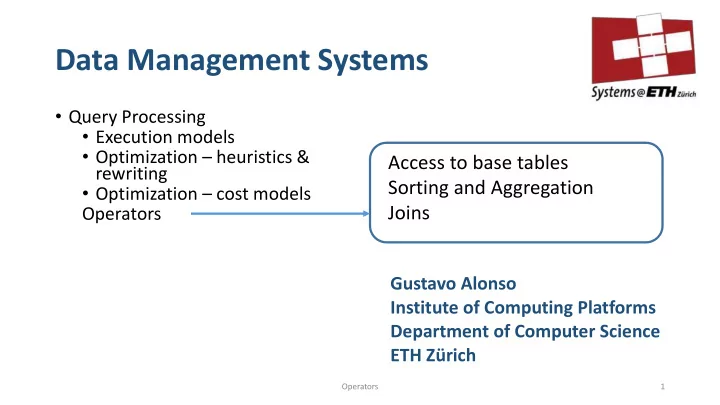

- Query Processing

- Execution models

- Optimization – heuristics & rewriting

- Optimization – cost models

Operators

Gustavo Alonso

Institute of Computing Platforms, Department of Computer Science, ETH Zürich

1 Operators

- Access to base tables

- Sorting and Aggregation

- Joins