CSE 373: Binary heaps

Michael Lee

Monday, Feb 5, 2018

Course overview

The course so far...
◮ Reviewing manipulating arrays and nodes
◮ Algorithm analysis
◮ Dictionaries (tree-based and hash-based)

Coming up next:
◮ Divide-and-conquer, sorting
◮ Graphs
◮ Misc topics (P vs NP, more?)

Timeline

When are we getting project grades/our midterm back?

Tuesday or Wednesday

Timeline

Do we have something due soon?
◮ Project 3 will be released today or tomorrow
◮ Due dates:
    ◮ Part 1 due in two weeks (Fri, Feb 16)
    ◮ Full project due in three weeks (Fri, Feb 23)
◮ Partner selection
    ◮ Selection form due Fri, Feb 9
    ◮ You MUST find a new partner...
    ◮ ...unless both partners email me and petition to stay together

Today

Motivating question: Suppose we have a collection of “items”. We want to return whatever item has the smallest “priority”.

The Priority Queue ADT

Specifically, we want to implement the Priority Queue ADT:

The Priority Queue ADT

A priority queue stores elements according to their “priority”. It supports the following operations:
◮ removeMin: remove and return the element with the smallest priority
◮ peekMin: find (but do not remove) the smallest element
◮ insert: add a new element to the priority queue
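As a rough illustration of the ADT, the three operations can be written as an abstract interface with one deliberately naive implementation behind it. This is only a sketch: the names `MinPriorityQueue`, `UnsortedListPQ`, `peek_min`, and `remove_min` are hypothetical, and it assumes each item acts as its own priority.

```python
from abc import ABC, abstractmethod


class MinPriorityQueue(ABC):
    """The three ADT operations as an abstract interface (hypothetical names)."""

    @abstractmethod
    def insert(self, item):
        """Add a new element to the priority queue."""

    @abstractmethod
    def peek_min(self):
        """Return the smallest element without removing it."""

    @abstractmethod
    def remove_min(self):
        """Remove and return the smallest element."""


class UnsortedListPQ(MinPriorityQueue):
    """Naive implementation: keep items in arrival order, scan for the min."""

    def __init__(self):
        self._items = []

    def insert(self, item):
        self._items.append(item)

    def peek_min(self):
        if not self._items:
            raise IndexError("peek_min on an empty priority queue")
        return min(self._items)  # full scan of the list

    def remove_min(self):
        smallest = self.peek_min()
        self._items.remove(smallest)  # removes the first occurrence only
        return smallest
```

Any class that provides these three methods satisfies the ADT; the choice of data structure behind them only changes the runtimes.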

The Priority Queue ADT

An alternative definition: instead of yielding the element with the smallest priority, yield the one with the largest priority:

The Priority Queue ADT, alternative definition

A priority queue stores elements according to their “priority”. It supports the following operations:
◮ removeMax: remove and return the element with the largest priority
◮ peekMax: find (but do not remove) the largest element
◮ insert: add a new element to the priority queue

The way we implement both is almost identical – we just tweak how we compare elements

In this class, we will focus on implementing a “min” priority queue
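One way to see how small the "tweak" can be: Python's standard `heapq` module only provides a min-heap, but negating each priority on the way in and out turns it into a max priority queue. The sketch below assumes numeric priorities; the class name `MaxPriorityQueue` and its method names are hypothetical.

```python
import heapq


class MaxPriorityQueue:
    """Max priority queue built on a min-heap by flipping the comparison.

    Assumes priorities are numbers, so negation reverses their order.
    """

    def __init__(self):
        self._heap = []  # stores negated priorities

    def insert(self, priority):
        heapq.heappush(self._heap, -priority)

    def peek_max(self):
        # The smallest negated value corresponds to the largest priority.
        return -self._heap[0]

    def remove_max(self):
        return -heapq.heappop(self._heap)
```

For non-numeric priorities, the same effect could be had by reversing the comparison itself rather than negating values.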

Initial implementation ideas

Fill in this table with the worst-case runtimes:

| Idea | removeMin | peekMin | insert |
|---|---|---|---|
| Unsorted array list | Θ(n) | Θ(n) | Θ(1) |
| Unsorted linked list | Θ(n) | Θ(n) | Θ(1) |
| Sorted array list | Θ(1) | Θ(1) | Θ(n) |
| Sorted linked list | Θ(1) | Θ(1) | Θ(n) |
| Binary tree | Θ(n) | Θ(n) | Θ(log(n)) |
| AVL tree | Θ(log(n)) | Θ(log(n)) | Θ(log(n)) |
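To make the sorted array list row concrete, here is a minimal sketch of that idea. It assumes the list is kept in descending order so the minimum sits at the end, which is one way to get constant-time peekMin and removeMin; the class name `SortedListPQ` is hypothetical.

```python
class SortedListPQ:
    """Sorted array list idea: pay Theta(n) on insert, Theta(1) on peek/remove."""

    def __init__(self):
        self._items = []  # kept in descending order, minimum at the end

    def insert(self, item):
        # Walk past every element that is >= item, then insert there.
        # Both the scan and the shift inside list.insert are linear.
        i = 0
        while i < len(self._items) and self._items[i] >= item:
            i += 1
        self._items.insert(i, item)

    def peek_min(self):
        return self._items[-1]  # last element is the smallest

    def remove_min(self):
        return self._items.pop()  # removing from the end needs no shifting
```

The trade-off is the mirror image of the unsorted list: the work moves from the lookups to the insert.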

Initial implementation ideas

We want something optimized for both frequent inserts and removes.

An AVL tree (or some tree-ish thing) seems good enough... right?

Today: learn how to implement a binary heap.

peekMin is O(1), and insert and remove are still O(log(n)) in the worst case.

However, insert is O(1) in the average case!

Binary heap invariants

Idea: adapt the tree-based method

Insight: in a tree, finding the min is expensive! Rather than having it to the left, have it on the top!

[Figure panels: A BST or AVL tree; A binary heap]

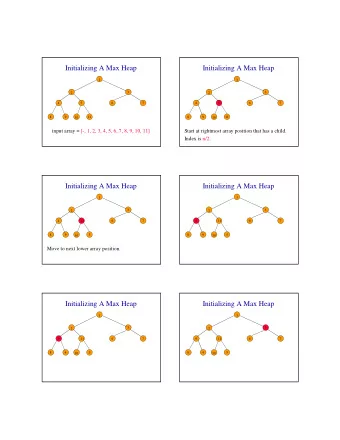

Binary heap invariants

We now need to change our invariants...

Binary heap invariants

A binary heap has three invariants:
◮ Num children: Every node has at most 2 children
◮ Heap: Every node is smaller than its children
◮ Structure: Every heap is a “complete” tree – it has no “gaps”
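The invariants can be checked mechanically. The sketch below assumes the tree is written out in level order as a Python list, with `None` marking a missing node; that layout is an assumption made for illustration, not something these slides have introduced. The function name `is_binary_min_heap` and the sample values in the tests that accompany it are hypothetical.

```python
def is_binary_min_heap(level_order):
    """Check the heap and structure invariants of a level-order tree.

    The children of index i are taken to be at 2*i + 1 and 2*i + 2, so the
    "at most 2 children" invariant holds by construction.
    """
    n = len(level_order)

    # Structure: a complete tree has no gaps, so no value may follow a None.
    seen_gap = False
    for value in level_order:
        if value is None:
            seen_gap = True
        elif seen_gap:
            return False

    # Heap: no child may be smaller than its parent.
    # Equal values are accepted here, which is an assumption of this sketch.
    for i, value in enumerate(level_order):
        if value is None:
            continue
        for child in (2 * i + 1, 2 * i + 2):
            if child < n and level_order[child] is not None:
                if level_order[child] < value:
                    return False
    return True
```

A list such as `[2, 4, 5, 6, 11, 7, None, 10, 9]` fails the structure check because values appear after the gap, while `[2, 4, 5, 3]` fails the heap check because 3 sits below 4.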

Example of a heap

A broken heap

[Figure: tree with node values 2, 4, 5, 6, 11, 7, 10, 9]

Example of a heap

A fixed heap

[Figure: tree with node values 2, 4, 5, 6, 11, 7, 7, 10, 9]

The heap invariant

Are these all heaps?

[Figure: several candidate trees]

Implementing peekMin

How do we implement peekMin?

[Figure: heap with node values 2, 4, 5, 6, 11, 7, 7, 10, 9]

Easy: just return the root. Runtime: Θ(1).
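In code, "return the root" is a single lookup. The sketch below assumes the heap is stored in level order in a list, so the root lives at index 0; the function name `peek_min` is hypothetical. Python's standard `heapq` module uses the same layout, so it serves here to build a valid heap.

```python
import heapq


def peek_min(heap):
    """Return the root of a level-order min-heap without removing it."""
    if not heap:
        raise IndexError("peek_min on an empty heap")
    return heap[0]  # one index lookup, independent of the heap's size


# Build a valid min-heap from arbitrary values, then peek at its root.
items = [9, 4, 7, 2, 11]
heapq.heapify(items)
smallest = peek_min(items)
```

After the call, `items` still holds all five values: peeking does not change the heap.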

Implementing removeMin

What about removeMin?

Step 1: Just remove it!

[Figure: the heap before and after removing the root, 2]

Problem: Structure invariant is broken – the tree has a gap!
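The slide stops at the problem. One common repair, which is not taken from these slides, is to move the last node into the gap at the root and then swap it downward with its smaller child until the heap invariant holds again. The sketch below assumes a level-order list layout; the function name `remove_min` is hypothetical.

```python
def remove_min(heap):
    """Remove and return the smallest item of a level-order min-heap list."""
    if not heap:
        raise IndexError("remove_min on an empty heap")
    smallest = heap[0]
    last = heap.pop()  # taking the last node keeps the tree complete
    if heap:
        heap[0] = last  # fill the gap at the root
        i = 0
        n = len(heap)
        while True:
            left, right = 2 * i + 1, 2 * i + 2
            child = i
            # Pick the smallest of the node and its (up to two) children.
            if left < n and heap[left] < heap[child]:
                child = left
            if right < n and heap[right] < heap[child]:
                child = right
            if child == i:
                break  # neither child is smaller: heap invariant restored
            heap[i], heap[child] = heap[child], heap[i]
            i = child
    return smallest
```

Each swap moves one level down the tree, so the loop runs at most once per level of a complete tree.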
