
Laboratory for Perceptual Robotics – College of Information and Computer Sciences

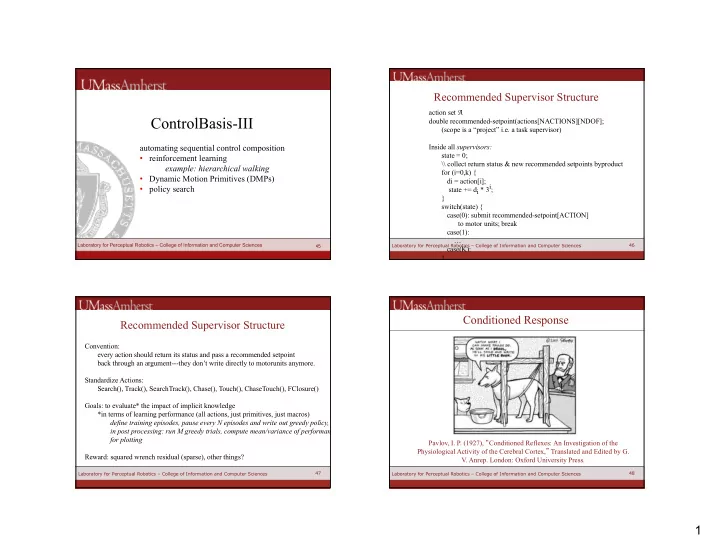

ControlBasis-III


automating sequential control composition

- reinforcement learning

example: hierarchical walking

- Dynamic Motion Primitives (DMPs)

- policy search


Action set A:

    double recommended_setpoint[NACTIONS][NDOF];   // scope is a "project", i.e. a task supervisor

Inside all supervisors:

    state = 0;
    // collect return status; new recommended setpoints are a byproduct
    for (i=0,k) {
        d_i = action[i];
        state += d_i * 3^i;   // 3^i means 3 to the power i (base-3 place value)
    }
    switch (state) {
        case(0): submit recommended_setpoint[ACTION] to motor units; break;
        case(1): …
        case(K):
    }
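The status-packing step in the supervisor loop above can be sketched in C as follows. This is a minimal sketch under stated assumptions, not the lab's implementation: the three status names and their numeric values, and the helper names `encode_state` and `decode_status`, are hypothetical. The slide only implies that each action contributes one digit weighted by a power of 3.

```c
#include <assert.h>

/* Hypothetical status codes (assumption): one of three values per action. */
enum { NO_REFERENCE = 0, TRANSIENT = 1, CONVERGED = 2 };

/* Pack one base-3 digit per action into a single integer:
 * state = sum over i of status[i] * 3^i, so 0 <= state < 3^n_actions. */
int encode_state(const int status[], int n_actions)
{
    int state = 0;
    int weight = 1;                      /* holds 3^i, built incrementally */
    for (int i = 0; i < n_actions; ++i) {
        assert(status[i] >= 0 && status[i] <= 2);
        state += status[i] * weight;
        weight *= 3;
    }
    return state;
}

/* Inverse of encode_state: recover the status digit of action i. */
int decode_status(int state, int i)
{
    for (int k = 0; k < i; ++k)
        state /= 3;                      /* shift out the lower digits */
    return state % 3;
}
```

With three actions reporting CONVERGED, NO_REFERENCE, and TRANSIENT, the packed state is 2 + 0·3 + 1·9 = 11, and the supervisor's `switch` can dispatch on that single integer.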

Recommended Supervisor Structure


Convention: every action should return its status and pass a recommended setpoint back through an argument; actions don't write directly to the motor units anymore.

Standardize actions: Search(), Track(), SearchTrack(), Chase(), Touch(), ChaseTouch(), FClosure()

Goals: to evaluate *the impact of implicit knowledge* in terms of learning performance (all actions, just primitives, just macros)

- define training episodes

- pause every N episodes and write out the greedy policy

- in post-processing: run M greedy trials, compute mean/variance of performance for plotting

Reward: squared wrench residual (sparse), other things?
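The calling convention described above (return a status, hand the recommended setpoint back through an argument, never touch the motor units) might look like the following in C. This is an illustrative sketch only: `track_sketch`, its parameters, the tolerance, `NDOF = 2`, and the status names are all assumptions and do not reproduce the course's actual Track() action.

```c
#include <assert.h>

#define NDOF 2   /* assumed number of degrees of freedom */

/* Hypothetical status codes (assumption). */
enum { NO_REFERENCE = 0, TRANSIENT = 1, CONVERGED = 2 };

/* Hypothetical action: recommend moving every joint to `goal`.
 * Returns its status and writes the recommendation into `recommended`;
 * it does not command the motor units itself. */
int track_sketch(const double current[NDOF], const double goal[NDOF],
                 int goal_visible, double recommended[NDOF])
{
    const double tol = 0.01;             /* assumed convergence tolerance */
    int converged = 1;

    if (!goal_visible)
        return NO_REFERENCE;             /* `recommended` is left untouched */

    for (int i = 0; i < NDOF; ++i) {
        double err = goal[i] - current[i];
        recommended[i] = goal[i];
        if (err > tol || err < -tol)
            converged = 0;
    }
    return converged ? CONVERGED : TRANSIENT;
}
```

Because the action only recommends, the supervisor stays free to ignore the setpoint or to pick a different action's recommendation for the same state.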

Recommended Supervisor Structure
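For the post-processing step on this slide (run M greedy trials, then compute the mean and variance of performance), a minimal C sketch is given here. The function name `mean_variance` and the choice of the unbiased (M - 1) sample variance are assumptions; the slide does not say which variance estimator is intended.

```c
#include <assert.h>
#include <stddef.h>

/* Mean and unbiased sample variance of m trial scores (two-pass method).
 * The variance is reported as 0 when only one trial is available. */
void mean_variance(const double score[], size_t m, double *mean, double *var)
{
    assert(m > 0);

    double sum = 0.0;
    for (size_t i = 0; i < m; ++i)
        sum += score[i];
    *mean = sum / (double)m;

    double ss = 0.0;                     /* sum of squared deviations */
    for (size_t i = 0; i < m; ++i) {
        double d = score[i] - *mean;
        ss += d * d;
    }
    *var = (m > 1) ? ss / (double)(m - 1) : 0.0;
}
```

Calling this once per checkpoint (every N training episodes) gives one mean and one variance per point on the learning curve.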


Conditioned Response

Pavlov, I. P. (1927). Conditioned Reflexes: An Investigation of the Physiological Activity of the Cerebral Cortex. Translated and edited by G. V. Anrep. London: Oxford University Press.
