SLIDE 1

Concise Introduction to Deep Neural Networks

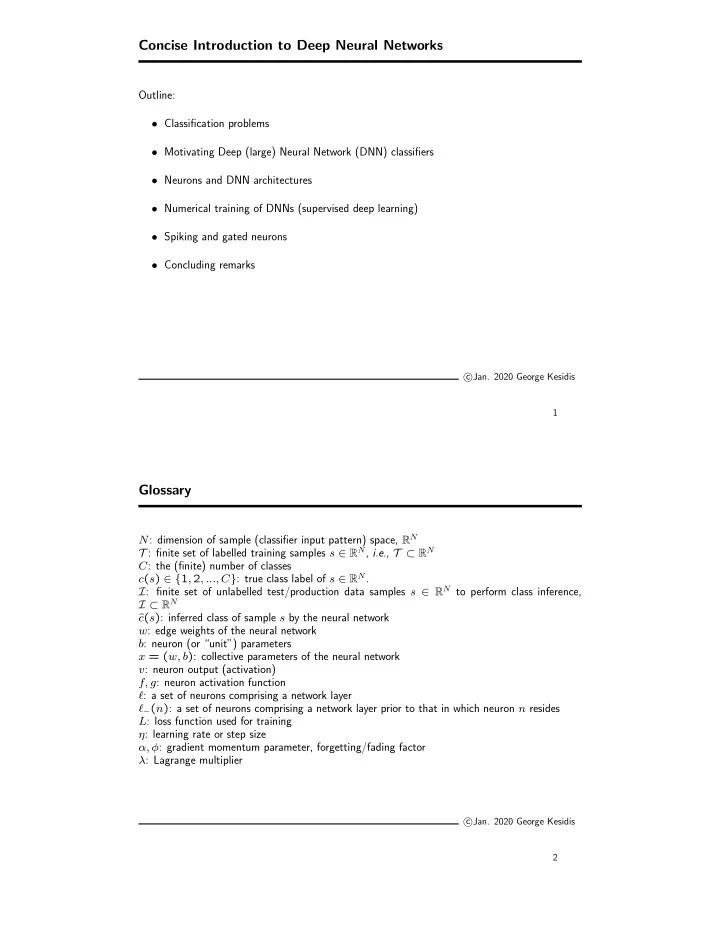

Outline:

- Classification problems

- Motivating Deep (large) Neural Network (DNN) classifiers

- Neurons and DNN architectures

- Numerical training of DNNs (supervised deep learning)

- Spiking and gated neurons

- Concluding remarks

© Jan. 2020 George Kesidis

Glossary

- $N$: dimension of the sample (classifier input pattern) space, $\mathbb{R}^N$

- $T$: finite set of labelled training samples $s \in \mathbb{R}^N$, i.e., $T \subset \mathbb{R}^N$

- $C$: the (finite) number of classes

- $c(s) \in \{1, 2, \ldots, C\}$: true class label of $s \in \mathbb{R}^N$

- $I$: finite set of unlabelled test/production data samples $s \in \mathbb{R}^N$ on which to perform class inference, $I \subset \mathbb{R}^N$

- $\hat{c}(s)$: class of sample $s$ inferred by the neural network

- $w$: edge weights of the neural network

- $b$: neuron (or "unit") parameters

- $x = (w, b)$: collective parameters of the neural network

- $v$: neuron output (activation)

- $f, g$: neuron activation functions

- $\ell$: a set of neurons comprising a network layer

- $\ell(n)$: a set of neurons comprising a network layer prior to that in which neuron $n$ resides

- $L$: loss function used for training

- $\eta$: learning rate or step size

- $\alpha$, [symbol missing in source]: gradient momentum parameter, forgetting/fading factor

- [symbol missing in source]: Lagrange multiplier
