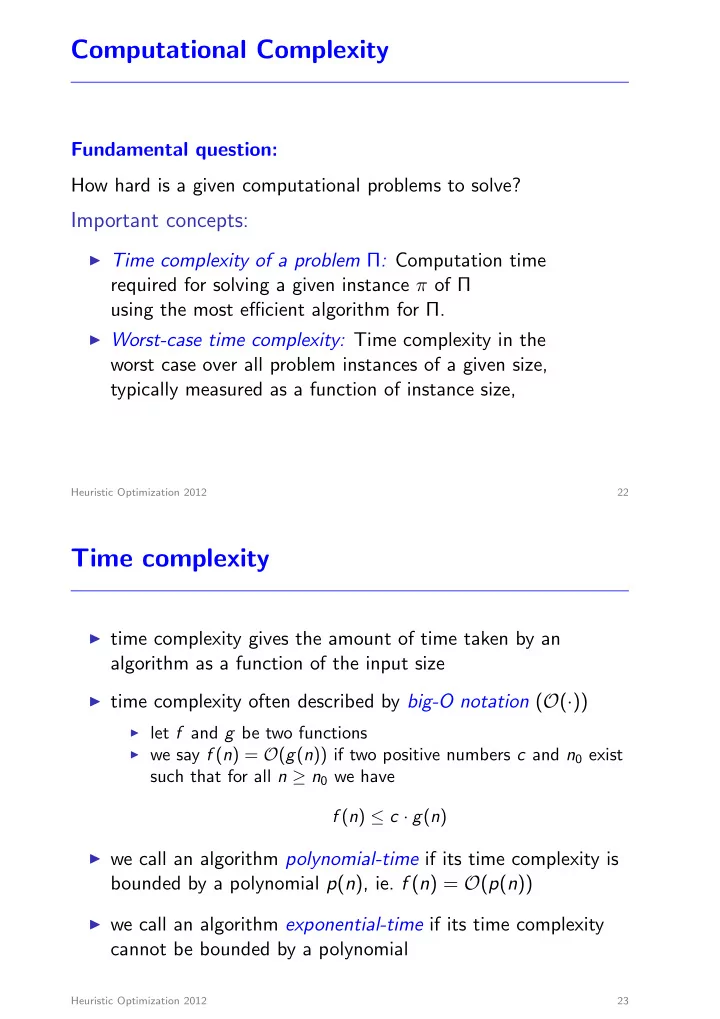

Computational Complexity

Fundamental question: How hard is a given computational problem to solve?

Important concepts:

◮ Time complexity of a problem Π: Computation time required for solving a given instance π of Π using the most efficient algorithm for Π.

◮ Worst-case time complexity: Time complexity in the worst case over all problem instances of a given size, typically measured as a function of instance size.
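The worst-case notion can be illustrated with a small sketch. It is an illustration only: it measures one fixed algorithm (linear search) rather than the most efficient algorithm for the problem, it counts comparisons as a stand-in for computation time, and the function names are hypothetical.

```python
def comparisons_linear_search(items, target):
    """Count the comparisons linear search makes before it stops."""
    count = 0
    for x in items:
        count += 1
        if x == target:
            break
    return count


def worst_case_comparisons(n):
    """Maximum comparison count over all instances of size n.

    Assumption: an instance is the list 0..n-1 together with a target,
    where the target is either one of the n elements or absent (-1).
    """
    items = list(range(n))
    targets = items + [-1]
    return max(comparisons_linear_search(items, t) for t in targets)


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(n, worst_case_comparisons(n))
```

Under these assumptions the worst case grows linearly with the instance size n, because the costliest instances are those where the target is last or missing.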

Heuristic Optimization 2012 22

Time complexity

◮ time complexity gives the amount of time taken by an algorithm as a function of the input size

◮ time complexity often described by big-O notation (O(·))

◮ let f and g be two functions

◮ we say f(n) = O(g(n)) if two positive numbers c and n0 exist such that for all n ≥ n0 we have f(n) ≤ c · g(n)

◮ we call an algorithm polynomial-time if its time complexity is bounded by a polynomial p(n), i.e. f(n) = O(p(n))

◮ we call an algorithm exponential-time if its time complexity cannot be bounded by a polynomial
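The definition of f(n) = O(g(n)) can be explored numerically with a minimal sketch. The helper `holds_up_to` and the example functions are hypothetical choices for illustration. A finite check can refute a proposed pair of witnesses (c, n0), but it cannot prove an asymptotic bound, which needs an argument valid for all n ≥ n0.

```python
def holds_up_to(f, g, c, n0, n_max):
    """Check f(n) <= c * g(n) for every integer n with n0 <= n <= n_max."""
    return all(f(n) <= c * g(n) for n in range(n0, n_max + 1))


def f(n):
    # Example running-time function (an assumption for illustration).
    return 3 * n * n + 10 * n + 5


def quadratic(n):
    return n * n


def cubic(n):
    return n ** 3


def exponential(n):
    return 2 ** n


if __name__ == "__main__":
    # c = 4 with n0 = 11 is consistent with f(n) = O(n^2) on the tested range.
    print(holds_up_to(f, quadratic, c=4, n0=11, n_max=1000))
    # The same c fails if n0 is too small: f(10) = 405 > 4 * 100.
    print(holds_up_to(f, quadratic, c=4, n0=10, n_max=1000))
    # 2^n eventually exceeds c * n^3, even for a large constant c.
    print(holds_up_to(exponential, cubic, c=1000, n0=1, n_max=100))
```

For the quadratic example the bound can also be verified by hand: 3n² + 10n + 5 ≤ 4n² is equivalent to n² − 10n − 5 ≥ 0, which holds for every n ≥ 11.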
