ST 370 Probability and Statistics for Engineers

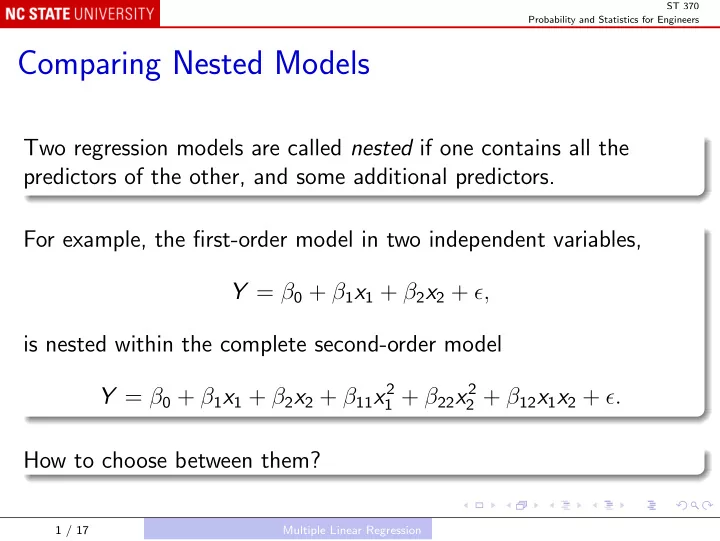

Comparing Nested Models

Two regression models are called nested if one contains all the predictors of the other, and some additional predictors. For example, the first-order model in two independent variables, $Y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \epsilon$, is nested within the complete second-order model $Y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \beta_{11} x_1^2 + \beta_{22} x_2^2 + \beta_{12} x_1 x_2 + \epsilon$.
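To make the nesting concrete, here is a minimal Python sketch using NumPy. It is an illustration, not part of the course material: the data are simulated, and the helper names (`first_order_design`, `second_order_design`, `residual_sum_of_squares`) are hypothetical. It builds the design matrix for each model, checks that the first-order columns are a subset of the second-order columns, and fits both by least squares.

```python
import numpy as np

def first_order_design(x1, x2):
    # Columns: intercept, x1, x2
    return np.column_stack([np.ones_like(x1), x1, x2])

def second_order_design(x1, x2):
    # Columns: intercept, x1, x2, x1^2, x2^2, x1*x2
    return np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])

def residual_sum_of_squares(X, y):
    # Ordinary least-squares fit, then the sum of squared residuals
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta_hat
    return float(resid @ resid)

# Simulated data (an assumption made purely for illustration)
rng = np.random.default_rng(0)
n = 50
x1 = rng.uniform(-1.0, 1.0, n)
x2 = rng.uniform(-1.0, 1.0, n)
y = 1.0 + 2.0 * x1 - 1.0 * x2 + 0.5 * x1 * x2 + rng.normal(0.0, 0.3, n)

X_reduced = first_order_design(x1, x2)
X_full = second_order_design(x1, x2)

# Nesting: the first three columns of the full design are the reduced design
nested = np.array_equal(X_full[:, :3], X_reduced)

rss_reduced = residual_sum_of_squares(X_reduced, y)
rss_full = residual_sum_of_squares(X_full, y)

print("nested:", nested)
print("RSS, first-order model: ", rss_reduced)
print("RSS, second-order model:", rss_full)
```

Because the second-order model can reproduce any first-order fit by setting $\beta_{11} = \beta_{22} = \beta_{12} = 0$, its residual sum of squares can never exceed that of the first-order model. A smaller residual sum of squares alone therefore does not show that the larger model is better.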

How to choose between them?

Multiple Linear Regression