SLIDE 1 Review

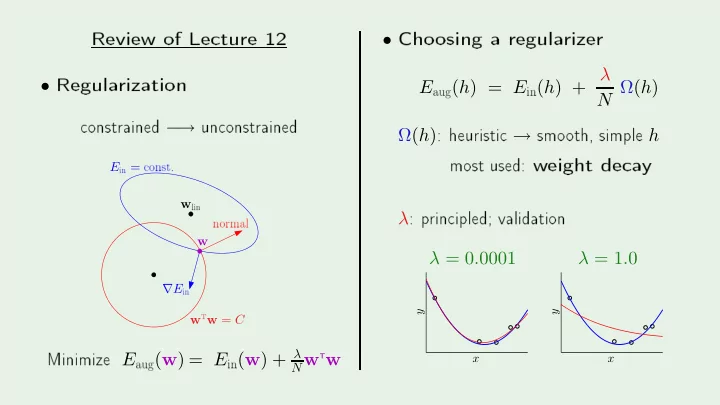

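- Regularization

constrained → unconstrained

[Figure labels: $\mathbf{w}_{\text{lin}}$, $\mathbf{w}^{\mathsf T}\mathbf{w} = C$, $E_{\text{in}} = \text{const.}$, $\nabla E_{\text{in}}$, normal]

Minimize $E_{\text{aug}}(\mathbf{w}) = E_{\text{in}}(\mathbf{w}) + \frac{\lambda}{N}\,\mathbf{w}^{\mathsf T}\mathbf{w}$

- Choosing a regularizer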

$E_{\text{aug}}(h) = E_{\text{in}}(h) + \frac{\lambda}{N}\,\Omega(h)$

$\Omega(h)$: heuristic → smooth

$\lambda$: principled; validation

[Figure: two panels plotting y against x, titled λ = 0.0001 and λ = 1.0]
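The two review points, minimizing the augmented error and picking λ by validation, can be sketched in a few lines. This is only an illustration under stated assumptions: it takes E_in to be the mean squared error of a linear model on a feature matrix `Z` (the slide does not fix the error measure), and it uses the regularizer wᵀw, for which the minimizer has a closed form. The function names, the synthetic data, the polynomial features, and the candidate list of λ values are all hypothetical.

```python
import numpy as np

def weight_decay_fit(Z, y, lam):
    """Minimize E_aug(w) = E_in(w) + (lam / N) * w^T w.

    Assumes E_in(w) = (1/N) * ||Z w - y||^2. Setting the gradient to zero
    gives the linear system (Z^T Z + lam * I) w = Z^T y.
    """
    d = Z.shape[1]
    return np.linalg.solve(Z.T @ Z + lam * np.eye(d), Z.T @ y)

def squared_error(Z, y, w):
    """Mean squared error of the linear hypothesis w on (Z, y)."""
    return float(np.mean((Z @ w - y) ** 2))

def choose_lambda(Z_train, y_train, Z_val, y_val, candidates):
    """Fit on the training split for each lam; keep the lowest validation error."""
    errors = [
        squared_error(Z_val, y_val, weight_decay_fit(Z_train, y_train, lam))
        for lam in candidates
    ]
    best = int(np.argmin(errors))
    return candidates[best], errors

# Hypothetical data: a noisy target on [-1, 1] with degree-8 polynomial features.
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, 40)
y = np.sin(np.pi * x) + 0.3 * rng.standard_normal(40)
Z = np.vander(x, 9, increasing=True)  # columns 1, x, x^2, ..., x^8

# Hold out the last 10 points for validation.
Z_train, y_train = Z[:30], y[:30]
Z_val, y_val = Z[30:], y[30:]

candidates = [0.0001, 0.01, 1.0]  # illustrative grid, not from the slide
best_lam, val_errors = choose_lambda(Z_train, y_train, Z_val, y_val, candidates)
print("validation errors:", dict(zip(candidates, val_errors)))
print("selected lambda:", best_lam)
```

A larger λ shrinks the weights and raises the in-sample error, which is the trade-off the validation set is used to settle. Which λ wins depends on the data, so no particular output is implied here.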