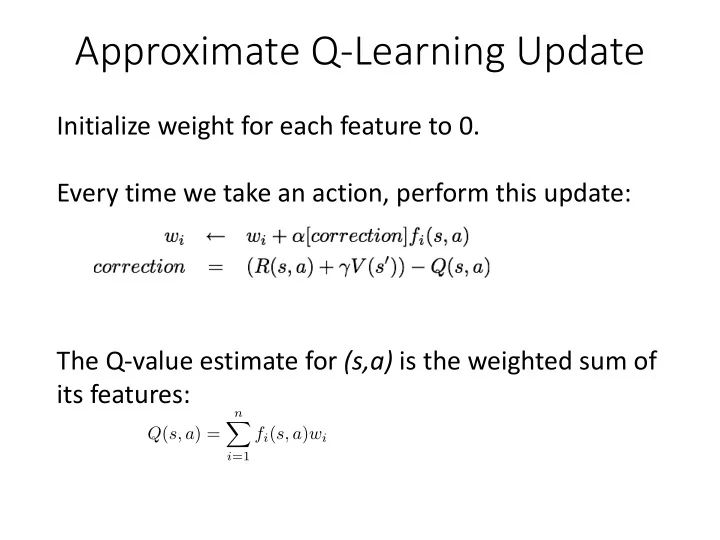

Approximate Q-Learning Update

Initialize the weight for each feature to 0. Every time we take an action, perform the approximate Q-learning update.

The Q-value estimate for (s, a) is the weighted sum of its features:

$$Q(s, a) = \sum_{i=1}^{n} f_i(s, a)\, w_i$$
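As a minimal sketch, the weighted sum can be computed as below. The update rule itself does not survive in the text, so `update_weights` assumes the standard approximate Q-learning step, w_i ← w_i + α · difference · f_i(s, a), with difference = (r + γ max Q(s', a')) − Q(s, a). The function names, the learning rate `alpha`, the discount `gamma`, and the example numbers are all hypothetical choices for illustration.

```python
from typing import List, Sequence


def q_value(features: Sequence[float], weights: Sequence[float]) -> float:
    """Q(s, a) as the weighted sum of the features f_i(s, a)."""
    return sum(f * w for f, w in zip(features, weights))


def update_weights(
    weights: Sequence[float],
    features: Sequence[float],
    reward: float,
    next_q_values: Sequence[float],
    alpha: float = 0.5,   # assumed learning rate
    gamma: float = 0.9,   # assumed discount factor
) -> List[float]:
    """One assumed standard approximate Q-learning step for a transition (s, a, r, s')."""
    # Best Q-value available from s'; treated as 0 when s' has no actions.
    max_next_q = max(next_q_values, default=0.0)
    difference = (reward + gamma * max_next_q) - q_value(features, weights)
    # Each weight moves in proportion to how active its feature was.
    return [w + alpha * difference * f for w, f in zip(weights, features)]


# Weights start at 0, as described above.
weights = [0.0, 0.0]
features = [1.0, 2.0]  # hypothetical f_1(s, a), f_2(s, a)
weights = update_weights(weights, features, reward=1.0, next_q_values=[0.0])
print(weights, q_value(features, weights))
```

Because every weight is shared across all state-action pairs with the same features, a single update changes the Q-value estimates of many pairs at once.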
