Università degli Studi di Roma “Tor Vergata” Dipartimento di Ingegneria Civile e Ingegneria Informatica

Apache Spark

Corso di Sistemi e Architetture per Big Data A.A. 2017/18 Valeria Cardellini

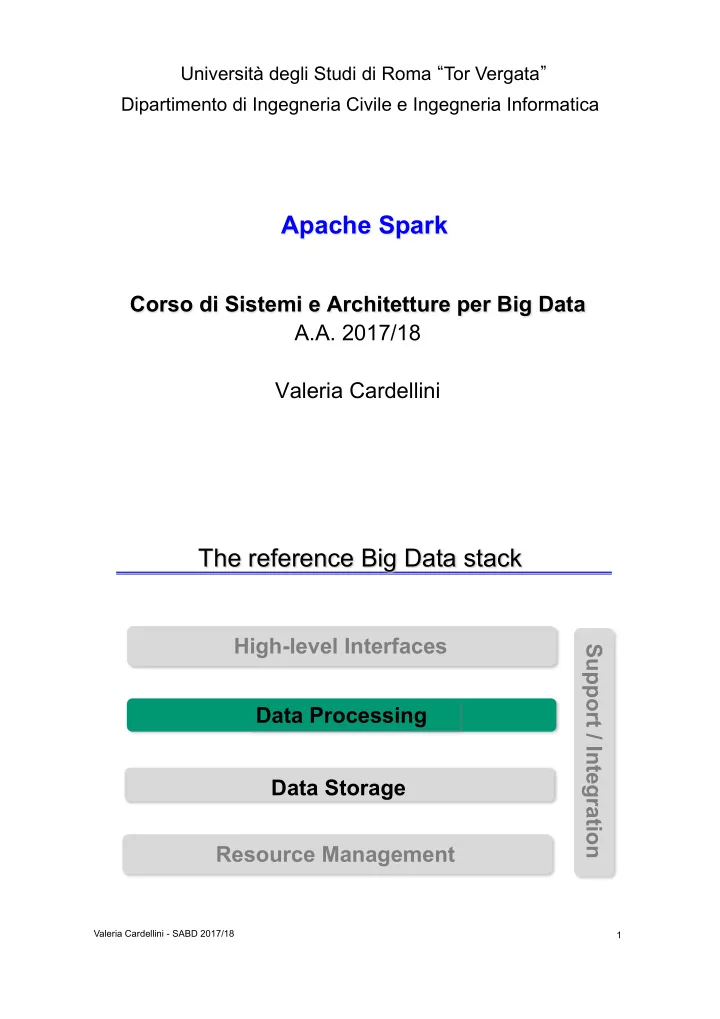

The reference Big Data stack

1

Resource Management Data Storage Data Processing High-level Interfaces Support / Integration

Valeria Cardellini - SABD 2017/18