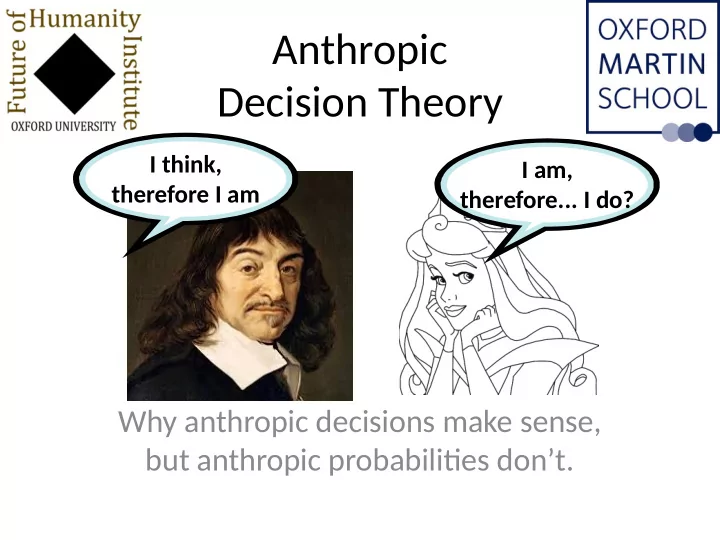

Anthropic Decision Theory

Why anthropic decisions make sense, but anthropic probabilities don’t.

I think, therefore I am. I am, therefore... I do?


Humanity on Earth implies...

...what about the universe?

[Figure: Sleeping Beauty timeline with Heads and Tails branches across Sunday, Monday and Tuesday, showing sleep and amnesia.]

Upon awakening, what is the probability of Heads? Of Monday?
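The two usual answers to this question can be seen by counting in two different ways. The sketch below is a small Monte Carlo run, assuming the standard formulation (Heads gives one awakening on Monday, Tails gives awakenings on Monday and Tuesday); the function name `simulate` and its parameters are illustrative, not from the slides.

```python
import random


def simulate(trials=100_000, seed=0):
    """Count Heads per experiment and per awakening.

    Assumption (standard formulation, not spelled out on the slide):
    Heads -> one awakening (Monday); Tails -> two (Monday and Tuesday).
    """
    rng = random.Random(seed)
    heads_experiments = 0
    awakenings = 0
    heads_awakenings = 0
    monday_awakenings = 0
    for _ in range(trials):
        heads = rng.random() < 0.5
        if heads:
            heads_experiments += 1
            awakenings += 1
            heads_awakenings += 1
            monday_awakenings += 1
        else:
            awakenings += 2          # Monday and Tuesday
            monday_awakenings += 1   # only one of the two is a Monday
    return {
        "heads_per_experiment": heads_experiments / trials,
        "heads_per_awakening": heads_awakenings / awakenings,
        "monday_per_awakening": monday_awakenings / awakenings,
    }


result = simulate()
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

Counting per experiment gives a Heads frequency near 1/2; counting per awakening gives one near 1/3. Which count is "the" probability is exactly what is in dispute.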

[Figure: Heads and Tails outcomes, each with Room 1 and Room 2.]

Upon awakening, what is the probability of Heads? Of Room 1?

SSA prefers small universes (present and future)


SIA prefers large universes (present, not future)
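One common toy model of these two preferences, sketched here under stated assumptions: each hypothesis is a total number of observers N, SSA treats my birth rank as uniform over 1..N, and SIA additionally weights each hypothesis by N. The function names, the 50/50 prior and the sizes 10 and 1000 are illustrative choices, not taken from the slides.

```python
from fractions import Fraction


def normalise(weights):
    """Rescale non-negative weights so they sum to 1."""
    total = sum(weights.values())
    return {k: v / total for k, v in weights.items()}


def ssa_posterior(prior, rank):
    """SSA: likelihood of my birth rank is 1/N if rank <= N, else 0."""
    return normalise(
        {n: p * (Fraction(1, n) if rank <= n else 0) for n, p in prior.items()}
    )


def sia_existence(prior):
    """SIA: weight each hypothesis by its number of observers."""
    return normalise({n: p * n for n, p in prior.items()})


def sia_posterior(prior, rank):
    """SIA weighting followed by the same rank update as SSA."""
    return ssa_posterior(sia_existence(prior), rank)


prior = {10: Fraction(1, 2), 1000: Fraction(1, 2)}
ssa = ssa_posterior(prior, rank=5)
sia_now = sia_existence(prior)
sia_rank = sia_posterior(prior, rank=5)

print("SSA given rank 5:", ssa)
print("SIA given existence:", sia_now)
print("SIA given rank 5:", sia_rank)
```

In this model SSA shifts almost all weight to the small universe once a low birth rank is observed, while SIA favours the large universe on existence alone and then returns to the prior once the rank is taken into account.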


I’ll bet you at odds

the trillion times bigger universe

You can’t produce enough evidence to change my mind

Psy-Kosh’s non-anthropic problem

[Figure: Heads and Tails outcomes, each with Room 1 and Room 2.]

1 decider: gain if guess heads

2 deciders: gain if both guess tails

If I say tails, she says... ...

How much do I care about her, anyway? Do I do this, together?

Evidential Decision Theory, Causal Decision Theory

Altruistic, Selfish (precommit?)

Total responsibility, Partial responsibility

SIA, SSA
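The tension in this problem can be made concrete with a small calculation. The sketch below assumes two people, a fair coin, one randomly chosen decider on Heads and two deciders on Tails, and that the other decider mirrors my choice. The payoffs `GAIN_HEADS` and `GAIN_TAILS` are hypothetical values picked to expose the conflict; the slides do not give amounts.

```python
from fractions import Fraction

HALF = Fraction(1, 2)

# Hypothetical payoffs (not given on the slides).
GAIN_HEADS = Fraction(3, 2)  # paid if the single decider guesses heads
GAIN_TAILS = Fraction(1)     # paid if both deciders guess tails

# Assumption: on Heads one of the two people is picked at random as decider.
P_DECIDER_GIVEN_HEADS = HALF
P_DECIDER_GIVEN_TAILS = Fraction(1)

# Bayesian update on "I am a decider".
p_tails = (HALF * P_DECIDER_GIVEN_TAILS) / (
    HALF * P_DECIDER_GIVEN_TAILS + HALF * P_DECIDER_GIVEN_HEADS
)


def ex_ante_value(policy):
    """Expected gain of a shared policy, before anyone learns their role."""
    return HALF * (GAIN_HEADS if policy == "heads" else GAIN_TAILS)


def updated_value(policy, partial_responsibility):
    """Expected gain as seen by a decider after updating.

    With partial responsibility, the gain in the two-decider world is
    split equally between the two deciders.
    """
    if policy == "heads":
        return (1 - p_tails) * GAIN_HEADS
    share = HALF if partial_responsibility else Fraction(1)
    return p_tails * GAIN_TAILS * share


def best(value):
    """Return the policy with the higher value."""
    return max(("heads", "tails"), key=value)


best_ex_ante = best(ex_ante_value)
best_total = best(lambda p: updated_value(p, partial_responsibility=False))
best_partial = best(lambda p: updated_value(p, partial_responsibility=True))
print(p_tails, best_ex_ante, best_total, best_partial)
```

With these numbers the ex ante best policy is "heads", the updated decider who claims the whole gain prefers "tails", and the updated decider who claims only a half share prefers "heads" again.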

[Figure: two payoffs, each labelled 1-x.]

Expected: 0.5(-x) + 0.5(1-x) × 2


Expected: 0.5(-x) + 0.5(1-x) × 1

SIA-ish, SSA-ish

Non-indexical utility

Copy-altruistic total utilitarian

Copy-altruistic average utilitarian


Expected: 0.5(-x)/2 + 0.5(1-x) × 1

Selfish (strict???)

Selfish (psychological approach)
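The three "Expected" expressions differ only in how the two outcomes are weighted, and each implies a different maximum price worth paying. The sketch below computes the break-even x for each. It assumes x is the price of a bet that pays 1 in the second outcome, and reads the trailing "2" and "1" on the slides as multipliers. The function names are illustrative.

```python
from fractions import Fraction

HALF = Fraction(1, 2)


def doubled_gain_ev(x):
    """0.5(-x) + 0.5(1-x) * 2: the gain is counted twice."""
    return HALF * (-x) + HALF * (1 - x) * 2


def single_gain_ev(x):
    """0.5(-x) + 0.5(1-x) * 1: the gain is counted once."""
    return HALF * (-x) + HALF * (1 - x) * 1


def halved_loss_ev(x):
    """0.5(-x)/2 + 0.5(1-x) * 1: the loss is halved."""
    return HALF * (-x) / 2 + HALF * (1 - x) * 1


def break_even(ev):
    """Root of a linear ev(x), from its values at x = 0 and x = 1."""
    at0, at1 = ev(Fraction(0)), ev(Fraction(1))
    return at0 / (at0 - at1)


prices = {
    "doubled gain": break_even(doubled_gain_ev),
    "single gain": break_even(single_gain_ev),
    "halved loss": break_even(halved_loss_ev),
}
for name, price in prices.items():
    print(f"{name}: buy if x < {price}")
```

The break-even prices are 2/3, 1/2 and 2/3. These match the betting odds usually associated with the thirder (SIA-like) and halfer (SSA-like) positions, although no anthropic probability was used to obtain them.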

SSA: Probability of successful hunt is high.

Average utilitarian: If average happiness is the same, the disutility of a failed hunt is less if there are more people.

Selfish + precommitment + ignorance: In the first world, Adam and Eve suffer, but I’m unlikely to be them. In the second world, Adam and Eve benefit, and I’m certain to be one of them.

SSA: Probability of doom is high. No future generations.

What kind of betting behaviour are we looking for? Prefers to consume a windfall now rather than save future generations.

Average utilitarian: if future generations are of similar average happiness, then better to consume windfall ω today than let Ω more people exist.
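The average utilitarian comparison can be checked with simple arithmetic. The sketch below assumes a present population of `n` people at happiness `h`, that the windfall adds directly to present total happiness, and that the extra people would also have happiness `h`. All names and numbers are illustrative.

```python
from fractions import Fraction


def average_if_consumed(n, h, windfall):
    """Average happiness when the present n people consume the windfall."""
    return (n * h + windfall) / n


def average_if_saved(n, h, extra_people):
    """Average happiness when extra people exist at the same happiness h."""
    return ((n + extra_people) * h) / (n + extra_people)


n = 100
h = Fraction(5)
consumed = average_if_consumed(n, h, windfall=Fraction(10))
saved = average_if_saved(n, h, extra_people=1000)
print(consumed, saved)
```

Adding people at the same average leaves the average unchanged, so any positive windfall consumed today scores higher, however large Ω is.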

SIA: The probability of the large universe is large.

Total utilitarian: in a large universe, many philosophers win their bets, and I care about them.
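A minimal sketch of why a total utilitarian bets like an SIA believer, assuming a 50/50 prior over the two universes and that every philosopher in whichever universe exists makes the same bet. The function names, the unit prize and the single philosopher in the small universe are illustrative assumptions; the factor of a trillion comes from the slides.

```python
from fractions import Fraction

HALF = Fraction(1, 2)


def total_utility_of_betting(n_small, n_large, stake, prize, p_large=HALF):
    """Summed payoff over all philosophers betting on the large universe."""
    win = p_large * n_large * prize
    loss = (1 - p_large) * n_small * stake
    return win - loss


def max_acceptable_stake(n_small, n_large, prize, p_large=HALF):
    """Stake at which the summed payoff is exactly zero."""
    return p_large * n_large * prize / ((1 - p_large) * n_small)


n_small, n_large = 1, 10**12
limit = max_acceptable_stake(n_small, n_large, prize=1)
print(limit)
```

With an even prior the bet stays worthwhile up to a stake a trillion times the prize, which is the same odds an SIA reasoner would accept, without assigning any anthropic probability.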