SLIDE 1


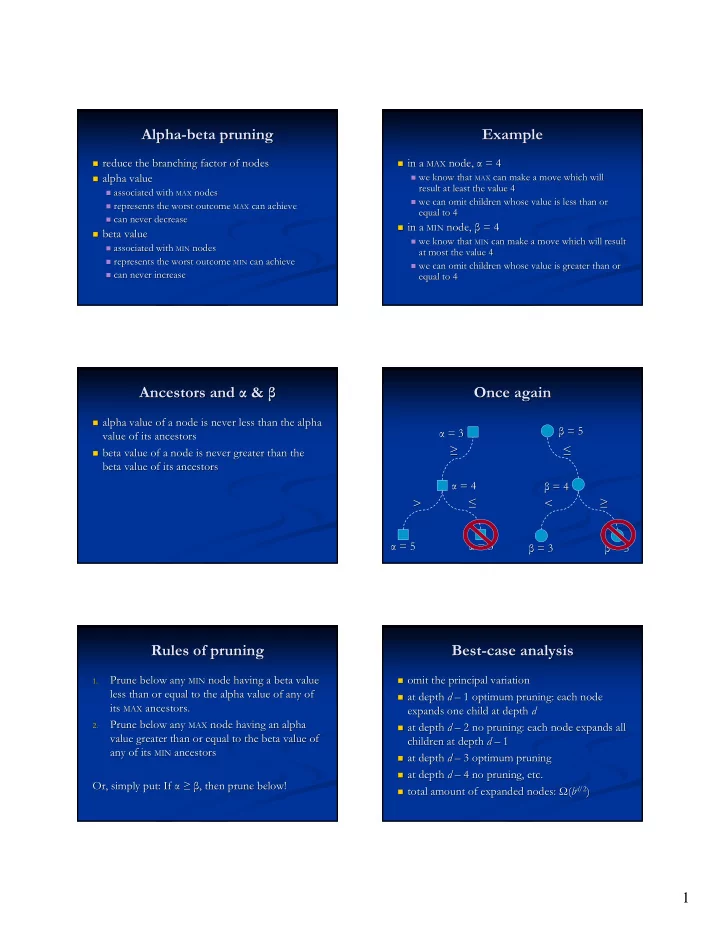

Alpha-beta pruning

- reduce the branching factor of nodes
- alpha value
  - associated with MAX nodes
  - represents the worst outcome MAX can achieve
  - can never decrease
- beta value
  - associated with MIN nodes
  - represents the worst outcome MIN can achieve
  - can never increase

Example

- in a MAX node, α = 4
  - we know that MAX can make a move which will result in at least the value 4
  - we can omit children whose value is less than or equal to 4
- in a MIN node, β = 4
  - we know that MIN can make a move which will result in at most the value 4
  - we can omit children whose value is greater than or equal to 4

Ancestors and α & β

- alpha value of a node is never less than the alpha value of its ancestors
- beta value of a node is never greater than the beta value of its ancestors
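One way to see why these two properties hold is to pass the (α, β) window down the tree during the search. The following is a minimal sketch, not taken from the slides: the nested-list tree encoding and the names `search` and `log` are assumptions made for illustration. Each inner node records the window it inherited from its ancestors and the window it ended with.

```python
import math


def search(node, alpha, beta, maximizing, log):
    """Alpha-beta over a nested-list tree (hypothetical encoding:
    a list is an inner node, a number is a leaf evaluation)."""
    if not isinstance(node, list):
        return node
    inherited = (alpha, beta)            # window handed down by the ancestors
    best = -math.inf if maximizing else math.inf
    for child in node:
        value = search(child, alpha, beta, not maximizing, log)
        if maximizing:
            best = max(best, value)
            alpha = max(alpha, best)     # alpha can only grow
        else:
            best = min(best, value)
            beta = min(beta, best)       # beta can only shrink
        if alpha >= beta:                # window is empty: stop expanding
            break
    log.append((inherited, (alpha, beta)))
    return best


tree = [[3, 5], [6, [9, 1]], [2, 8]]     # root is a MAX node
log = []
root_value = search(tree, -math.inf, math.inf, True, log)
# Every node ends with alpha >= inherited alpha and beta <= inherited beta.
narrowed = all(a1 >= a0 and b1 <= b0 for (a0, b0), (a1, b1) in log)
```

Because a child starts from its parent's current window and only ever tightens it, the window of a node is always contained in the window its ancestors had when the node was entered.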

Once again

[Figure: search-tree diagram with nodes labelled α = 3, α = 4, α = 5 and β = 3, β = 4, β = 5, annotated with the comparisons ≤, <, ≥, >]

Rules of pruning

1. Prune below any MIN node having a beta value less than or equal to the alpha value of any of its MAX ancestors.
2. Prune below any MAX node having an alpha value greater than or equal to the beta value of any of its MIN ancestors.

Or, simply put: if α ≥ β, then prune below!
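The two rules can be written down almost literally. The sketch below is an illustration with hypothetical function names, not code from the slides. It also shows the usual shortcut: if each node carries the tightest bounds of its ancestors, a single α ≥ β check at the current node covers both rules.

```python
def prune_below_min(beta, max_ancestor_alphas):
    """Rule 1: prune below a MIN node whose beta is <= the alpha
    of at least one of its MAX ancestors."""
    return any(beta <= alpha for alpha in max_ancestor_alphas)


def prune_below_max(alpha, min_ancestor_betas):
    """Rule 2: prune below a MAX node whose alpha is >= the beta
    of at least one of its MIN ancestors."""
    return any(alpha >= beta for beta in min_ancestor_betas)


def prune(alpha, beta):
    """Combined test, assuming alpha and beta already hold the tightest
    inherited bounds (largest ancestor alpha, smallest ancestor beta)."""
    return alpha >= beta
```

For example, a MIN node with β = 3 below MAX ancestors with α = 1 and α = 4 is pruned by rule 1, and `prune(max(1, 4), 3)` gives the same answer.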

Best-case analysis

- omit the principal variation
- at depth d – 1 optimum pruning: each node expands one child at depth d
- at depth d – 2 no pruning: each node expands all children at depth d – 1
- at depth d – 3 optimum pruning
- at depth d – 4 no pruning, etc.
- total number of expanded nodes: $\Omega(b^{d/2})$
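The alternating pattern above can be checked empirically. The following sketch is an illustration under stated assumptions, not part of the slides: it searches an implicit uniform tree in which the first child of every node is the best one (values are made up so that the other children are worse by 1 for the player to move) and counts the evaluated leaves with and without pruning. The function name `count_leaves` and its parameters are hypothetical.

```python
import math


def count_leaves(depth, b, value=0, maximizing=True,
                 alpha=-math.inf, beta=math.inf, prune=True):
    """Return (search value, leaves evaluated) for an implicit uniform tree
    of branching factor b whose first child is always the best move."""
    if depth == 0:
        return value, 1
    # Siblings of the best child are one unit worse for the player to move.
    worse = value - 1 if maximizing else value + 1
    best = -math.inf if maximizing else math.inf
    leaves = 0
    for i in range(b):
        child_value = value if i == 0 else worse
        v, n = count_leaves(depth - 1, b, child_value, not maximizing,
                            alpha, beta, prune)
        leaves += n
        if maximizing:
            best = max(best, v)
            alpha = max(alpha, best)
        else:
            best = min(best, v)
            beta = min(beta, best)
        if prune and alpha >= beta:      # the "alpha >= beta" rule
            break
    return best, leaves


with_pruning = count_leaves(4, 3)                  # b = 3, d = 4
without_pruning = count_leaves(4, 3, prune=False)
```

For b = 3 and d = 4 this counts 17 leaves with pruning against 3^4 = 81 without, which matches the known best-case leaf count b^⌈d/2⌉ + b^⌊d/2⌋ − 1 for perfectly ordered uniform trees and is consistent with the $\Omega(b^{d/2})$ bound.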