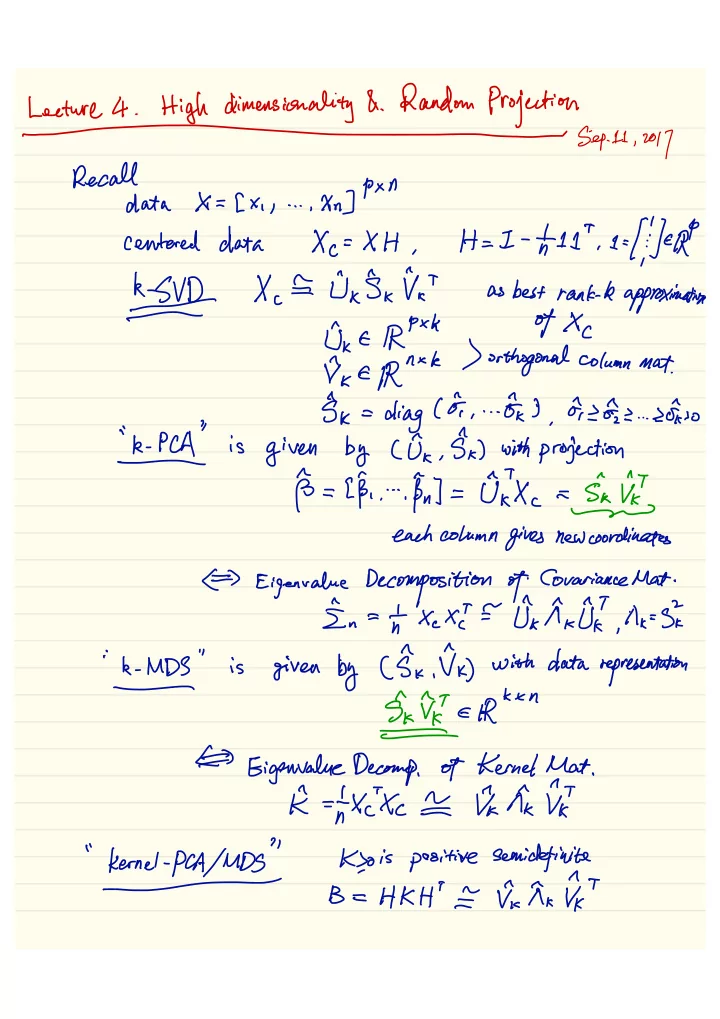

Lecture 4. High dimensionality & Random Projection - Sep 11, 2017

Recall: $p \times n$ data $X = [x_1, \ldots, x_n]$, centered data $X_c = XH$, $H = I - \frac{1}{n} \mathbf{1}\mathbf{1}^T$.
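As a quick check of the centering step, the sketch below builds $H$ explicitly and confirms that $XH$ subtracts the sample mean from every column. The helper name `centering_matrix`, the random data, and the sizes are illustrative assumptions, not part of the lecture.

```python
import numpy as np

def centering_matrix(n):
    """Return H = I - (1/n) 1 1^T, an n x n symmetric projection (hypothetical helper)."""
    return np.eye(n) - np.ones((n, n)) / n

rng = np.random.default_rng(0)   # arbitrary seed, for reproducibility only
p, n = 3, 5                      # illustrative sizes
X = rng.normal(size=(p, n))      # columns are the samples x_1, ..., x_n

H = centering_matrix(n)
Xc = X @ H                       # centered data X_c = X H

# Right-multiplying by H subtracts the sample mean from every column.
assert np.allclose(Xc, X - X.mean(axis=1, keepdims=True))
# H is a projection: H H = H.
assert np.allclose(H @ H, H)
```

In practice one would subtract the mean directly rather than form the $n \times n$ matrix $H$; the explicit form is shown only to mirror the notation.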

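SVD: $X_c \approx U_k \Sigma_k V_k^T$ as the best rank-$k$ approximation of $X_c$, where $U_k \in \mathbb{R}^{p \times k}$ and $V_k \in \mathbb{R}^{n \times k}$ are orthogonal column matrices (orthonormal columns), and $\Sigma_k = \mathrm{diag}(\sigma_i)$ with $\sigma_1 \ge \sigma_2 \ge \cdots \ge \sigma_k$.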
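The rank-$k$ truncation can be sketched with NumPy's SVD. The function name `truncated_svd` and the test data are assumptions made for illustration; the final check uses the standard fact that the Frobenius error of the best rank-$k$ approximation equals the root of the sum of the discarded squared singular values.

```python
import numpy as np

def truncated_svd(Xc, k):
    """Return (U_k, sigma_1..k, V_k) from the thin SVD of Xc (hypothetical helper)."""
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)  # s is sorted in descending order
    return U[:, :k], s[:k], Vt[:k, :].T

rng = np.random.default_rng(0)          # arbitrary seed
p, n, k = 6, 10, 2                      # illustrative sizes
X = rng.normal(size=(p, n))
Xc = X - X.mean(axis=1, keepdims=True)  # centered data, same as X H

Uk, sk, Vk = truncated_svd(Xc, k)
Xk = Uk @ np.diag(sk) @ Vk.T            # rank-k approximation U_k Sigma_k V_k^T

# Frobenius error equals the energy in the discarded singular values.
s_all = np.linalg.svd(Xc, compute_uv=False)
err = np.linalg.norm(Xc - Xk, "fro")
assert np.isclose(err, np.sqrt(np.sum(s_all[k:] ** 2)))
```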

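- PCA is given by $(U_k, \Sigma_k)$, with projection $U_k^T X_c \in \mathbb{R}^{k \times n}$, where each column gives the new coordinates of a sample.
  $\Leftrightarrow$ Eigenvalue decomposition of the covariance matrix $\hat{\Sigma}_n = \frac{1}{n} X_c X_c^T \approx U_k \Lambda_k U_k^T$, $\Lambda_k = \frac{1}{n} \Sigma_k^2$.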
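The equivalence between PCA via the SVD of $X_c$ and via the eigenvalue decomposition of the covariance matrix can be checked numerically. This is a minimal sketch on synthetic data; the row scaling is an assumption chosen only to keep the leading eigenvalues well separated, and eigenvectors are compared up to sign.

```python
import numpy as np

rng = np.random.default_rng(1)          # arbitrary seed
p, n, k = 5, 40, 2                      # illustrative sizes
scales = np.array([[5.0], [3.0], [1.0], [0.5], [0.1]])
X = rng.normal(size=(p, n)) * scales    # rows with clearly different variances
Xc = X - X.mean(axis=1, keepdims=True)  # centered data, same as X H

# Route 1: SVD of the centered data.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
Uk, sk = U[:, :k], s[:k]
Y = Uk.T @ Xc                           # k x n matrix of new coordinates

# Route 2: eigenvalue decomposition of the covariance (1/n) Xc Xc^T.
C = Xc @ Xc.T / n
evals, evecs = np.linalg.eigh(C)        # eigh returns ascending eigenvalues
order = np.argsort(evals)[::-1][:k]     # indices of the top-k eigenvalues
lam_k, W = evals[order], evecs[:, order]

# Lambda_k = (1/n) Sigma_k^2, and the eigenvectors match U_k up to sign.
assert np.allclose(lam_k, sk ** 2 / n)
assert np.allclose(np.abs(W.T @ Uk), np.eye(k), atol=1e-8)
# The projection U_k^T Xc coincides with Sigma_k V_k^T.
assert np.allclose(Y, np.diag(sk) @ Vt[:k, :])
```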

- MDS is given by $(\Sigma_k, V_k)$, with data representation $\Sigma_k V_k^T \in \mathbb{R}^{k \times n}$.
  $\Leftrightarrow$ Eigenvalue decomposition of the kernel matrix $K = \frac{1}{n} X_c^T X_c$.
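The MDS side of the duality can be sketched the same way: the top-$k$ eigenpairs of the $n \times n$ kernel matrix reproduce the PCA coordinates up to a sign flip per coordinate. The data, sizes, and variable names below are illustrative assumptions, and the $\frac{1}{n}$ scaling of $K$ follows the convention assumed in this sketch.

```python
import numpy as np

rng = np.random.default_rng(2)          # arbitrary seed
p, n, k = 4, 12, 2                      # illustrative sizes
X = rng.normal(size=(p, n))
Xc = X - X.mean(axis=1, keepdims=True)  # centered data, same as X H

# Kernel (Gram) matrix K = (1/n) Xc^T Xc, an n x n PSD matrix.
K = Xc.T @ Xc / n
evals, evecs = np.linalg.eigh(K)        # ascending eigenvalues
order = np.argsort(evals)[::-1][:k]     # top-k eigenpairs
lam_k, Vk = evals[order], evecs[:, order]

# Since lam_i = sigma_i^2 / n, the embedding Sigma_k V_k^T is sqrt(n * lam_k) V_k^T.
Z = np.diag(np.sqrt(n * lam_k)) @ Vk.T  # k x n embedding

# Compare with the PCA coordinates U_k^T Xc; rows agree up to sign.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
Y = U[:, :k].T @ Xc
assert np.allclose(np.abs(Z), np.abs(Y))
```

Working with $K$ is preferable when $n \ll p$, since the eigenproblem is then $n \times n$ instead of $p \times p$.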

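More generally ("kernel PCA/MDS"): if a kernel matrix $K$ is positive semi-definite, so is $B = HKH^T \approx V_k \Lambda_k V_k^T$, where $\Lambda_k$ is the diagonal matrix of the top $k$ eigenvalues of $B$ and $V_k$ holds the corresponding eigenvectors.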