SLIDE 20

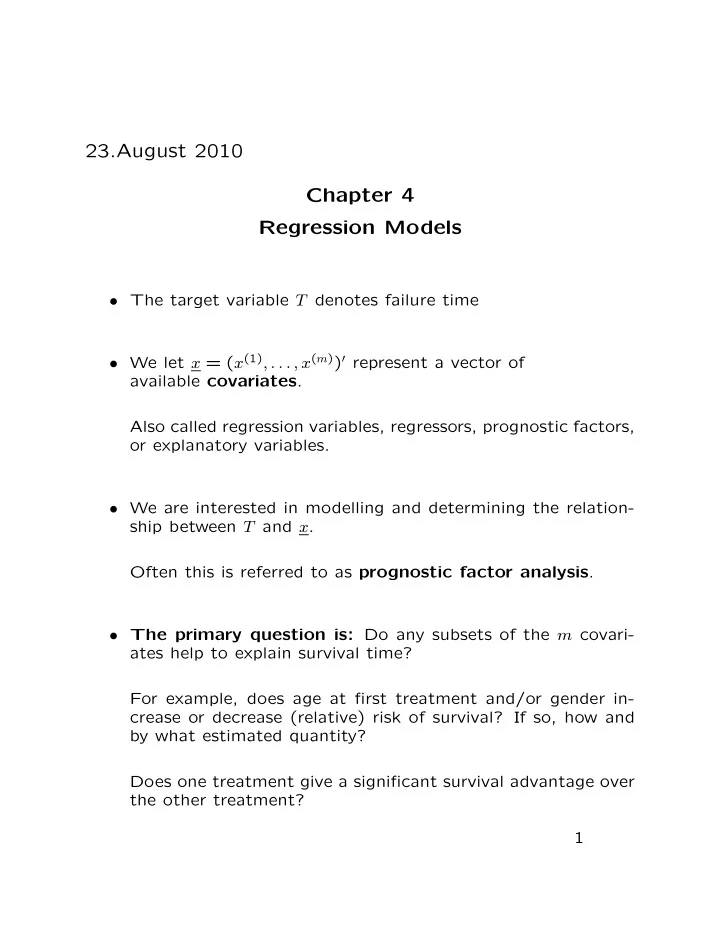

[Figure: quantile plots for the Motorette data, each with covariate x, different intercept and same slope. Panels: Weibull (standard extreme value quantiles), log-logistic (standard logistic quantiles), log-normal (standard normal quantiles). The other axis spans 5.5 to 8.5. Legend: x=2.256, c=170; x=2.159, c=190; x=2.028, c=220.]

Summary of some results:

- From summary(weib.fit), we learn that $\sigma = 0.36128$ and

  $$\tilde{\mu} = -\log(\tilde{\lambda}) = \beta_0^* + \beta_1^* x = -11.89 + 9.04x.$$

Thus $\alpha = 1/0.36128 = 2.768$ and $\tilde{\lambda} = \exp(11.89 - 9.04 \times 2.480159) = 0.0000268$ at $x = 2.480159$. Note also that both the intercept and the covariate $x$ are highly significant, with p-values $1.45 \times 10^{-9}$ and $1.94 \times 10^{-23}$, respectively.
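The arithmetic above can be re-checked with a short sketch. It is written in Python rather than in the R session that summary(weib.fit) comes from, and it uses only the rounded estimates quoted on this slide. The variable names are illustrative and not part of the original analysis.

```python
import math

# Rounded estimates quoted on the slide (taken from summary(weib.fit)).
sigma = 0.36128               # scale parameter of the log-time model
beta0, beta1 = -11.89, 9.04   # intercept and slope of mu = beta0 + beta1 * x
x = 2.480159                  # covariate value used on the slide

mu = beta0 + beta1 * x        # mu = -log(lambda)
alpha = 1.0 / sigma           # alpha = 1 / sigma
lam = math.exp(-mu)           # lambda = exp(-mu) = exp(11.89 - 9.04 * x)

print(f"alpha  = {alpha:.3f}")
print(f"mu     = {mu:.4f}")
print(f"lambda = {lam:.3e}")
```

With these rounded coefficients the computed rate agrees with the slide's 0.0000268 to about two significant figures. The small remaining gap presumably comes from the slide's value having been computed from unrounded estimates.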