11/8/2012

Compiler Design and Construction Semantic Analysis

Slides modified from Louden Book, Dr. Scherger, & Y Chung (NTHU), and Fischer, Leblanc


Any compiler must perform two major tasks:

Analysis of the source program

Synthesis of a machine-language program

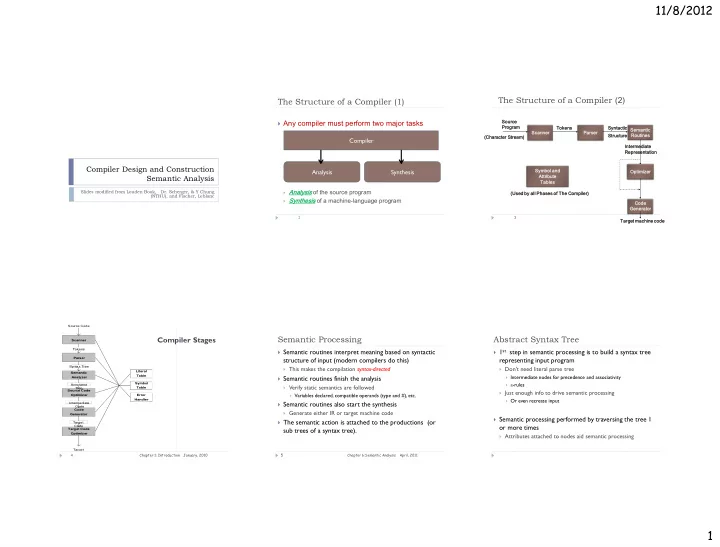

The Structure of a Compiler (1)

Figure: Compiler = Analysis + Synthesis

The Structure of a Compiler (2)


Figure: Source Program (Character Stream) -> Scanner -> Tokens -> Parser -> Syntactic Structure -> Semantic Routines -> Intermediate Representation -> Optimizer -> Code Generator -> Target machine code

Symbol and Attribute Tables (Used by all Phases of The Compiler)

Compiler Stages

January, 2010 Chapter 1: Introduction

Figure: Source Code -> Scanner -> Tokens -> Parser -> Syntax Tree -> Semantic Analyzer -> Annotated Tree -> Source Code Optimizer -> Intermediate Code -> Code Generator -> Target Code -> Target Code Optimizer -> Target Code

Supporting components: Literal Table, Symbol Table, Error Handler
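To make the first stage boundary concrete (character stream in, tokens out), here is a minimal scanner sketch. The token names, the regular expressions, and the `scan` function are assumptions chosen for illustration; they are not taken from the slides or from any particular compiler.

```python
import re

# Hypothetical token classes for a tiny expression language.
TOKEN_SPEC = [
    ("NUM", r"\d+"),
    ("ID", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/=()]"),
    ("SKIP", r"\s+"),
]
TOKEN_RE = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC)
)

def scan(source):
    """Turn a character stream into a list of (kind, text) tokens."""
    tokens = []
    pos = 0
    while pos < len(source):
        match = TOKEN_RE.match(source, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {source[pos]!r} at {pos}")
        if match.lastgroup != "SKIP":  # whitespace is discarded
            tokens.append((match.lastgroup, match.group()))
        pos = match.end()
    return tokens
```

The parser would consume this token list next; later stages never look at raw characters again.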

Semantic Processing

April, 2011 Chapter 6: Semantic Analysis

Semantic routines interpret meaning based on the syntactic structure of the input (modern compilers do this)

This makes the compilation syntax-directed

Semantic routines finish the analysis

Verify that static semantics are followed

Variables declared, compatible operands (type and number), etc.

Semantic routines also start the synthesis

Generate either IR or target machine code

Semantic actions are attached to the productions (or to subtrees of a syntax tree).
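The static checks listed above (variables declared, compatible operands) can be sketched as one small routine per kind of subtree. The tuple-based node shapes, the `symbols` table, and the rule that both operands of `add` must have the same type are all assumptions made for this example, not rules from the slides.

```python
class SemanticError(Exception):
    """Raised when a static-semantic rule is violated."""

def check_expr(node, symbols):
    """Return the type of an expression subtree, or raise SemanticError.

    node is a tuple: ("num", 3), ("var", "x"), or ("add", left, right).
    symbols maps each declared variable name to its type name.
    """
    kind = node[0]
    if kind == "num":
        return "int" if isinstance(node[1], int) else "float"
    if kind == "var":
        name = node[1]
        if name not in symbols:  # rule: variables must be declared
            raise SemanticError(f"undeclared variable: {name}")
        return symbols[name]
    if kind == "add":
        left = check_expr(node[1], symbols)
        right = check_expr(node[2], symbols)
        if left != right:  # rule: operands must be compatible
            raise SemanticError(f"incompatible operands: {left} + {right}")
        return left
    raise SemanticError(f"unknown node kind: {kind}")
```

Each branch plays the role of the semantic action for one production, which is what makes the processing syntax-directed.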

Abstract Syntax Tree

The first step in semantic processing is to build a syntax tree representing the input program

Don't need literal parse tree

Intermediate nodes for precedence and associativity e-rules

Just enough info to drive semantic processing

Or even recreate input

Semantic processing is performed by traversing the tree 1 or more times

Attributes attached to nodes aid semantic processing
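As a rough illustration of these points, the sketch below builds an abstract syntax tree for `a + b * 2` by hand, then traverses it twice: once to attach a `type` attribute to each node, and once to recreate the input. The `Node` class, the `attrs` dictionary, and the int/float promotion rule are assumptions made for this example only.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    op: str                    # "num", "var", "+", or "*"
    value: object = None       # literal value or variable name for leaves
    children: list = field(default_factory=list)
    attrs: dict = field(default_factory=dict)  # attributes added by passes

def annotate_types(node, symbols):
    """Pass 1: post-order traversal that attaches a 'type' attribute."""
    for child in node.children:
        annotate_types(child, symbols)
    if node.op == "num":
        node.attrs["type"] = "int"
    elif node.op == "var":
        node.attrs["type"] = symbols[node.value]
    else:
        kinds = {c.attrs["type"] for c in node.children}
        node.attrs["type"] = "float" if "float" in kinds else "int"

def unparse(node):
    """Pass 2: recreate a fully parenthesized form of the input."""
    if node.op in ("num", "var"):
        return str(node.value)
    left, right = (unparse(c) for c in node.children)
    return f"({left} {node.op} {right})"

# a + b * 2: precedence is captured by tree shape alone, with no
# intermediate nodes such as 'term' or 'factor'.
tree = Node("+", children=[
    Node("var", "a"),
    Node("*", children=[Node("var", "b"), Node("num", 2)]),
])
annotate_types(tree, {"a": "int", "b": "float"})
```

A literal parse tree for the same expression would carry extra nodes for each grammar nonterminal; here the two operator nodes and three leaves are enough to drive both passes.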