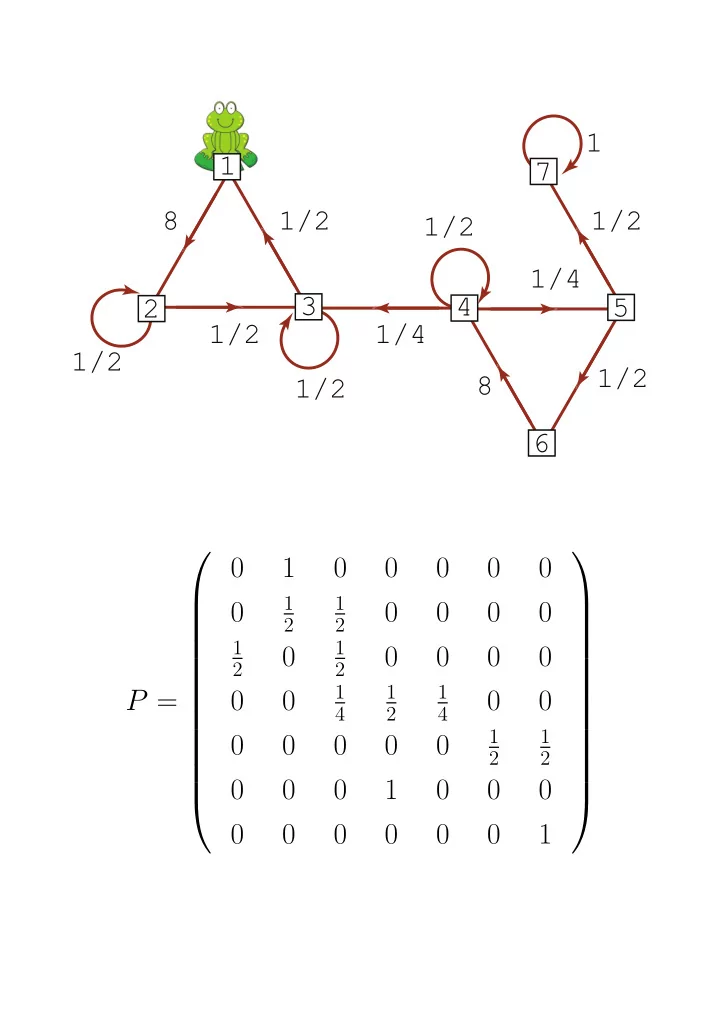

[Figure: transition diagram on states 1 to 8 with edge probabilities 1, 1/2 and 1/4, shown alongside its transition matrix P.]

n

1/5 2/5 2/5
1/5 2/5 2/5
1/5 2/5 2/5
2/15 4/15 4/15 1/3
1/15 2/15 2/15 2/3
2/15 4/15 4/15 1/3

1/2 1/2

1/3 1/3 1/3 1/2 1/2

1/2 1/4 1/4 1/3 1/3 1/3 1/3 2/3

$$\tfrac{1}{2}h_1 + \tfrac{1}{4}h_2 + \tfrac{1}{4}h_3$$

$$\tfrac{1}{3}h_1 + \tfrac{1}{3}h_3 + \tfrac{1}{3}h_4$$

$$\tfrac{1}{3}h_3 + \tfrac{2}{3}h_5$$

1/2 1/4 1/4 1/3 1/3 1/3 1/3 2/3


X Y Z

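$$P^n = \begin{pmatrix} \dfrac{\beta}{\alpha+\beta} + \dfrac{\alpha}{\alpha+\beta}(1-\alpha-\beta)^n & \dfrac{\alpha}{\alpha+\beta} - \dfrac{\alpha}{\alpha+\beta}(1-\alpha-\beta)^n \\[2ex] \dfrac{\beta}{\alpha+\beta} - \dfrac{\beta}{\alpha+\beta}(1-\alpha-\beta)^n & \dfrac{\alpha}{\alpha+\beta} + \dfrac{\beta}{\alpha+\beta}(1-\alpha-\beta)^n \end{pmatrix}$$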

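$$\begin{pmatrix} \dfrac{\beta}{\alpha+\beta} & \dfrac{\alpha}{\alpha+\beta} \\[2ex] \dfrac{\beta}{\alpha+\beta} & \dfrac{\alpha}{\alpha+\beta} \end{pmatrix}$$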
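The closed form for the n-step transition probabilities of a two-state chain can be checked numerically. The following is a minimal sketch, assuming the usual parameterization P = [[1 − α, α], [β, 1 − β]], which the surviving text does not state. The values of `alpha`, `beta` and `n` and all function names are hypothetical, chosen only for illustration.

```python
def mat_mul(a, b):
    """Multiply two square matrices given as nested lists."""
    size = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(size)) for j in range(size)]
            for i in range(size)]


def mat_pow(p, n):
    """Compute the n-th power of p by repeated multiplication (n >= 0)."""
    size = len(p)
    result = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = mat_mul(result, p)
    return result


def two_state_closed_form(alpha, beta, n):
    """Closed-form n-step matrix, assuming P = [[1-alpha, alpha], [beta, 1-beta]]."""
    s = alpha + beta
    r = (1 - alpha - beta) ** n
    return [[beta / s + alpha / s * r, alpha / s - alpha / s * r],
            [beta / s - beta / s * r, alpha / s + beta / s * r]]


# Hypothetical parameter values, not taken from the source.
alpha, beta, n = 0.3, 0.5, 7
P = [[1 - alpha, alpha], [beta, 1 - beta]]

numeric = mat_pow(P, n)
closed = two_state_closed_form(alpha, beta, n)
max_err = max(abs(numeric[i][j] - closed[i][j])
              for i in range(2) for j in range(2))
print(max_err < 1e-12)
```

Since |1 − α − β| < 1 for these values, the correction term decays geometrically in n and both rows approach (β/(α+β), α/(α+β)).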

1/2 1/2

1

1/2 1/2 1/3 1/3 1/3 1/3 1/3 1/3

1

1/3 1/3 1/3 1/2 1/2

$$+\,(0.15)\,\frac{1}{8}\begin{pmatrix} 1 & 1 & \cdots & 1 \\ 1 & 1 & \cdots & 1 \\ \vdots & \vdots & \ddots & \vdots \\ 1 & 1 & \cdots & 1 \end{pmatrix}_{8 \times 8}$$

$$\begin{pmatrix}
0.065 & 0.092 & 0.045 & 0.098 & 0.11 & 0.18 & 0.15 & 0.26 \\
0.060 & 0.094 & 0.047 & 0.095 & 0.11 & 0.19 & 0.17 & 0.24 \\
0.064 & 0.092 & 0.045 & 0.098 & 0.11 & 0.18 & 0.15 & 0.26 \\
0.066 & 0.091 & 0.044 & 0.099 & 0.11 & 0.18 & 0.15 & 0.26 \\
0.065 & 0.092 & 0.045 & 0.098 & 0.11 & 0.18 & 0.15 & 0.26 \\
0.057 & 0.095 & 0.049 & 0.095 & 0.10 & 0.20 & 0.17 & 0.23 \\
0.060 & 0.094 & 0.047 & 0.096 & 0.11 & 0.19 & 0.16 & 0.24 \\
0.068 & 0.090 & 0.043 & 0.10 & 0.12 & 0.17 & 0.14 & 0.27
\end{pmatrix}$$

$$\begin{pmatrix}
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25 \\
0.063 & 0.093 & 0.046 & 0.097 & 0.11 & 0.18 & 0.16 & 0.25
\end{pmatrix}$$
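The term with weight 0.15 and factor 1/8 applied to an all-ones matrix, followed by a matrix power whose rows become identical, suggests a uniform-jump (PageRank-style) modification of a transition matrix. The sketch below illustrates that effect under stated assumptions: the complementary weight 0.85 on the original matrix is inferred rather than shown in the surviving text, and the three-state cyclic chain `P` and the function names are hypothetical stand-ins for the eight-state example.

```python
def damped(p, weight=0.15):
    """Mix p with the uniform-jump matrix: (1 - weight) * p + weight * (1/n) * ones."""
    n = len(p)
    return [[(1 - weight) * p[i][j] + weight / n for j in range(n)]
            for i in range(n)]


def mat_mul(a, b):
    """Multiply two square matrices given as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def mat_pow(p, steps):
    """Compute the steps-th power of p by repeated multiplication."""
    n = len(p)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(steps):
        result = mat_mul(result, p)
    return result


# Hypothetical periodic chain: its powers cycle forever and never converge.
P = [[0.0, 1.0, 0.0],
     [0.0, 0.0, 1.0],
     [1.0, 0.0, 0.0]]

G = damped(P)            # every entry of G is strictly positive
Gn = mat_pow(G, 200)

# Largest difference between any two rows, taken column by column.
spread = max(max(row[j] for row in Gn) - min(row[j] for row in Gn)
             for j in range(len(Gn)))
print(spread < 1e-9)
```

Mixing in the uniform-jump term makes every entry positive, so the powers of the damped matrix converge to a matrix with identical rows even though the powers of `P` itself do not.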

k = 1.

$(\gamma^k_i : i \in I)$ satisfies $\gamma^k P = \gamma^k$.

i < 1 for all i.

1 2 1 4 1 4 1 4 0 1 2 1 4 1 4 1 4 0 1 2 1 2 1 4 1 4 0

$p^{(n)}_{11},\ p^{(n)}_{12},\ p^{(n)}_{13},\ p^{(n)}_{14}$

$p^{(n)}_{1i} \log_2 p^{(n)}_{1i}$

2 3 3 4 4 4 6 6 6 8 8 8 8 6 4 4

n=300 in a simulation of an
