
Workshop 7.4a: Single factor ANOVA

Murray Logan

November 23, 2016

Table of contents

1. Revision
2. Anova Parameterization
3. Partitioning of variance (ANOVA)
4. Worked Examples

1. Revision

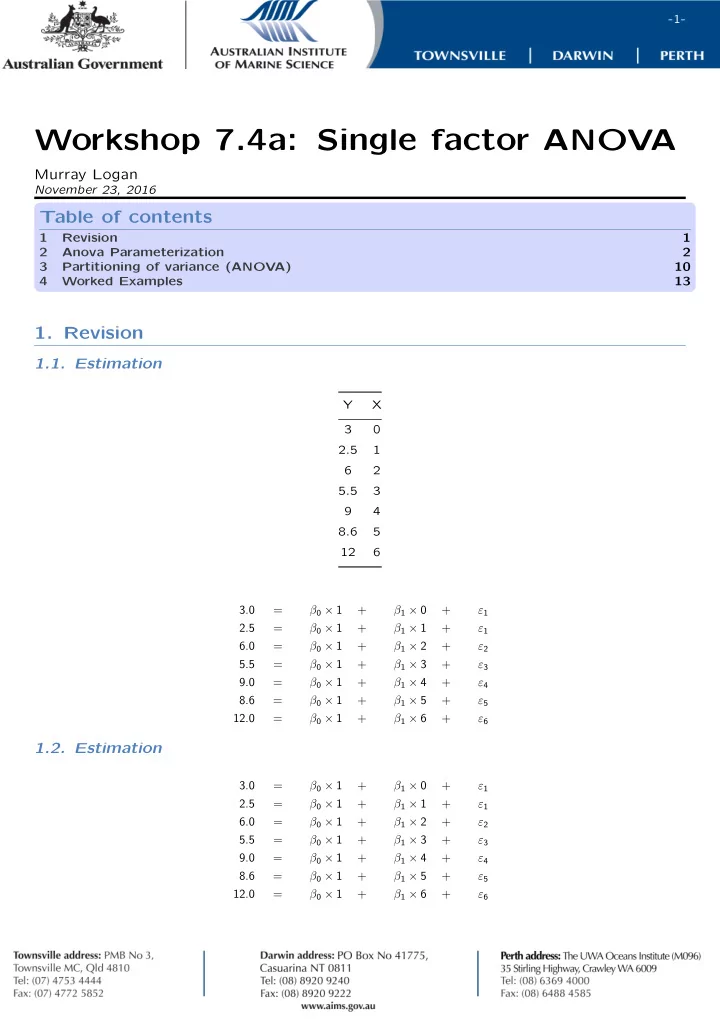

1.1. Estimation

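| Y | X |
|------|---|
| 3.0 | 0 |
| 2.5 | 1 |
| 6.0 | 2 |
| 5.5 | 3 |
| 9.0 | 4 |
| 8.6 | 5 |
| 12.0 | 6 |

Each observation can be written as a linear combination of the intercept $\beta_0$, the slope $\beta_1$ and a residual $\varepsilon_i$:

$$
\begin{aligned}
3.0 &= \beta_0 \times 1 + \beta_1 \times 0 + \varepsilon_1 \\
2.5 &= \beta_0 \times 1 + \beta_1 \times 1 + \varepsilon_2 \\
6.0 &= \beta_0 \times 1 + \beta_1 \times 2 + \varepsilon_3 \\
5.5 &= \beta_0 \times 1 + \beta_1 \times 3 + \varepsilon_4 \\
9.0 &= \beta_0 \times 1 + \beta_1 \times 4 + \varepsilon_5 \\
8.6 &= \beta_0 \times 1 + \beta_1 \times 5 + \varepsilon_6 \\
12.0 &= \beta_0 \times 1 + \beta_1 \times 6 + \varepsilon_7
\end{aligned}
$$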
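As a rough illustration of what "estimation" means for the seven observations above, the sketch below computes the ordinary least squares estimates of $\beta_0$ and $\beta_1$ from the closed-form formulas for a straight line. The workshop itself does not show this code; the variable names (`beta0`, `beta1`, `residuals`) are illustrative assumptions, and plain Python is used only to keep the example self-contained.

```python
# Seven (X, Y) observations from the Estimation slide.
x = [0, 1, 2, 3, 4, 5, 6]
y = [3.0, 2.5, 6.0, 5.5, 9.0, 8.6, 12.0]

n = len(x)
x_mean = sum(x) / n
y_mean = sum(y) / n

# Closed-form ordinary least squares estimates for a straight line:
#   beta1 = Sxy / Sxx,  beta0 = mean(y) - beta1 * mean(x)
sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
sxx = sum((xi - x_mean) ** 2 for xi in x)
beta1 = sxy / sxx
beta0 = y_mean - beta1 * x_mean

# One residual per observation: the part of y not explained by the line.
residuals = [yi - (beta0 + beta1 * xi) for xi, yi in zip(x, y)]

print(f"beta0 = {beta0:.3f}, beta1 = {beta1:.3f}")
```

With these data the estimates come out at roughly $\beta_0 \approx 2.136$ and $\beta_1 \approx 1.507$, and the residuals sum to zero up to floating-point error, as expected for a least squares fit that includes an intercept.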

1.2. Estimation

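$$
\begin{aligned}
3.0 &= \beta_0 \times 1 + \beta_1 \times 0 + \varepsilon_1 \\
2.5 &= \beta_0 \times 1 + \beta_1 \times 1 + \varepsilon_2 \\
6.0 &= \beta_0 \times 1 + \beta_1 \times 2 + \varepsilon_3 \\
5.5 &= \beta_0 \times 1 + \beta_1 \times 3 + \varepsilon_4 \\
9.0 &= \beta_0 \times 1 + \beta_1 \times 4 + \varepsilon_5 \\
8.6 &= \beta_0 \times 1 + \beta_1 \times 5 + \varepsilon_6 \\
12.0 &= \beta_0 \times 1 + \beta_1 \times 6 + \varepsilon_7
\end{aligned}
$$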