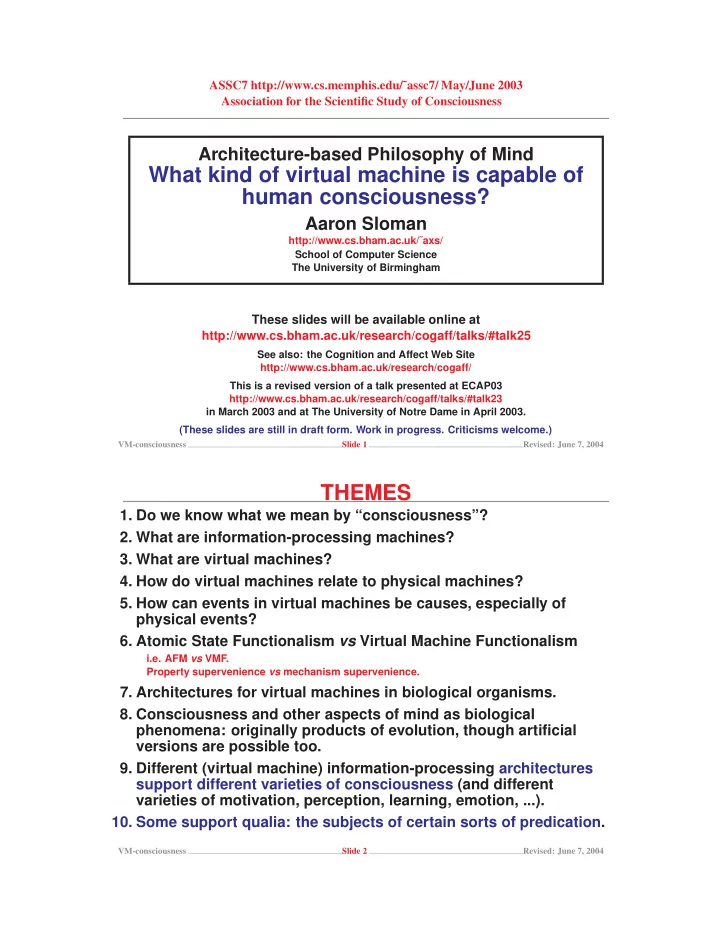

ASSC7 http://www.cs.memphis.edu/~assc7/ May/June 2003 Association for the Scientific Study of Consciousness

Architecture-based Philosophy of Mind

What kind of virtual machine is capable of human consciousness?

Aaron Sloman

http://www.cs.bham.ac.uk/~axs/ School of Computer Science, The University of Birmingham

These slides will be available online at http://www.cs.bham.ac.uk/research/cogaff/talks/#talk25

See also: the Cognition and Affect Web Site http://www.cs.bham.ac.uk/research/cogaff/

This is a revised version of a talk presented at ECAP03 (http://www.cs.bham.ac.uk/research/cogaff/talks/#talk23) in March 2003 and at The University of Notre Dame in April 2003. (These slides are still in draft form. Work in progress. Criticisms welcome.)

VM-consciousness Slide 1 Revised: June 7, 2004

THEMES

- 1. Do we know what we mean by “consciousness”?

- 2. What are information-processing machines?

- 3. What are virtual machines?

- 4. How do virtual machines relate to physical machines?

- 5. How can events in virtual machines be causes, especially of physical events?

- 6. Atomic State Functionalism vs Virtual Machine Functionalism, i.e. ASF vs VMF. Property supervenience vs mechanism supervenience.

- 7. Architectures for virtual machines in biological organisms.

- 8. Consciousness and other aspects of mind as biological phenomena: originally products of evolution, though artificial versions are possible too.

- 9. Different (virtual machine) information-processing architectures support different varieties of consciousness (and different varieties of motivation, perception, learning, emotion, ...).

- 10. Some of these architectures support qualia: the subjects of certain sorts of predication.
