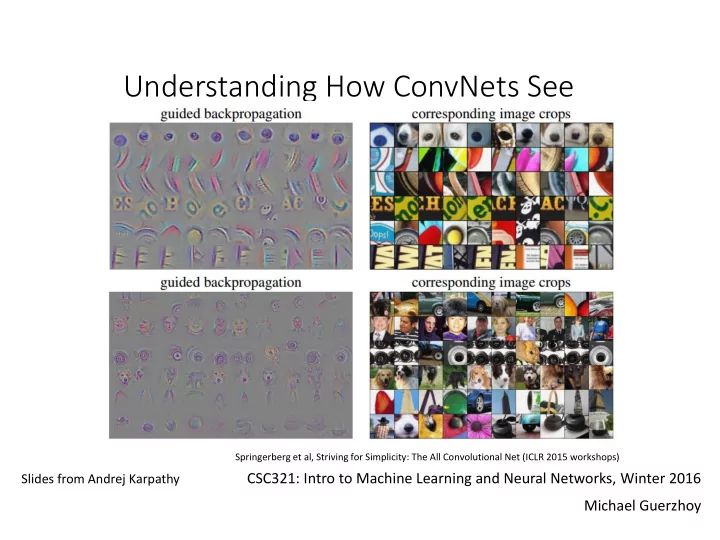

Understanding How ConvNets See

CSC321: Intro to Machine Learning and Neural Networks, Winter 2016. Michael Guerzhoy

Slides from Andrej Karpathy

Springenberg et al, Striving for Simplicity: The All Convolutional Net (ICLR 2015 workshops)

Cybernetics 1980)

Example weights for one neuron of a fully-connected, single-hidden-layer network for faces

Weights for 9 features in the first convolutional layer of a network for classifying ImageNet images
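Pictures of first-layer filters like these are made by treating each filter's weights as a tiny image. Below is a minimal NumPy sketch, assuming the weights are stored as `(num_filters, channels, kh, kw)` (an assumed layout, not one given in the slides); `filters_to_grid` is a hypothetical helper that rescales each filter to [0, 1] and tiles the filters into one grid.

```python
import numpy as np

def filters_to_grid(weights, ncols=3, pad=1):
    """Tile conv filters into a single image for display.

    weights: array of shape (num_filters, channels, kh, kw) (assumed layout).
    Returns an array of shape (H, W, channels) with values in [0, 1];
    the padding between filters is left white (1.0).
    """
    n, c, kh, kw = weights.shape
    nrows = int(np.ceil(n / ncols))
    grid = np.ones((nrows * (kh + pad) - pad, ncols * (kw + pad) - pad, c))
    for i in range(n):
        f = weights[i].transpose(1, 2, 0)        # (channels, kh, kw) -> (kh, kw, channels)
        lo, hi = f.min(), f.max()
        f = (f - lo) / (hi - lo + 1e-8)          # rescale this filter on its own to [0, 1]
        r, col = divmod(i, ncols)                # position of filter i in the grid
        top, left = r * (kh + pad), col * (kw + pad)
        grid[top:top + kh, left:left + kw] = f
    return grid

rng = np.random.default_rng(0)
w = rng.normal(size=(9, 3, 11, 11))  # hypothetical: 9 RGB filters of size 11x11
img = filters_to_grid(w)             # a 3x3 grid, ready for e.g. an image viewer
```

For the fully-connected face network, the same idea is simpler: one hidden unit's weight vector has one entry per input pixel, so it can be reshaped to the input image size and displayed directly.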

Zeiler and Fergus, “Visualizing and Understanding Convolutional Networks”

For each feature, find the 9 images that produce the highest activations for that neuron, and crop out the relevant patch of each image.
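The top-9 procedure can be sketched as follows, assuming the activations of one feature map have already been computed for a set of images. The mapping from a feature-map position back to an input patch depends on the network; here it is approximated with a hypothetical `stride` and receptive-field size `rf`, which are illustration parameters only.

```python
import numpy as np

def top_k_patches(images, acts, k=9, stride=4, rf=11):
    """Return the k images (and patches) that most strongly excite one feature.

    images: (N, H_img, W_img, C) input images.
    acts:   (N, H_map, W_map) activations of this feature at every position.
    stride, rf: hypothetical stride and receptive-field size used to map a
                feature-map position back to a patch of the input.
    """
    n = acts.shape[0]
    flat = acts.reshape(n, -1)
    best_pos = flat.argmax(axis=1)            # strongest position in each image
    best_val = flat[np.arange(n), best_pos]   # activation value at that position
    order = np.argsort(-best_val)[:k]         # k images with the highest activations
    patches = []
    for i in order:
        r, c = np.unravel_index(best_pos[i], acts.shape[1:])
        top, left = r * stride, c * stride    # approximate patch location in the input
        patches.append(images[i, top:top + rf, left:left + rf])
    return order, patches

rng = np.random.default_rng(0)
images = rng.random((100, 64, 64, 3))  # hypothetical image set
acts = rng.random((100, 14, 14))       # hypothetical activations of one feature
idx, patches = top_k_patches(images, acts)
```

Ranking images by their single strongest position (rather than, say, the mean activation) matches the idea of finding where the neuron fires hardest.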

$$\frac{\partial\, \text{neuron}}{\partial x_i}$$

input x
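The gradient of one neuron with respect to every input pixel $x_i$ is obtained by ordinary backpropagation. A minimal sketch, assuming a hypothetical two-layer fully-connected ReLU network (not the network from the slides) so that every step is explicit:

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0)

def neuron_and_grad(x, W1, w2):
    """Hypothetical net: neuron = relu(w2 . relu(W1 x)).

    Returns the neuron's activation and d(neuron)/d(x_i) for every input i.
    """
    z1 = W1 @ x           # first-layer pre-activations
    h = relu(z1)
    z2 = w2 @ h           # pre-activation of the neuron we are visualizing
    neuron = relu(z2)
    # Backward pass: through the neuron's ReLU, then w2, then the first ReLU, then W1.
    g_z2 = float(z2 > 0)
    g_h = g_z2 * w2
    g_z1 = g_h * (z1 > 0)
    g_x = W1.T @ g_z1
    return neuron, g_x

rng = np.random.default_rng(0)
x = rng.random(16)                 # hypothetical 4x4 "image", flattened
W1 = rng.normal(size=(8, 16))
w2 = rng.normal(size=8)
neuron, g = neuron_and_grad(x, W1, w2)
saliency = g.reshape(4, 4)         # view the gradient as an image
```

Reshaping the gradient back to the input's shape is what turns it into a picture of which pixels the neuron is most sensitive to.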

At each layer: compute the gradient, zero out the negatives, and backpropagate (repeated layer by layer down to the input)

Backprop vs. Guided Backprop

Springenberg et al, Striving for Simplicity: The All Convolutional Net (ICLR 2015 workshops)
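The only difference between backprop and guided backprop is the rule for passing the gradient through each ReLU. A minimal sketch, assuming a hypothetical stack of fully-connected ReLU layers given as a list of weight matrices `Ws`: ordinary backprop passes the gradient wherever the forward input was positive, while guided backprop additionally zeroes out negative gradients.

```python
import numpy as np

def relu_backward(grad_out, z, guided=False):
    """Gradient through a ReLU whose forward input was z."""
    mask = z > 0                          # ordinary backprop: where the ReLU was active
    if guided:
        mask = mask & (grad_out > 0)      # guided: also drop negative gradients
    return np.where(mask, grad_out, 0.0)

def input_gradient(x, Ws, unit=0, guided=False):
    """Gradient of output `unit` of the last ReLU layer w.r.t. the input x."""
    zs, h = [], x
    for W in Ws:                          # forward pass, remembering pre-activations
        z = W @ h
        zs.append(z)
        h = np.maximum(z, 0)
    g = np.zeros_like(h)
    g[unit] = 1.0                         # start from the chosen neuron
    for W, z in zip(reversed(Ws), reversed(zs)):
        g = relu_backward(g, z, guided)   # through the ReLU
        g = W.T @ g                       # through the linear layer
    return g

# Tiny example: the second layer has one negative weight.
Ws = [np.eye(2), np.array([[2.0, -1.0]])]
x = np.array([1.0, 1.0])
plain = input_gradient(x, Ws)                 # values 2 and -1
guided = input_gradient(x, Ws, guided=True)   # values 2 and 0
```

Because negative contributions are discarded at every ReLU, guided backprop keeps only the input pixels that increase the neuron's activation, which is why its visualizations look cleaner than plain gradients.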

Yosinski et al, Understanding Neural Networks Through Deep Visualization (ICML 2015)