A Tale of Two Evaluat ions

Donna Harman

Sponsored by: NI ST, ARDA, DARPA

TREC and RI A

1992 1993 1994 1995 1996 1997 1998 1999 2000 2001 2002 2003

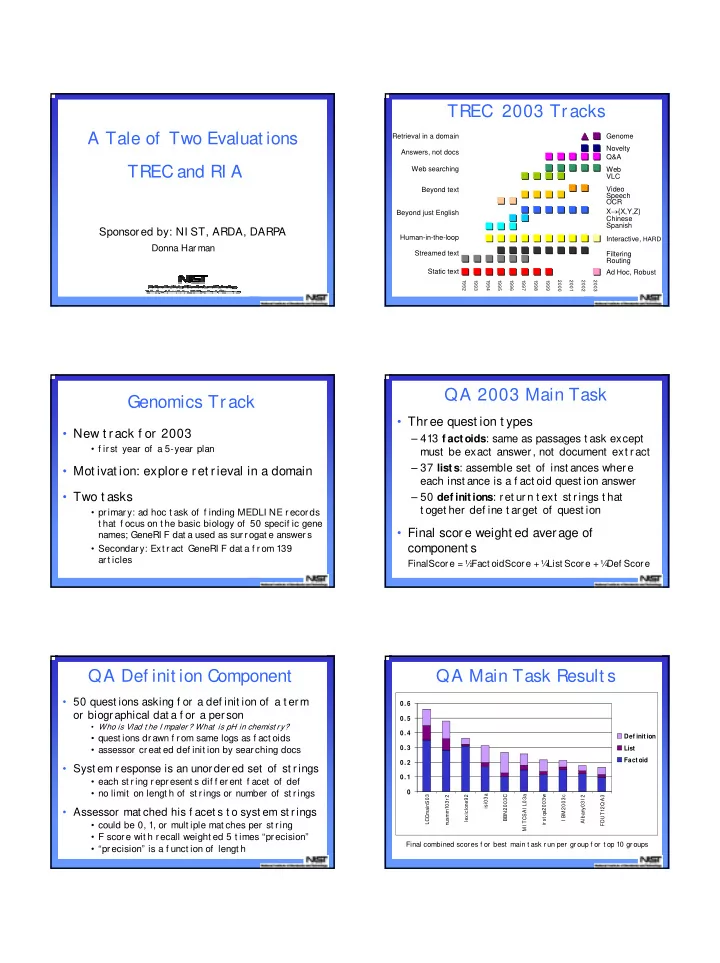

TREC 2003 Tracks

Retrieval in a domain Answers, not docs Web searching Beyond text Beyond just English Human-in-the-loop Streamed text Static text Ad Hoc, Robust Interactive, HARD X→{X,Y,Z} Chinese Spanish Video Speech OCR Web VLC Novelty Q&A Filtering Routing Genome

Genomics Track

- New t rack f or 2003

- f irst year of a 5-year plan

- Mot ivat ion: explore ret rieval in a domain

- Two t asks

- primary: ad hoc t ask of f inding MEDLI NE records

t hat f ocus on t he basic biology of 50 specif ic gene names; GeneRI F dat a used as surrogat e answers

- Secondary: Ext ract GeneRI F dat a f rom 139

art icles

QA 2003 Main Task

- Three quest ion t ypes

– 413 f actoids: same as passages t ask except must be exact answer, not document ext ract – 37 lists: assemble set of inst ances where each inst ance is a f act oid quest ion answer – 50 def initions: ret urn t ext st rings t hat t oget her def ine t arget of quest ion

- Final score weight ed average of

component s

FinalScore = ½ Fact oidScore + ¼ List Score + ¼ Def Score

QA Def init ion Component

- 50 quest ions asking f or a def init ion of a t erm

- r biographical dat a f or a person

- Who is Vlad t he I mpaler? What is pH in chemist ry?

- quest ions drawn f rom same logs as f act oids

- assessor creat ed def init ion by searching docs

- Syst em response is an unordered set of st rings

- each st ring represent s dif f erent f acet of def

- no limit on lengt h of st rings or number of st rings

- Assessor mat ched his f acet s t o syst em st rings

- could be 0, 1, or mult iple mat ches per st ring

- F score wit h recall weight ed 5 t imes “precision”

- “precision” is a f unct ion of lengt h

QA Main Task Result s

- 0. 1

- 0. 2

- 0. 3

- 0. 4

- 0. 5

- 0. 6

LCCmainS03 nusmm103r 2 lexiclone92 isi03a BBN2003C MI TCSAI L03a ir st qa2003w I BM2003c Albany03I 2 FDUT12Q A3

Def init ion List Fact oid Final combined scor es f or best main t ask r un per gr oup f or t op 10 gr oups