
Data Mining for Knowledge Management


The K-Medoids Clustering Method

Find representative objects, called medoids, in clusters
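As an illustration of what "representative object" means, a medoid can be taken as the cluster member whose total distance to the other members is smallest. The following minimal Python sketch assumes Euclidean distance; the function name `medoid` and the sample points are illustrative, not from the source:

```python
import math

def medoid(points):
    """Return the member of `points` with the smallest total Euclidean
    distance to all members (the cluster's medoid)."""
    return min(points, key=lambda p: sum(math.dist(p, q) for q in points))

cluster = [(1, 1), (2, 1), (2, 2), (9, 9)]
print(medoid(cluster))  # (2, 2)
```

Unlike the mean (3.5, 3.25), which is not one of the objects and is pulled toward the outlier (9, 9), the medoid is always an actual data object.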

PAM (Partitioning Around Medoids, 1987)

Starts from an initial set of medoids and iteratively replaces one of the medoids with one of the non-medoids if the swap reduces the total distance of the resulting clustering
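The swap idea can be illustrated with a minimal Python sketch. It assumes Euclidean distance and takes the initial medoids as given (PAM's own BUILD initialization phase is omitted); on each pass it applies the single (medoid, non-medoid) swap that lowers the total cost the most. `total_cost` and `pam` are hypothetical helper names, and for simplicity the full cost is recomputed for every candidate rather than updated incrementally.

```python
import math

def total_cost(points, medoids):
    """Sum of distances from every point to its nearest medoid (medoids are indices)."""
    return sum(min(math.dist(p, points[m]) for m in medoids) for p in points)

def pam(points, initial_medoids):
    """Swap phase: repeatedly apply the medoid/non-medoid swap that lowers
    the total cost the most; stop when no swap improves the clustering."""
    medoids = list(initial_medoids)
    cost = total_cost(points, medoids)
    while True:
        best_cost, best_swap = cost, None
        for i in range(len(medoids)):          # medoid to replace
            for o in range(len(points)):       # candidate non-medoid
                if o in medoids:
                    continue
                candidate = medoids[:i] + [o] + medoids[i + 1:]
                c = total_cost(points, candidate)
                if c < best_cost:
                    best_cost, best_swap = c, candidate
        if best_swap is None:  # no swap reduces the total distance
            return medoids, cost
        medoids, cost = best_swap, best_cost

pts = [(1, 1), (2, 1), (1, 2), (8, 8), (9, 8), (8, 9)]
meds, cost = pam(pts, initial_medoids=[0, 1])
print(sorted(meds), round(cost, 3))  # [0, 3] 4.0
```

Starting with both medoids in the lower-left group, the first pass swaps point 1 for point 3, giving one medoid per group; no further swap lowers the cost, so the search stops.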

PAM works effectively for small data sets but does not scale well to large data sets
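A rough operation count shows why. Let n be the number of objects and k the number of medoids, and assume, as in the usual textbook analysis, that evaluating one swap takes time linear in the number of non-medoids. One PAM iteration then examines every (medoid, non-medoid) pair:

```latex
\underbrace{k\,(n-k)}_{\text{candidate swaps}} \times \underbrace{O(n-k)}_{\text{evaluate one swap}} = O\!\left(k\,(n-k)^2\right)
```

With n = 1,000 and k = 10, for instance, k(n - k)^2 = 10 × 990^2 = 9,801,000, so the work per iteration grows roughly quadratically with n.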

CLARA (Kaufman & Rousseeuw, 1990)
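CLARA avoids running PAM on all n objects: it draws several random samples, runs PAM on each sample, and keeps the medoid set whose total cost over the whole data set is lowest. The sketch below is a minimal illustration assuming Euclidean distance; `clara`, `pam`, and `total_cost` are hypothetical names, the inner PAM is a simple first-improvement swap search, and the defaults (5 samples of 40 + 2k objects) are values often quoted for CLARA but should be treated as assumptions.

```python
import math
import random

def total_cost(points, medoids):
    """Sum of distances from each point to its nearest medoid (medoids are points)."""
    return sum(min(math.dist(p, m) for m in medoids) for p in points)

def pam(points, k):
    """Small swap-based PAM on `points`; starts from the first k points."""
    medoids = list(points[:k])
    cost = total_cost(points, medoids)
    improved = True
    while improved:
        improved = False
        for i in range(k):
            for o in points:
                if o in medoids:
                    continue
                trial = medoids[:i] + [o] + medoids[i + 1:]
                trial_cost = total_cost(points, trial)
                if trial_cost < cost:  # accept the first improving swap
                    medoids, cost, improved = trial, trial_cost, True
    return medoids

def clara(points, k, num_samples=5, sample_size=None, seed=0):
    """Run PAM on several random samples and keep the medoid set with the
    lowest total cost measured on the whole data set."""
    rng = random.Random(seed)
    if sample_size is None:
        sample_size = min(len(points), 40 + 2 * k)
    best, best_cost = None, math.inf
    for _ in range(num_samples):
        sample = rng.sample(points, sample_size)
        medoids = pam(sample, k)
        cost = total_cost(points, medoids)  # evaluate on all objects, not just the sample
        if cost < best_cost:
            best, best_cost = medoids, cost
    return best, best_cost

# Example: two well-separated Gaussian blobs of 200 points each.
gen = random.Random(1)
data = [(gen.gauss(0, 1), gen.gauss(0, 1)) for _ in range(200)]
data += [(gen.gauss(10, 1), gen.gauss(10, 1)) for _ in range(200)]
found, found_cost = clara(data, k=2)
print(sorted(found), round(found_cost, 2))
```

PAM only ever sees 44 objects per run here, while the final comparison still uses all 400, which is the trade-off CLARA makes: lower cost per run in exchange for depending on how representative the samples are.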

CLARANS (Ng & Han, 1994): Randomized sampling
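CLARANS can be viewed as a randomized search over medoid sets, where a "neighbor" of the current set differs in exactly one medoid. A minimal Python sketch, assuming Euclidean distance and small in-memory data: `numlocal` (number of independent restarts) and `maxneighbor` (how many random neighbors to try before accepting a local minimum) mirror the parameters described in Ng & Han's paper, but the default values and the helper names here are illustrative assumptions.

```python
import math
import random

def total_cost(points, medoids):
    """Sum of distances from each point to its nearest medoid (medoids are indices)."""
    return sum(min(math.dist(p, points[m]) for m in medoids) for p in points)

def clarans(points, k, numlocal=2, maxneighbor=50, seed=0):
    """Randomized search over medoid sets; returns the best local minimum found."""
    rng = random.Random(seed)
    n = len(points)
    best, best_cost = None, math.inf
    for _ in range(numlocal):  # independent local searches
        current = rng.sample(range(n), k)
        current_cost = total_cost(points, current)
        tries = 0
        while tries < maxneighbor:
            # Random neighbor: replace one random medoid by one random non-medoid.
            i = rng.randrange(k)
            o = rng.choice([x for x in range(n) if x not in current])
            neighbor = current[:i] + [o] + current[i + 1:]
            neighbor_cost = total_cost(points, neighbor)
            if neighbor_cost < current_cost:
                current, current_cost, tries = neighbor, neighbor_cost, 0
            else:
                tries += 1
        if current_cost < best_cost:  # keep the best local minimum
            best, best_cost = current, current_cost
    return best, best_cost

pts = [(1, 1), (2, 1), (1, 2), (8, 8), (9, 8), (8, 9)]
print(clarans(pts, k=2, maxneighbor=200))
```

Unlike PAM, which checks every possible swap before moving, this search samples only some neighbors, so each step is cheaper but a returned set is a local minimum only with high probability.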

Focusing + spatial data structure (Ester et al., 1995)


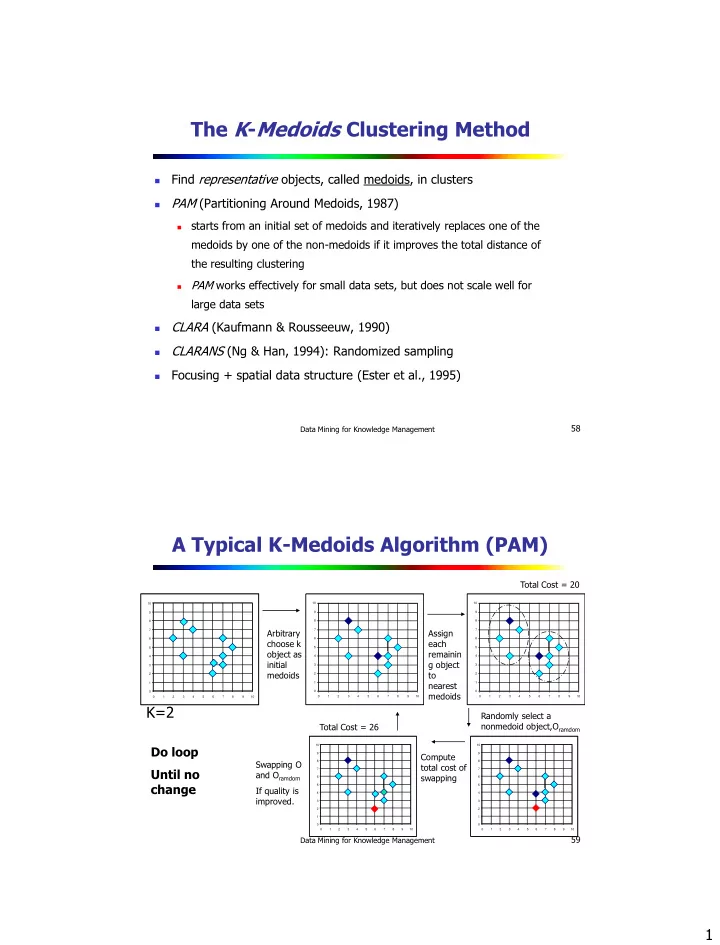

A Typical K-Medoids Algorithm (PAM)

Figure: example with K = 2, objects plotted on axes from 1 to 10.

1. Arbitrarily choose k objects as the initial medoids.

2. Assign each remaining object to its nearest medoid (Total Cost = 20 in the figure).

3. Randomly select a non-medoid object, O_random.

4. Compute the total cost of swapping a medoid O with O_random (Total Cost = 26 in the figure).

5. Swap O and O_random if the quality is improved, i.e., if the total cost decreases.

6. Repeat steps 2 to 5 until there is no change.
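The loop in the figure can be sketched in Python as below. This is an illustrative variant, not the canonical algorithm: it assumes Euclidean distance, tries each randomly ordered O_random against every current medoid O and keeps the cheapest swap, and interprets "until no change" as "a full pass over all non-medoids accepts no swap". `randomized_pam` and `total_cost` are hypothetical names.

```python
import math
import random

def total_cost(points, medoids):
    """Sum of distances from every object to its nearest medoid (medoids are indices)."""
    return sum(min(math.dist(p, points[m]) for m in medoids) for p in points)

def randomized_pam(points, k, seed=0):
    """Visit non-medoids O_random in random order and swap one in
    whenever that lowers the total cost (S < 0)."""
    rng = random.Random(seed)
    n = len(points)
    medoids = rng.sample(range(n), k)   # arbitrarily choose k initial medoids
    cost = total_cost(points, medoids)  # cost of assigning objects to nearest medoids
    changed = True
    while changed:  # do loop until no change
        changed = False
        candidates = [o for o in range(n) if o not in medoids]
        rng.shuffle(candidates)
        for o_random in candidates:
            # Try swapping O_random with each current medoid O; keep the cheapest.
            trials = [medoids[:j] + [o_random] + medoids[j + 1:] for j in range(k)]
            trial_costs = [total_cost(points, t) for t in trials]
            best = min(range(k), key=trial_costs.__getitem__)
            s = trial_costs[best] - cost  # S < 0 means the swap improves quality
            if s < 0:
                medoids, cost, changed = trials[best], trial_costs[best], True
    return medoids, cost

pts = [(1, 1), (2, 1), (1, 2), (8, 8), (9, 8), (8, 9)]
print(randomized_pam(pts, k=2))
```

Because a pass ends without change only when no single swap lowers the cost, the result is a local optimum of the swap search. Under the rule S < 0, a candidate swap that raises the total cost, as in the figure's move from 20 to 26 (S = +6), is rejected.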