Introduction The DSM matrix

The DSM data matrix

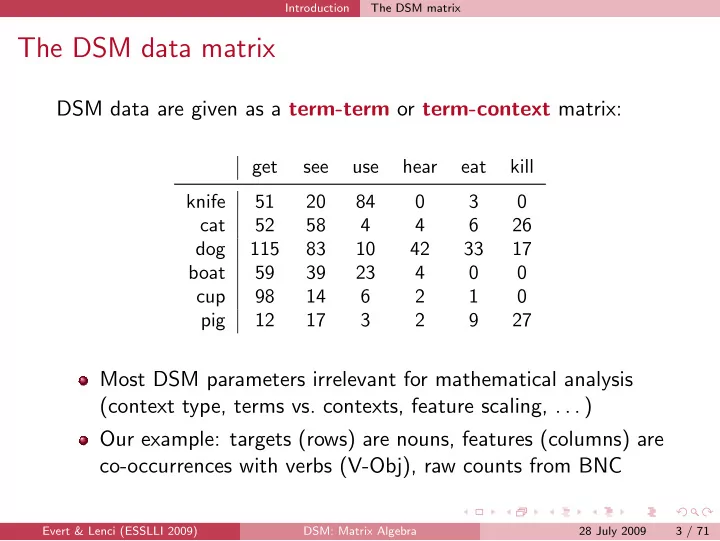

DSM data are given as a term-term or term-context matrix:

get see use hear eat kill knife 51 20 84 3 cat 52 58 4 4 6 26 dog 115 83 10 42 33 17 boat 59 39 23 4 cup 98 14 6 2 1 pig 12 17 3 2 9 27

Most DSM parameters irrelevant for mathematical analysis (context type, terms vs. contexts, feature scaling, . . . ) Our example: targets (rows) are nouns, features (columns) are co-occurrences with verbs (V-Obj), raw counts from BNC

Evert & Lenci (ESSLLI 2009) DSM: Matrix Algebra 28 July 2009 3 / 71