Taxonomy of generative models

- Prof. Leal-Taixé and Prof. Niessner


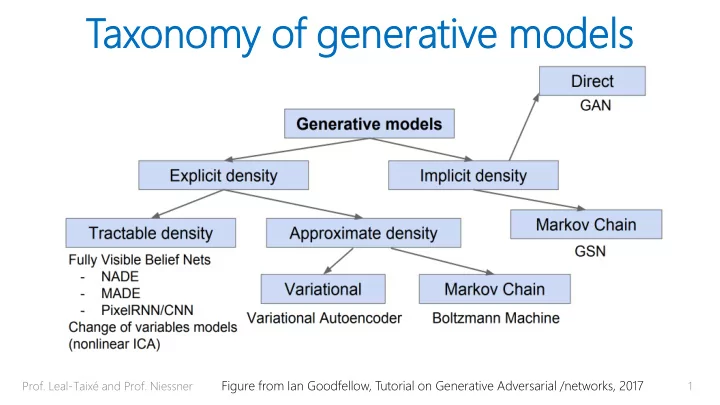

Figure from Ian Goodfellow, Tutorial on Generative Adversarial Networks, 2017


https://github.com/hindupuravinash/the-gan-zoo

Convolution: no padding, no stride

https://github.com/vdumoulin/conv_arithmetic

Transposed convolution: no padding, no stride

[Figure: input and output grids for the convolution and the transposed convolution]
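The size arithmetic behind these two figures can be sketched as below. This is a minimal illustration based on the standard output-size formulas; the function names and the 4×4 input with a 3×3 kernel are assumptions chosen for the example, not values taken from the slides.

```python
def conv_output_size(i, k, stride=1, padding=0):
    """Output width of a convolution over an input of width i with kernel width k."""
    return (i + 2 * padding - k) // stride + 1


def transposed_conv_output_size(i, k, stride=1, padding=0):
    """Output width of a transposed convolution (no extra output padding)."""
    return (i - 1) * stride - 2 * padding + k


# No padding, unit stride: a 3x3 kernel shrinks a 4x4 input to 2x2,
# and the matching transposed convolution grows 2x2 back to 4x4.
small = conv_output_size(4, 3)
restored = transposed_conv_output_size(small, 3)
print(small, restored)
```

The transposed convolution restores the spatial size, not the original values.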

Input Image → Conv → Deconv → Output Image

Reconstruction Loss (often L2)

Latent space z, dim(z) < dim(x)
Input x → Reconstruction x’ (input images vs. reconstructed images)

“Test time”: ‘random’ vector → Output Image

Reconstruction Loss (often L2)

Interpolation between two chair models

[Dosovitsky et al. 14] Learning to Generate Chairs


Morphing between chair models

Latent space z dim (z) < dim (x) “Test time”:

‘random’ vector

Reconstruction loss: often L2, i.e., the sum of squared distances.
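As a small sketch of what the L2 reconstruction loss computes, assuming the image and its reconstruction are given as flat lists of pixel values (the function name and the sample values are hypothetical):

```python
def l2_reconstruction_loss(x, x_rec):
    """Sum of squared differences between an input and its reconstruction."""
    if len(x) != len(x_rec):
        raise ValueError("x and x_rec must have the same length")
    return sum((a - b) ** 2 for a, b in zip(x, x_rec))


x = [0.0, 0.5, 1.0]       # hypothetical flattened input pixels
x_rec = [0.1, 0.5, 0.8]   # hypothetical reconstruction
loss = l2_reconstruction_loss(x, x_rec)
print(loss)
```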

Instead of L2, can we “learn” a loss function?

[Goodfellow et al. 14] GANs (slide McGuinness)


$z \rightarrow G \rightarrow G(z) \rightarrow D \rightarrow D(G(z))$


$z \rightarrow G \rightarrow G(z) \rightarrow D \rightarrow D(G(z))$ and $x \rightarrow D \rightarrow D(x)$

[Goodfellow et al. 14/16] GANs

real data fake data

Discriminator loss and generator loss: binary cross entropy

– G minimizes probability that D is correct
– Equilibrium is saddle point of discriminator loss
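The binary cross entropy formulation of the minimax game can be sketched as follows. This assumes the usual convention (label 1 for real data, label 0 for fake data, each term weighted by 1/2) and takes the discriminator outputs as plain lists of probabilities; the function names are illustrative.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross entropy: D(x) should be 1 on real data, D(G(z)) 0 on fake data."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return -0.5 * real_term - 0.5 * fake_term


def minimax_generator_loss(d_real, d_fake):
    """Zero-sum game: the generator's loss is the negated discriminator loss."""
    return -discriminator_loss(d_real, d_fake)


# When D cannot tell real from fake (all outputs 0.5), its loss equals log 2.
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))
```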

D provides supervision (i.e., gradients) for G

– G maximizes the log-probability of D being mistaken
– G can still learn even when D rejects all generator samples
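Why the generator can still learn under this heuristic loss can be checked numerically. The sketch below assumes D ends in a sigmoid and compares the gradient of each generator loss with respect to D's pre-sigmoid output (the logit) for a fake sample that D confidently rejects; all names are illustrative.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def heuristic_generator_loss(d_fake):
    """G maximizes log D(G(z)), i.e. minimizes its negative."""
    return -0.5 * sum(math.log(p) for p in d_fake) / len(d_fake)


def minimax_generator_grad(logit):
    """d/d(logit) of 0.5 * log(1 - D), with D = sigmoid(logit)."""
    return -0.5 * sigmoid(logit)


def heuristic_generator_grad(logit):
    """d/d(logit) of -0.5 * log(D), with D = sigmoid(logit)."""
    return -0.5 * (1.0 - sigmoid(logit))


# D rejects the sample with high confidence: D(G(z)) = sigmoid(-10) is close to 0.
print(minimax_generator_grad(-10.0))    # nearly zero: the minimax loss saturates
print(heuristic_generator_grad(-10.0))  # close to -0.5: still a useful gradient
```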

Discriminator loss


Generator loss


https://papers.nips.cc/paper/5423-generative-adversarial-nets


https://medium.com/ai-society/gans-from-scratch-1-a-deep-introduction-with-code-in-pytorch-and-tensorflow-cb03cdcdba0f


Minimax Heuristic

DCGAN: https://github.com/carpedm20/DCGAN-tensorflow

Generator of Deep Convolutional GANs


Results on MNIST


Results on CelebA (200k relatively well aligned portrait photos)


Asian face dataset


Loss of D and G on custom dataset


https://stackoverflow.com/questions/44313306/dcgans-discriminator-getting-too-strong-too-quickly-to-allow-generator-to-learn


https://stackoverflow.com/questions/42690721/how-to-interpret-the-discriminators-loss-and-the-generators-loss-in-generative

while loss_discriminator > t_d:
    train discriminator
while loss_generator > t_g:
    train generator
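A runnable sketch of this threshold-based schedule is shown below. The training steps are stand-ins: `make_toy_step` is a hypothetical helper that only simulates a decaying loss, and the `max_steps` cap is an added assumption so that an unreachable threshold cannot stall the loop.

```python
def train_until(step, threshold, max_steps):
    """Call step() until the returned loss is <= threshold or max_steps is reached."""
    steps, loss = 0, float("inf")
    while loss > threshold and steps < max_steps:
        loss = step()
        steps += 1
    return steps, loss


def balanced_round(train_d, train_g, t_d, t_g, max_steps=100):
    """One round: train D until its loss is below t_d, then G until below t_g."""
    d_steps, loss_d = train_until(train_d, t_d, max_steps)
    g_steps, loss_g = train_until(train_g, t_g, max_steps)
    return d_steps, g_steps, loss_d, loss_g


def make_toy_step(start, decay):
    """Hypothetical stand-in for a real training step: the loss just decays."""
    state = {"loss": start}

    def step():
        state["loss"] *= decay
        return state["loss"]

    return step


print(balanced_round(make_toy_step(1.0, 0.5), make_toy_step(1.0, 0.5), 0.2, 0.4))
```

In a real setup `train_d` and `train_g` would each run one optimizer step on a mini-batch and return the current loss.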


Need balance

– No good gradients (cannot get better than teacher…)

– Discriminator will always be right


$\min_G \max_D V(G, D) \neq \max_D \min_G V(G, D)$
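That the order of min and max matters can be seen on a tiny discrete game. The 2×2 payoff table below is a made-up example (rows stand for choices of G, columns for choices of D), not something taken from the slides.

```python
# Hypothetical payoff table V[g][d]: rows are G's choices, columns are D's choices.
payoff = [[1, -1],
          [-1, 1]]


def min_max(table):
    """G commits first (outer min), D responds with its best column (inner max)."""
    return min(max(row) for row in table)


def max_min(table):
    """D commits first (outer max), G responds with its best row (inner min)."""
    columns = list(zip(*table))
    return max(min(col) for col in columns)


print(min_max(payoff), max_min(payoff))
```

Whoever moves second gets to react, so the two values differ here; in general the max-min value never exceeds the min-max value.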

sample


[Metz et al. 16]

correlates with # of modes


Slide credit Ming-Yu Liu

GAN-results on specific domains (e.g., faces)

correlates with dim of manifold


more mode collapse


they are

will always look good, but how to quantify?


Human evaluation:

hyperparameters…


Inception Score (IS)

generated images


classified with high confidence (i.e., high scores only

classes
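The two ingredients (confident per-image predictions, spread over many classes) are combined in the usual Inception Score formula, IS = exp(E_x KL(p(y|x) || p(y))). The sketch below assumes the class probabilities p(y|x) are already available as lists; in practice they would come from a pretrained Inception classifier, which is not part of this example.

```python
import math


def inception_score(probs):
    """probs: one class-probability list p(y|x) per generated image."""
    n = len(probs)
    num_classes = len(probs[0])
    # Marginal class distribution p(y) over all generated images.
    marginal = [sum(p[c] for p in probs) / n for c in range(num_classes)]
    kl_sum = 0.0
    for p in probs:
        # KL(p(y|x) || p(y)); zero-probability classes contribute nothing.
        kl_sum += sum(pc * math.log(pc / marginal[c])
                      for c, pc in enumerate(p) if pc > 0.0)
    return math.exp(kl_sum / n)


confident_and_diverse = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
collapsed = [[1.0, 0.0, 0.0]] * 3
print(inception_score(confident_and_diverse), inception_score(collapsed))
```

With confident predictions spread evenly over three classes the score reaches the number of classes; when every image falls into the same class it drops to 1.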


What if we only have one good image per class?

– If we end up with a strong discriminator, then generator must also be good
– Use D features for classification network
– Only fine-tune last layer
– If high class accuracy -> we have a good D and G


Caveat: not sure if people do this... Couldn’t find paper


https://github.com/soumith/ganhacks


interpolation via a great circle, rather than a straight line from point A to point B

Networks ref code https://github.com/dribnet/plat has more details
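Interpolating along a great circle is commonly implemented as spherical linear interpolation (slerp). The following is a minimal sketch of that formula on plain Python lists; it is not the code from the linked repository, and the fallback to linear interpolation for nearly (anti-)parallel vectors is an added assumption.

```python
import math


def slerp(t, a, b):
    """Spherical interpolation between latent vectors a and b, with t in [0, 1]."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    omega = math.acos(cos_omega)  # angle between a and b
    if math.sin(omega) < 1e-8:
        # Nearly parallel or anti-parallel: fall back to a straight line.
        return [(1.0 - t) * x + t * y for x, y in zip(a, b)]
    w_a = math.sin((1.0 - t) * omega) / math.sin(omega)
    w_b = math.sin(t * omega) / math.sin(omega)
    return [w_a * x + w_b * y for x, y in zip(a, b)]


midpoint = slerp(0.5, [1.0, 0.0], [0.0, 1.0])
print(midpoint)
```

Unlike the straight-line midpoint (0.5, 0.5), the slerp midpoint of two unit vectors keeps unit length.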

batches for real and fake, i.e. each mini-batch needs to contain

all generated images.


signal to generator:


Salimans et al. 17 “Improved Techniques for Training GANs”

Some value smaller than 1; e.g., 0.9
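Using a target below 1 for real samples is usually called one-sided label smoothing. A minimal sketch, assuming discriminator outputs are given as probabilities and only the real label is smoothed (function names are illustrative):

```python
import math


def bce(prediction, target):
    """Binary cross entropy for one prediction in (0, 1) and a soft target."""
    return -(target * math.log(prediction)
             + (1.0 - target) * math.log(1.0 - prediction))


def smoothed_discriminator_loss(d_real, d_fake, real_target=0.9):
    """One-sided smoothing: real samples get real_target, fake samples stay at 0."""
    real_loss = sum(bce(p, real_target) for p in d_real) / len(d_real)
    fake_loss = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real_loss + fake_loss


# With target 0.9 the loss on real data is lowest at D(x) = 0.9,
# so D is penalized for becoming over-confident.
print(bce(0.9, 0.9), bce(0.99, 0.9))
```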

𝝁


Shrivastava et al. 17 “Learning from Simulated and Unsupervised Images through Adversarial Training”

Help stabilize discriminator training in early stage

[Shi et al. 16] https://arxiv.org/pdf/1609.05158.pdf

biased toward the latest iterations (i.e., latest training samples),

averaged
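Averaging weights over iterations is often done with an exponential moving average kept alongside the trained weights. The sketch below assumes that reading of the slide; the decay value and the function name are illustrative choices.

```python
def update_ema(ema_weights, weights, decay=0.999):
    """Blend the current weights into a running exponential average."""
    return [decay * e + (1.0 - decay) * w for e, w in zip(ema_weights, weights)]


# Hypothetical usage: after every optimizer step, refresh the averaged copy
# and use the averaged copy (not the raw weights) for generating samples.
ema = [0.0, 0.0]
for weights in ([1.0, 2.0], [1.2, 1.8], [0.8, 2.2]):
    ema = update_ema(ema, weights, decay=0.9)
print(ema)
```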


“heuristic is standard…”
EBGAN: “Energy-based Generative Adversarial Networks”
BEGAN: “Boundary Equilibrium GAN”
WGAN: “Wasserstein Generative Adversarial Networks”
LSGAN: “Least Squares Generative Adversarial Networks”
…
The loss function alone will not make it suddenly work!


D(x) for real images to be low.

the reconstruction error for generated images drops below a value m.
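These two statements correspond to the margin-based EBGAN objective, where D is an autoencoder and D(·) is its reconstruction error. A minimal sketch under that assumption, with scalar reconstruction errors passed in directly (function names and sample values are illustrative):

```python
def ebgan_discriminator_loss(err_real, err_fake, margin):
    """Low error on real images; fake images are penalized only below the margin m."""
    return err_real + max(0.0, margin - err_fake)


def ebgan_generator_loss(err_fake):
    """G wants its samples to be reconstructed well, i.e. a low D(G(z))."""
    return err_fake


# Fake error 0.5 is below the margin m = 1.0, so D is penalized by 0.5;
# fake error 1.5 is above the margin, so only the real term remains.
print(ebgan_discriminator_loss(0.2, 0.5, 1.0), ebgan_discriminator_loss(0.2, 1.5, 1.0))
```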


https://medium.com/@jonathan_hui/gan-energy-based-gan-ebgan-boundary-equilibrium-gan-began-4662cceb7824

loss, measure difference in data distribution of real and generated images


Minimum amount of work to move earth from p(x) to q(x)
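For one-dimensional histograms on equally spaced bins, this minimum work has a simple closed form: sweep left to right and accumulate the amount of earth that must be carried past each bin boundary. The sketch assumes both histograms have the same total mass and unit bin spacing.

```python
def earth_movers_distance_1d(p, q):
    """Minimum work to turn histogram p into q (unit-spaced bins, equal total mass)."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same number of bins")
    work, carried = 0.0, 0.0
    for p_i, q_i in zip(p, q):
        carried += p_i - q_i   # surplus (or deficit) that moves on to the next bin
        work += abs(carried)   # moving it by one bin costs |carried|
    return work


# All mass sits in bin 0 and must end up in bin 2: one unit moved two bins.
print(earth_movers_distance_1d([1.0, 0.0, 0.0], [0.0, 0.0, 1.0]))
```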

https://medium.com/@jonathan_hui/gan-wasserstein-gan-wgan-gp-6a1a2aa1b490


1-Lipschitz function: upper bound between densities


f is a critic function, defined by a neural network

weights of the discriminator (critic) must be within a certain range [-c, c], controlled by a hyperparameter c
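A minimal sketch of the critic objective and the weight clipping step, assuming the critic scores f(x) and f(G(z)) are given as lists of floats and the weights as a flat list; the default c = 0.01 is an illustrative value, not one stated on the slide.

```python
def critic_loss(f_real, f_fake):
    """Critic maximizes mean f(x) - mean f(G(z)); return the negative as a loss."""
    return -(sum(f_real) / len(f_real) - sum(f_fake) / len(f_fake))


def clip_weights(weights, c=0.01):
    """Force every weight into [-c, c] after an update of the critic."""
    return [max(-c, min(c, w)) for w in weights]


print(critic_loss([1.0, 3.0], [0.0, 1.0]))
print(clip_weights([-0.5, 0.005, 0.2], c=0.01))
```

Clipping is applied after each critic update and is the crude way of keeping f approximately Lipschitz.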


+ mitigates mode collapse
+ generator still learns when critic performs well
+ actual convergence

training


train the actual loss (i.e., D) to provide gradients for G

randomly shuffle loss around; always try easy things first (AE, VAE, ‘simple heuristic’ GAN)


Credit: Li/Karpathy/Johnson


https://github.com/tkarras/progressive_growing_of_gans [Karras et al. 17]

[Figure sequence: progressive growing of GANs. G maps a latent vector to a generated image; D decides real or fake.]

Training G and D directly at 1024×1024: “There’s waves everywhere! But where’s the shore?” With G and D at 64×64 first: “There it is!”

Training starts with G and D at 4×4; layers are then added step by step (8×8, 16×16, 32×32, … up to 1024×1024).

G: replicated block = 2x nearest-neighbor upsampling + 3×3 convolution; toRGB = 1×1 convolution; a newly added resolution is blended in with a linear crossfade of the toRGB outputs.

D: mirrors G with fromRGB and 0.5x downsampling (32×32 → 16×16 → 8×8 → 4×4).
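The nearest-neighbor upsampling and the linear crossfade used when a new resolution is added can be sketched on plain nested lists. This is a toy illustration with single-channel images; the function names and the `alpha` schedule are assumptions, not code from the linked repository.

```python
def upsample_nearest_2x(image):
    """Nearest-neighbor 2x upsampling: every pixel becomes a 2x2 block."""
    out = []
    for row in image:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def crossfade(old_rgb_upsampled, new_rgb, alpha):
    """Blend the upsampled old output with the new layer's output; alpha goes 0 -> 1."""
    return [[(1.0 - alpha) * o + alpha * n for o, n in zip(o_row, n_row)]
            for o_row, n_row in zip(old_rgb_upsampled, new_rgb)]


old = upsample_nearest_2x([[1.0, 2.0], [3.0, 4.0]])   # 2x2 -> 4x4
new = [[0.0] * 4 for _ in range(4)]                    # hypothetical new-layer output
print(crossfade(old, new, alpha=0.25))
```

At `alpha = 0` the network behaves like the lower-resolution one; at `alpha = 1` only the new layer contributes.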


-> Mostly with different losses
-> Extremely hard to train and evaluate

– Conditional GANs (cGANs)!

will send around asap.
