(c) 2003 Thomas G. Dietterich and Devika Subramanian 1

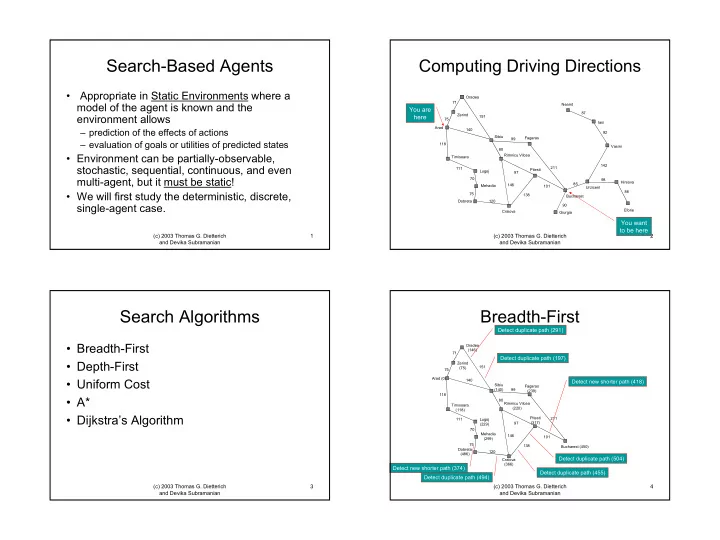

Search-Based Agents

- Appropriate in static environments where a model of the agent is known and the environment allows
  - prediction of the effects of actions
  - evaluation of goals or utilities of predicted states
- Environment can be partially observable, stochastic, sequential, continuous, and even multi-agent, but it must be static!
- We will first study the deterministic, discrete, single-agent case.
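
One way to read the two requirements above (predicting the effects of actions, evaluating goals on predicted states) is as a small interface that a search procedure can call. The sketch below is an illustration only: the names `SearchProblem`, `successors`, `is_goal`, and `predict`, as well as the toy counting problem, are assumptions made for this example and do not come from the slides.

```python
from dataclasses import dataclass
from typing import Callable, Hashable, Iterable, Tuple

# (action name, predicted next state, step cost)
Step = Tuple[str, Hashable, float]


@dataclass(frozen=True)
class SearchProblem:
    """Hypothetical container for a deterministic, discrete, single-agent problem."""
    initial_state: Hashable
    successors: Callable[[Hashable], Iterable[Step]]  # model: effects of actions
    is_goal: Callable[[Hashable], bool]               # goal evaluation


def predict(problem, actions):
    """Use the model to predict where a sequence of action names leads.

    Nothing is executed in the world; the environment is assumed static,
    so the prediction stays valid while the agent deliberates.
    """
    state, total = problem.initial_state, 0.0
    for name in actions:
        options = {a: (s, c) for a, s, c in problem.successors(state)}
        state, step_cost = options[name]
        total += step_cost
    return state, total


def counter_successors(n):
    """Toy model: from integer n the agent may add one or double."""
    return [("+1", n + 1, 1.0), ("*2", n * 2, 1.0)]


# Toy problem: start at 1, goal is to reach exactly 10.
COUNTER = SearchProblem(1, counter_successors, lambda n: n == 10)

final_state, total_cost = predict(COUNTER, ["*2", "*2", "+1", "*2"])
print(final_state, COUNTER.is_goal(final_state), total_cost)
```

A search algorithm would call `successors` and `is_goal` in the same way, but would choose the action sequence itself instead of being handed one.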

Computing Driving Directions

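[Figure: road map of Romania. Cities shown: Arad, Oradea, Zerind, Timisoara, Lugoj, Dobreta, Sibiu, Rimnicu Vilcea, Fagaras, Pitesti, Mehadia, Craiova, Bucharest, Giurgiu, Urziceni, Neamt, Iasi, Vaslui, Hirsova, Eforie. Roads are labeled with distances: 71, 75, 118, 140, 151, 99, 80, 111, 70, 75, 120, 146, 97, 138, 101, 211, 85, 90, 142, 92, 87, 98, 86. Two cities are marked "You are here" and "You want to be here".]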
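
The map can be treated as a weighted, undirected graph. The sketch below is one possible encoding, not the authors' code. The pairing of each distance with a specific road is an assumption: the flattened figure lists only the numbers, so the pairs here follow the standard textbook map of Romania that these distances appear to come from. `ROADS`, `build_graph`, and `route_length` are hypothetical names. Arad and Bucharest are used as endpoints because the search tree later in the slides is rooted at Arad and reaches Bucharest.

```python
# (city, city, distance); pairing of distances to roads is assumed, see above.
ROADS = [
    ("Arad", "Zerind", 75), ("Arad", "Sibiu", 140), ("Arad", "Timisoara", 118),
    ("Zerind", "Oradea", 71), ("Oradea", "Sibiu", 151),
    ("Sibiu", "Rimnicu Vilcea", 80), ("Sibiu", "Fagaras", 99),
    ("Timisoara", "Lugoj", 111), ("Lugoj", "Mehadia", 70),
    ("Mehadia", "Dobreta", 75), ("Dobreta", "Craiova", 120),
    ("Rimnicu Vilcea", "Craiova", 146), ("Rimnicu Vilcea", "Pitesti", 97),
    ("Craiova", "Pitesti", 138), ("Fagaras", "Bucharest", 211),
    ("Pitesti", "Bucharest", 101), ("Bucharest", "Giurgiu", 90),
    ("Bucharest", "Urziceni", 85), ("Urziceni", "Vaslui", 142),
    ("Urziceni", "Hirsova", 98), ("Hirsova", "Eforie", 86),
    ("Vaslui", "Iasi", 92), ("Iasi", "Neamt", 87),
]


def build_graph(roads):
    """Return {city: {neighbor: distance}}, storing every road in both directions."""
    graph = {}
    for a, b, dist in roads:
        graph.setdefault(a, {})[b] = dist
        graph.setdefault(b, {})[a] = dist
    return graph


def route_length(graph, route):
    """Sum the road distances along a list of cities (KeyError if no direct road)."""
    return sum(graph[a][b] for a, b in zip(route, route[1:]))


GRAPH = build_graph(ROADS)
via_fagaras = route_length(GRAPH, ["Arad", "Sibiu", "Fagaras", "Bucharest"])
via_pitesti = route_length(
    GRAPH, ["Arad", "Sibiu", "Rimnicu Vilcea", "Pitesti", "Bucharest"]
)
print(via_fagaras, via_pitesti)
```

The route through Fagaras uses fewer roads, while the route through Rimnicu Vilcea and Pitesti is shorter in total distance, which is why the choice of search algorithm matters for driving directions.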

Search Algorithms

- Breadth-First

- Depth-First

- Uniform Cost

- A*

- Dijkstra’s Algorithm

Breadth-First

[Figure: breadth-first search tree rooted at Arad. Each node is labeled with a path cost from Arad, and each edge with a road distance.]

Node labels: Arad (0), Zerind (75), Timisoara (118), Lugoj (229), Mehadia (299), Dobreta (486), Sibiu (140), Rimnicu Vilcea (220), Fagaras (239), Craiova (366), Pitesti (317), Bucharest (450), Oradea (146)

Edge labels: 71, 75, 118, 111, 70, 75, 120, 140, 151, 80, 99, 146, 97, 138, 211, 101

Annotations:

- Detect duplicate path (291)
- Detect new shorter path (418)
- Detect duplicate path (455)
- Detect duplicate path (504)
- Detect new shorter path (374)
- Detect duplicate path (494)
- Detect duplicate path (197)
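
The annotations in the figure suggest a breadth-first expansion that tracks path costs: when a city is reached a second time, the new path is either discarded as a duplicate or kept because it is shorter. The sketch below is one way to write that down, assuming the road distances of the standard textbook map of Romania (restricted to the cities in this tree) and treating Bucharest as the goal, which is not expanded. The names `ROADS`, `build_graph`, `breadth_first`, and `route` are hypothetical, and the neighbor ordering is chosen for this example, so events need not occur in the figure's order.

```python
from collections import deque

# (city, city, distance); assumed from the standard textbook map, see above.
ROADS = [
    ("Arad", "Zerind", 75), ("Arad", "Sibiu", 140), ("Arad", "Timisoara", 118),
    ("Zerind", "Oradea", 71), ("Oradea", "Sibiu", 151),
    ("Sibiu", "Rimnicu Vilcea", 80), ("Sibiu", "Fagaras", 99),
    ("Timisoara", "Lugoj", 111), ("Lugoj", "Mehadia", 70),
    ("Mehadia", "Dobreta", 75), ("Dobreta", "Craiova", 120),
    ("Rimnicu Vilcea", "Craiova", 146), ("Rimnicu Vilcea", "Pitesti", 97),
    ("Craiova", "Pitesti", 138), ("Fagaras", "Bucharest", 211),
    ("Pitesti", "Bucharest", 101),
]


def build_graph(roads):
    """Return {city: [(neighbor, distance), ...]} with roads in both directions."""
    graph = {}
    for a, b, dist in roads:
        graph.setdefault(a, []).append((b, dist))
        graph.setdefault(b, []).append((a, dist))
    return graph


def breadth_first(graph, start, goal):
    """FIFO expansion that records the cheapest known path cost to each city."""
    cost, parent, events = {start: 0}, {start: None}, []
    frontier, queued = deque([start]), {start}
    while frontier:
        city = frontier.popleft()
        queued.discard(city)
        if city == goal:
            continue  # the goal is a leaf: never expanded
        for nxt, dist in graph[city]:
            if nxt == parent[city]:
                continue  # do not step straight back along the incoming road
            new_cost = cost[city] + dist
            if nxt in cost and new_cost >= cost[nxt]:
                events.append(("duplicate", nxt, new_cost))
                continue
            if nxt in cost:
                events.append(("shorter", nxt, new_cost))
            cost[nxt], parent[nxt] = new_cost, city
            if nxt not in queued:  # expand again so the improvement propagates
                frontier.append(nxt)
                queued.add(nxt)
    return cost, parent, events


def route(parent, city):
    """Follow parent links back to the start and return the path in order."""
    path = []
    while city is not None:
        path.append(city)
        city = parent[city]
    return path[::-1]


cost, parent, events = breadth_first(build_graph(ROADS), "Arad", "Bucharest")
print(cost["Bucharest"], route(parent, "Bucharest"))
for kind, city, c in events:
    print(kind, city, c)
```

Under these assumptions the sketch first reaches Bucharest at cost 450 and later replaces it with the 418 path through Pitesti, and it reports Dobreta improving from 486 to 374. Six of the seven event costs agree with the figure's annotations. The seventh, the revisit of Sibiu from Oradea, comes out as 297 (146 + 151) here, whereas the figure shows 197, so that one value does not match and is left unverified.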