Jan S. Hesthaven, Brown University, Jan.Hesthaven@Brown.edu

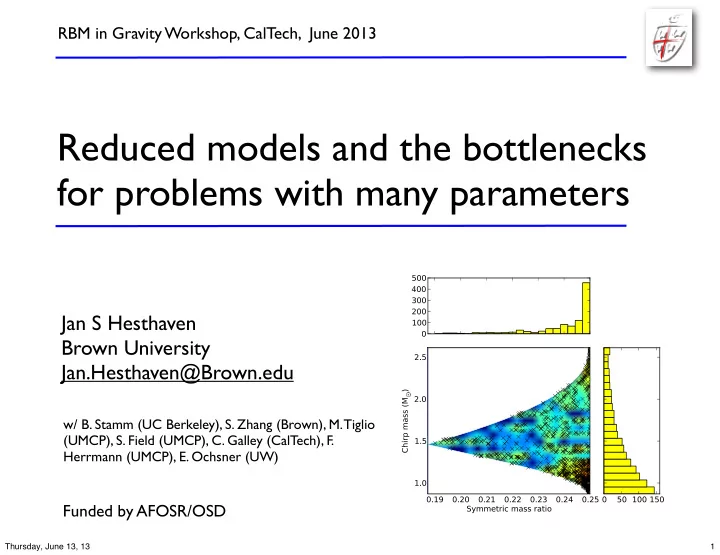

Reduced models and the bottlenecks for problems with many parameters

RBM in Gravity Workshop, CalTech, June 2013

w/ B. Stamm (UC Berkeley), S. Zhang (Brown), M. Tiglio (UMCP), S. Field (UMCP), C. Galley (CalTech), F. Herrmann (UMCP), E. Ochsner (UW)

Funded by AFOSR/OSD

Thursday, June 13, 2013