Learning From Data Lecture 8 Linear Classification and Regression

Linear Classification Linear Regression

- M. Magdon-Ismail

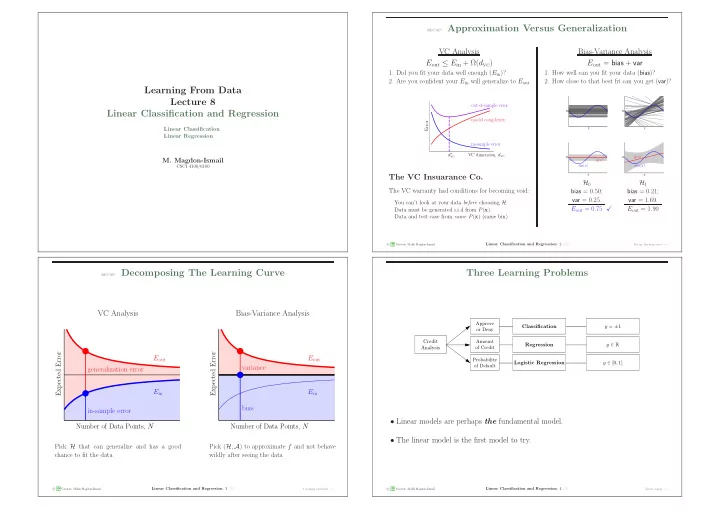

CSCI 4100/6100 recap: Approximation Versus Generalization

VC Analysis Bias-Variance Analysis Eout ≤ Ein + Ω(dvc) Eout = bias + var

- 1. Did you fit your data well enough (Ein)?

- 2. Are you confident your Ein will generalize to Eout

- 1. How well can you fit your data (bias)?

- 2. How close to that best fit can you get (var)?

in-sample error model complexity

- ut-of-sample error

VC dimension, dvc Error d∗

vc

The VC Insuarance Co.

The VC warranty had conditions for becoming void:

You can’t look at your data before choosing H. Data must be generated i.i.d from P(x). Data and test case from same P(x) (same bin).

x y x y x y ¯ g(x) sin(x) x y ¯ g(x) sin(x)

H0 bias = 0.50; var = 0.25. Eout = 0.75 H1 bias = 0.21; var = 1.69. Eout = 1.90

c A M L Creator: Malik Magdon-Ismail

Linear Classification and Regression: 2 /23

Recap: learning curve − →

recap: Decomposing The Learning Curve

VC Analysis Bias-Variance Analysis

Number of Data Points, N Expected Error in-sample error generalization error Eout Ein Number of Data Points, N Expected Error bias variance Eout Ein

Pick H that can generalize and has a good chance to fit the data Pick (H, A) to approximate f and not behave wildly after seeing the data

c A M L Creator: Malik Magdon-Ismail

Linear Classification and Regression: 3 /23

3 learning problems − →

Three Learning Problems

Logistic Regression Credit Analysis Approve

- r Deny

Amount

- f Credit

Probability

- f Default

y ∈ R y ∈ [0, 1] y = ±1 Classification Regression

- Linear models are perhaps the fundamental model.

- The linear model is the first model to try.

c A M L Creator: Malik Magdon-Ismail

Linear Classification and Regression: 4 /23

Linear signal − →