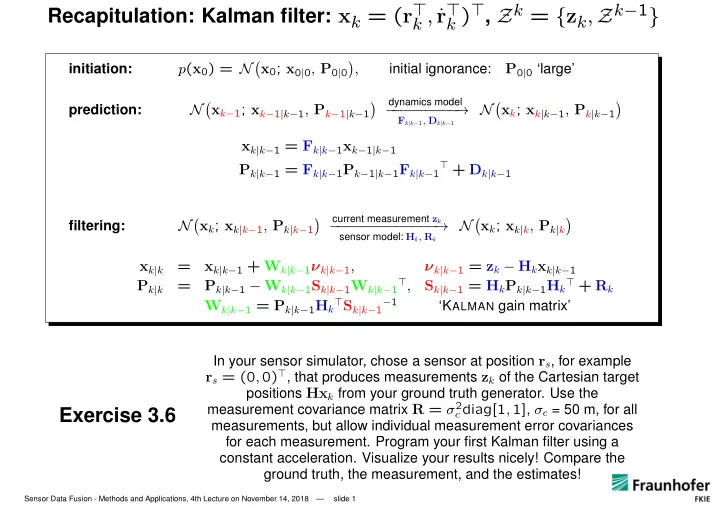

Recapitulation: Kalman filter: $x_k = (r_k^\top, \dot{r}_k^\top)^\top$, $\mathcal{Z}^k = \{z_k, \mathcal{Z}^{k-1}\}$

initiation: $p(x_0) = \mathcal{N}\big(x_0;\; x_{0|0},\, P_{0|0}\big)$, initial ignorance: $P_{0|0}$ ‘large’

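prediction:

$$\mathcal{N}\big(x_{k-1};\; x_{k-1|k-1},\, P_{k-1|k-1}\big) \;\xrightarrow[F_{k|k-1},\; D_{k|k-1}]{\text{dynamics model}}\; \mathcal{N}\big(x_k;\; x_{k|k-1},\, P_{k|k-1}\big)$$

$$x_{k|k-1} = F_{k|k-1}\, x_{k-1|k-1}$$

$$P_{k|k-1} = F_{k|k-1}\, P_{k-1|k-1}\, F_{k|k-1}^\top + D_{k|k-1}$$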
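The prediction step above can be sketched in a few lines of NumPy. This is a minimal illustration, not the lecture's reference code: the function name `kalman_predict`, the time step `T`, and the numerical values of `F`, `D`, `x` and `P` are assumptions chosen only to make the example runnable.

```python
import numpy as np

def kalman_predict(x, P, F, D):
    """Propagate mean x and covariance P through the linear dynamics (F, D)."""
    x_pred = F @ x              # x_{k|k-1} = F x_{k-1|k-1}
    P_pred = F @ P @ F.T + D    # P_{k|k-1} = F P F^T + D
    return x_pred, P_pred

# Hypothetical example: 2D position-velocity state with time step T = 1 s
T = 1.0
I2 = np.eye(2)
F = np.block([[I2, T * I2],
              [np.zeros((2, 2)), I2]])
D = 0.1 * np.eye(4)                      # assumed process noise, illustrative only
x = np.array([0.0, 0.0, 10.0, 5.0])      # position (0, 0), velocity (10, 5)
P = np.eye(4)

x_pred, P_pred = kalman_predict(x, P, F, D)
```

With these values the predicted position moves by one time step along the velocity, and the position variance grows because velocity uncertainty leaks into position.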

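filtering:

$$\mathcal{N}\big(x_k;\; x_{k|k-1},\, P_{k|k-1}\big) \;\xrightarrow[\text{sensor model: } H_k,\; R_k]{\text{current measurement } z_k}\; \mathcal{N}\big(x_k;\; x_{k|k},\, P_{k|k}\big)$$

$$x_{k|k} = x_{k|k-1} + W_{k|k-1}\,\nu_{k|k-1}, \qquad \nu_{k|k-1} = z_k - H_k\, x_{k|k-1}$$

$$P_{k|k} = P_{k|k-1} - W_{k|k-1}\, S_{k|k-1}\, W_{k|k-1}^\top, \qquad S_{k|k-1} = H_k\, P_{k|k-1}\, H_k^\top + R_k$$

$$W_{k|k-1} = P_{k|k-1}\, H_k^\top\, S_{k|k-1}^{-1} \qquad \text{‘KALMAN gain matrix’}$$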
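The filtering step can be written just as directly. The sketch below follows the formulas above term by term; the function name `kalman_update` and all numerical values are assumptions for illustration. The example uses a very large prior covariance to mimic ‘initial ignorance’, so the filtered position lands almost exactly on the measurement.

```python
import numpy as np

def kalman_update(x_pred, P_pred, z, H, R):
    """Condition the predicted density on a measurement z with sensor model (H, R)."""
    nu = z - H @ x_pred                     # innovation
    S = H @ P_pred @ H.T + R                # innovation covariance
    W = P_pred @ H.T @ np.linalg.inv(S)     # Kalman gain matrix
    x_filt = x_pred + W @ nu
    P_filt = P_pred - W @ S @ W.T
    return x_filt, P_filt

# Hypothetical example: position-only measurement of a position-velocity state
H = np.hstack([np.eye(2), np.zeros((2, 2))])
R = 50.0**2 * np.eye(2)                     # sigma_c = 50 m
x_pred = np.zeros(4)
P_pred = 1e6 * np.eye(4)                    # 'large' prior covariance (assumed value)
z = np.array([100.0, -200.0])

x_filt, P_filt = kalman_update(x_pred, P_pred, z, H, R)
```

Because `R` is an argument of the update, a different measurement error covariance can be passed for every measurement.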

Exercise 3.6

In your sensor simulator, choose a sensor at position $r_s$, for example $r_s = (0, 0)^\top$, that produces measurements $z_k$ of the Cartesian target positions $H x_k$ from your ground truth generator. Use the measurement covariance matrix $R = \sigma_c^2\, \mathrm{diag}[1, 1]$, $\sigma_c = 50$ m, for all measurements, but allow individual measurement error covariances for each measurement. Program your first Kalman filter using a constant acceleration model. Visualize your results nicely! Compare the ground truth, the measurements, and the estimates!
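One possible starting point for the exercise is sketched below. Several things in it are assumptions rather than part of the exercise: the straight-line trajectory stands in for your own ground truth generator, the scan interval `T`, the noise level `q`, and the initial velocity variance are invented values, and the dynamics use a position-velocity state driven by white acceleration noise, which may differ from the dynamics model the exercise intends. Only $\sigma_c = 50$ m and the sensor at the origin are taken from the text.

```python
import numpy as np

rng = np.random.default_rng(0)            # fixed seed for a repeatable run

# Assumed values for illustration (not given in the exercise)
T, N = 2.0, 100                           # scan interval [s], number of scans
q = 1.0                                   # acceleration noise level [m/s^2]
sigma_c = 50.0                            # measurement std [m], from the exercise

# Stand-in for the ground truth generator: constant-velocity straight line.
# The sensor sits at r_s = (0, 0), so positions are already sensor-relative.
t = np.arange(N) * T
truth = np.array([1000.0, 500.0]) + np.outer(t, [20.0, 10.0])

I2, O2 = np.eye(2), np.zeros((2, 2))
F = np.block([[I2, T * I2], [O2, I2]])
D = q**2 * np.block([[T**4 / 4 * I2, T**3 / 2 * I2],
                     [T**3 / 2 * I2, T**2 * I2]])
H = np.hstack([I2, O2])                   # picks the position out of the state

# One covariance per measurement (all equal here, but each may differ)
R_list = [sigma_c**2 * I2 for _ in range(N)]
z = np.array([rng.multivariate_normal(truth[k], R_list[k]) for k in range(N)])

# Initiation: first measurement as position, unknown velocity, 'large' P
x = np.concatenate([z[0], [0.0, 0.0]])
P = np.diag([sigma_c**2, sigma_c**2, 1e4, 1e4])
est = [x[:2].copy()]

for k in range(1, N):
    # prediction
    x, P = F @ x, F @ P @ F.T + D
    # filtering
    S = H @ P @ H.T + R_list[k]
    W = P @ H.T @ np.linalg.inv(S)
    x = x + W @ (z[k] - H @ x)
    P = P - W @ S @ W.T
    est.append(x[:2].copy())
est = np.array(est)

def rmse(a, b):
    """Root-mean-square Euclidean position error between two tracks."""
    return np.sqrt(np.mean(np.sum((a - b) ** 2, axis=1)))

print("measurement RMSE:", rmse(z, truth))
print("estimate RMSE:   ", rmse(est, truth))
```

The arrays `truth`, `z` and `est` all have shape `(N, 2)`, so they can be drawn into one x-y plot with any plotting library to compare ground truth, measurements, and estimates.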

Sensor Data Fusion - Methods and Applications, 4th Lecture on November 14, 2018 — slide 1