Provably Robust Boosted Decision Stumps and Trees against Adversarial Attacks

Maksym Andriushchenko (EPFL∗) Matthias Hein (University of T¨ ubingen)

∗Work done at the University of T¨

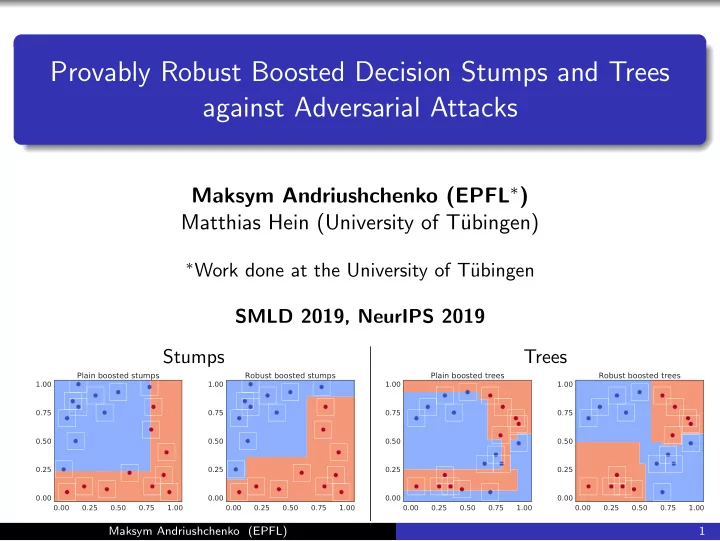

ubingen SMLD 2019, NeurIPS 2019 Stumps Trees

0.00 0.25 0.50 0.75 1.00 0.00 0.25 0.50 0.75 1.00 Plain boosted stumps 0.00 0.25 0.50 0.75 1.00 0.00 0.25 0.50 0.75 1.00 Robust boosted stumps 0.00 0.25 0.50 0.75 1.00 0.00 0.25 0.50 0.75 1.00 Plain boosted trees 0.00 0.25 0.50 0.75 1.00 0.00 0.25 0.50 0.75 1.00 Robust boosted trees

Maksym Andriushchenko (EPFL) 1