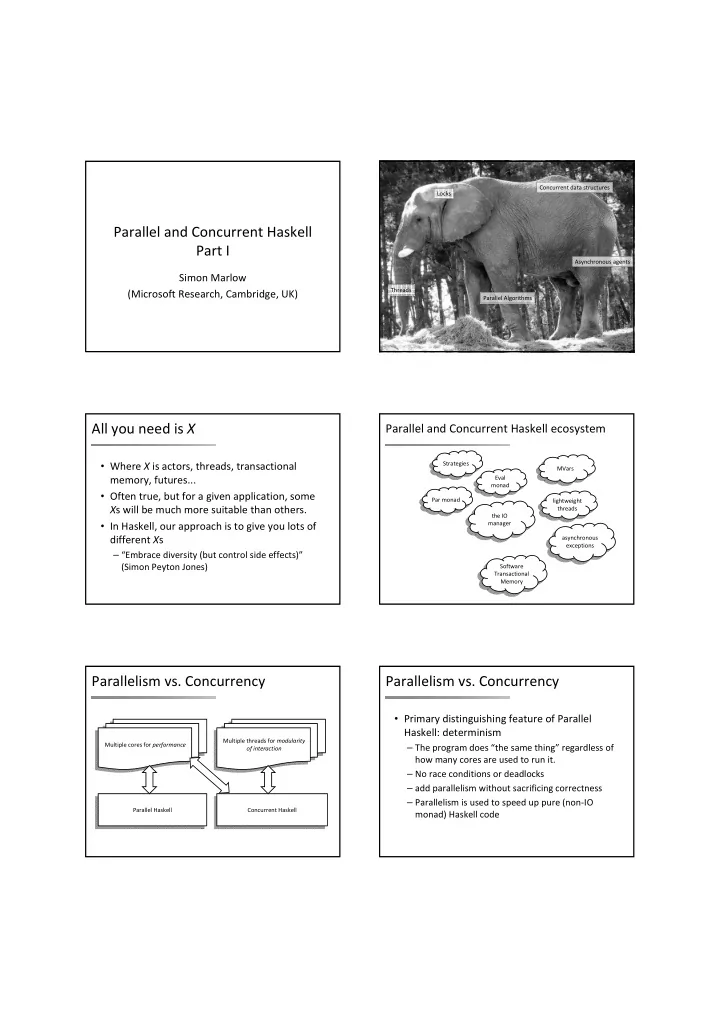

SLIDE 2 Parallelism vs. Concurrency

- Primary distinguishing feature of Concurrent Haskell: threads of control

– Concurrent programming is done in the IO monad

- because threads have effects

- effects from multiple threads are interleaved nondeterministically at runtime.

– Concurrent programming allows programs that interact with multiple external agents to be modular

- the interaction with each agent is programmed separately

- Allows programs to be structured as a collection of interacting agents (actors)
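The points above can be illustrated with a minimal sketch using only `Control.Concurrent` from `base`. The `agent` and `runAgents` names are hypothetical and not part of the course code. Two threads append to a shared log; each agent's own entries stay in order, but how the two agents' entries interleave may differ from run to run.

```haskell
import Control.Concurrent (forkIO)
import Control.Concurrent.MVar
import Control.Monad (forM_)

-- Hypothetical agent: appends three entries to a shared log,
-- then signals completion on its own MVar.
agent :: MVar [String] -> MVar () -> String -> IO ()
agent logVar done name = do
  forM_ [1 :: Int .. 3] $ \i ->
    modifyMVar_ logVar (return . ((name ++ " step " ++ show i) :))
  putMVar done ()

-- Run two agents concurrently and return the log in arrival order.
runAgents :: IO [String]
runAgents = do
  logVar <- newMVar []
  doneA  <- newEmptyMVar
  doneB  <- newEmptyMVar
  _ <- forkIO (agent logVar doneA "A")
  _ <- forkIO (agent logVar doneB "B")
  -- Block until both threads have finished.
  takeMVar doneA
  takeMVar doneB
  reverse <$> readMVar logVar

main :: IO ()
main = runAgents >>= mapM_ putStrLn
```

Each agent is written separately and knows nothing about the other, which is the modularity the slide refers to. Everything lives in `IO` because forking a thread and updating an `MVar` are effects.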

I. Parallel Haskell

- In this part of the course, you will learn how to:

– Do basic parallelism:

- compile and run a Haskell program, and measure its performance

- parallelise a simple Haskell program (a Sudoku solver)

- use ThreadScope to profile parallel execution

- do dynamic rather than static partitioning

- measure parallel speedup

- use Amdahl’s law to calculate the possible speedup

– Work with Evaluation Strategies

- build simple Strategies

- parallelise a data‐mining problem: K‐Means

– Work with the Par Monad

- Use the Par monad for expressing dataflow parallelism

- Parallelise a type‐inference engine
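As a small illustration of the Amdahl's law item in the outline above: if a fraction p of the run time can be parallelised and the rest is sequential, the speedup on n cores is at most 1 / ((1 - p) + p / n). The sketch below tabulates this for an assumed p = 0.9, which is an illustrative value and not a measurement from the course.

```haskell
-- Amdahl's law: p is the fraction of the run time that can be
-- parallelised (0 <= p <= 1), n is the number of cores.
amdahl :: Double -> Int -> Double
amdahl p n = 1 / ((1 - p) + p / fromIntegral n)

main :: IO ()
main = do
  -- p = 0.9 is an assumed, illustrative value.
  let p = 0.9
  mapM_ (\n -> putStrLn (show n ++ " cores: " ++ show (amdahl p n)))
        [1, 2, 4, 8, 64]
```

However many cores are added, the speedup stays below 1 / (1 - p), which is 10 for p = 0.9. This is why the sequential part of a program matters so much when measuring parallel speedup.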

Running example: solving Sudoku

– code from the Haskell wiki (brute force search with some intelligent pruning)

– can solve all 49,000 problems in 2 mins

– input: a line of text representing a problem

    import Sudoku

    solve :: String -> Maybe Grid

    .......2143.......6........2.15..........637...........68...4.....23........7....
    .......241..8.............3...4..5..7.....1......3.......51.6....2....5..3...7...
    .......24....1...........8.3.7...1..1..8..5.....2......2.4...6.5...7.3...........
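To make the input format concrete: each line holds 81 characters, read row by row, with `.` for an empty cell and a digit for a given. The sketch below parses one such line into a 9x9 board. The `Cell`, `Board`, and `parseBoard` names are hypothetical; the slides do not show the `Sudoku` module's own `Grid` type, which may be represented quite differently.

```haskell
import Data.Char (digitToInt, intToDigit, isDigit)

-- Hypothetical types for illustration only; not the Sudoku module's Grid.
type Cell  = Maybe Int
type Board = [[Cell]]

-- Parse one input line: 81 characters, '.' for an empty cell and
-- '1'..'9' for a given digit. Anything else is rejected.
parseBoard :: String -> Maybe Board
parseBoard s
  | length s /= 81 = Nothing
  | otherwise      = rows <$> mapM cell s
  where
    cell '.' = Just Nothing
    cell c
      | isDigit c && c /= '0' = Just (Just (digitToInt c))
      | otherwise             = Nothing
    -- Split the flat list of cells into rows of nine.
    rows [] = []
    rows xs = let (r, rest) = splitAt 9 xs in r : rows rest

main :: IO ()
main = do
  let line = ".......2143.......6........2.15..........637...........68...4.....23........7...."
  case parseBoard line of
    Nothing    -> putStrLn "not a valid puzzle line"
    Just board -> mapM_ (putStrLn . map (maybe '.' intToDigit)) board
```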

Solving Sudoku problems

– divide the file into lines

– call the solver for each line

    import Sudoku
    import Control.Exception
    import System.Environment

    main :: IO ()
    main = do
        [f] <- getArgs
        grids <- fmap lines $ readFile f
        mapM_ (evaluate . solve) grids

    evaluate :: a -> IO a
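Why `evaluate` is needed here: Haskell is lazy, so merely mentioning `solve line` inside `IO` would not run the solver. `evaluate` forces its argument to weak head normal form when the action is executed. The sketch below, with hypothetical names `bad` and `raises`, makes the difference observable by using a value whose evaluation throws.

```haskell
import Control.Exception (ArithException, evaluate, try)

-- A value whose evaluation fails, so we can observe when it happens.
bad :: Int
bad = 1 `div` 0

-- Report whether running the action raised an arithmetic exception.
-- The result itself is never inspected, so only the action can trigger it.
raises :: IO Int -> IO Bool
raises act = do
  r <- try act :: IO (Either ArithException Int)
  return (either (const True) (const False) r)

main :: IO ()
main = do
  lazy   <- raises (return bad)    -- return does not evaluate its argument
  forced <- raises (evaluate bad)  -- evaluate forces it to WHNF
  putStrLn ("return raised:   " ++ show lazy)
  putStrLn ("evaluate raised: " ++ show forced)
```

The first line reports `False` and the second `True`. Note that weak head normal form only exposes the outermost constructor; for a `Maybe Grid` that means deciding between `Nothing` and `Just`, not necessarily evaluating the whole grid.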

Compile the program...

    $ ghc -O2 sudoku1.hs -rtsopts
    [1 of 2] Compiling Sudoku           ( Sudoku.hs, Sudoku.o )
    [2 of 2] Compiling Main             ( sudoku1.hs, sudoku1.o )
    Linking sudoku1 ...
    $

Run the program...

    $ ./sudoku1 sudoku17.1000.txt +RTS -s
       2,392,127,440 bytes allocated in the heap
          36,829,592 bytes copied during GC
             191,168 bytes maximum residency (11 sample(s))
              82,256 bytes maximum slop
                   2 MB total memory in use (… MB lost due to fragmentation)

      Generation 0:  4570 collections,     0 parallel,  0.14s,  0.13s elapsed
      Generation 1:    11 collections,     0 parallel,  0.00s,  0.00s elapsed
    ...
      INIT  time    0.00s  (  0.00s elapsed)
      MUT   time    2.92s  (  2.92s elapsed)
      GC    time    0.14s  (  0.14s elapsed)
      EXIT  time    0.00s  (  0.00s elapsed)
      Total time    3.06s  (  3.06s elapsed)
    ...
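Reading the timings: the Total line is the sum of the INIT, MUT, GC and EXIT lines (0.00 + 2.92 + 0.14 + 0.00 = 3.06s). MUT is the time spent running the program itself (the "mutator") and GC is the time spent in the garbage collector. A small sketch of the breakdown, with the figures copied from the output above and a hypothetical helper `percentOf`:

```haskell
-- Times in seconds, copied from the +RTS -s output above.
mutTime, gcTime, totalTime :: Double
mutTime   = 2.92
gcTime    = 0.14
totalTime = 3.06

-- Share of the whole, as a percentage rounded to one decimal place.
percentOf :: Double -> Double -> Double
percentOf part whole = fromIntegral (round (1000 * part / whole) :: Int) / 10

main :: IO ()
main = do
  putStrLn ("mutator: " ++ show (percentOf mutTime totalTime) ++ "%")
  putStrLn ("GC:      " ++ show (percentOf gcTime totalTime) ++ "%")
```

For this run the mutator accounts for about 95.4% of the total and the garbage collector for about 4.6%, so almost all of the time goes into solving puzzles.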