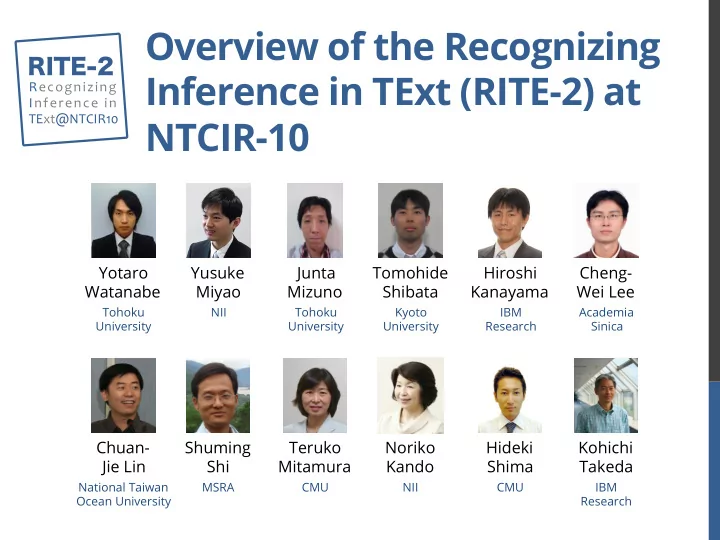

Overview of the Recognizing Inference in TExt (RITE-2) at NTCIR-10

Yotaro Watanabe Yusuke Miyao Junta Mizuno Tomohide Shibata Cheng-Wei Lee Chuan-Jie Lin Teruko Mitamura

Tohoku University NII Kyoto University CMU Academia Sinica National Taiwan Ocean University

Hideki Shima Hiroshi Kanayama Kohichi Takeda

IBM Research

Shuming Shi

MSRA

RITE-2

Recognizing Inference in TExt@NTCIR10

Tohoku University IBM Research CMU

Noriko Kando

NII