Density Estimation Classification Regression

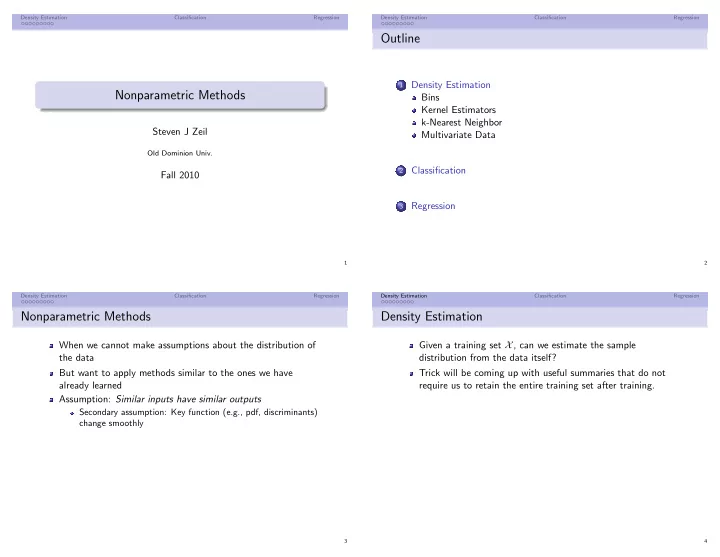

Nonparametric Methods

Steven J Zeil

Old Dominion Univ.

Fall 2010


Outline

1. Density Estimation
   - Bins
   - Kernel Estimators
   - k-Nearest Neighbor
   - Multivariate Data
2. Classification
3. Regression


Nonparametric Methods

- Used when we cannot make assumptions about the distribution of the data,
- but still want to apply methods similar to the ones we have already learned.
- Assumption: similar inputs have similar outputs.
- Secondary assumption: key functions (e.g., pdfs, discriminants) change smoothly.


Density Estimation

- Given a training set X, can we estimate the distribution the sample was drawn from using the data alone?
- The trick is to come up with useful summaries that do not require us to retain the entire training set after training.
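As one illustration of such a summary, the sketch below uses the bin (histogram) approach named in the outline: after training, only the bin counts and the sample size are kept, and the training points can be discarded. This is a minimal sketch for one-dimensional data, not a definitive implementation. The function names `fit_histogram` and `histogram_density` and the parameters `origin`, `width`, and `n_bins` are assumptions made for this example.

```python
import numpy as np

def fit_histogram(x, origin, width, n_bins):
    """Summarize a 1-D sample as bin counts (hypothetical helper).

    Bin b covers [origin + b*width, origin + (b+1)*width).
    Returns the counts and the sample size N; the sample itself
    is not needed afterwards.
    """
    x = np.asarray(x, dtype=float)
    idx = np.floor((x - origin) / width).astype(int)
    idx = idx[(idx >= 0) & (idx < n_bins)]  # ignore points outside the covered range
    counts = np.bincount(idx, minlength=n_bins)
    return counts, len(x)

def histogram_density(x0, counts, n, origin, width):
    """Estimate p(x0) as (# training points in x0's bin) / (N * bin width)."""
    b = int(np.floor((x0 - origin) / width))
    if b < 0 or b >= len(counts):
        return 0.0  # no bin covers x0
    return counts[b] / (n * width)

# Usage: four training points, two bins of width 1 starting at 0.
counts, n = fit_histogram([0.1, 0.2, 0.7, 1.5], origin=0.0, width=1.0, n_bins=2)
print(histogram_density(0.5, counts, n, origin=0.0, width=1.0))
```

The estimate depends on the assumed choice of `origin` and `width`. A smaller width follows the data more closely but gives a noisier estimate.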
