DM63 HEURISTICS FOR COMBINATORIAL OPTIMIZATION

Lecture 14

Other Metaheuristics

Marco Chiarandini

Outline

- 1. Implementation Contest

- 2. Other Metaheuristics
  - Evolutionary Algorithm Extensions
  - Model Based Metaheuristics

- 3. Particle swarm optimization (PSO)

- 4. Resume
  - Capacitated Vehicle Routing

DM63 – Heuristics for Combinatorial Optimization Problems 2

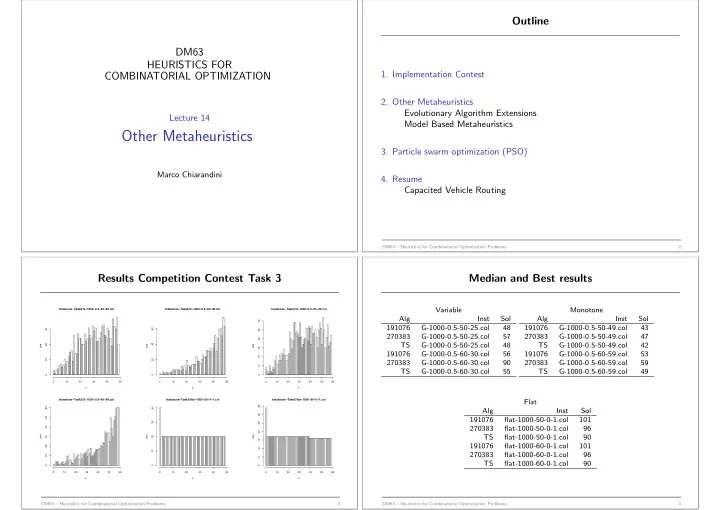

Contest Results: Task 3

[Figure: one panel per instance, axes labeled "k" and "size". Panels: Instances-Task3/G-1000-0.5-50-25.col, Instances-Task3/G-1000-0.5-50-49.col, Instances-Task3/G-1000-0.5-60-30.col, Instances-Task3/G-1000-0.5-60-59.col, Instances-Task3/flat-1000-50-0-1.col, Instances-Task3/flat-1000-60-0-1.col]


Median and Best results

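| Class | Alg | Inst | Sol |
|---|---|---|---|
| Variable | 191076 | G-1000-0.5-50-25.col | 48 |
| Variable | 270383 | G-1000-0.5-50-25.col | 57 |
| Variable | TS | G-1000-0.5-50-25.col | 48 |
| Variable | 191076 | G-1000-0.5-60-30.col | 56 |
| Variable | 270383 | G-1000-0.5-60-30.col | 90 |
| Variable | TS | G-1000-0.5-60-30.col | 55 |
| Monotone | 191076 | G-1000-0.5-50-49.col | 43 |
| Monotone | 270383 | G-1000-0.5-50-49.col | 47 |
| Monotone | TS | G-1000-0.5-50-49.col | 42 |
| Monotone | 191076 | G-1000-0.5-60-59.col | 53 |
| Monotone | 270383 | G-1000-0.5-60-59.col | 59 |
| Monotone | TS | G-1000-0.5-60-59.col | 49 |
| Flat | 191076 | flat-1000-50-0-1.col | 101 |
| Flat | 270383 | flat-1000-50-0-1.col | 96 |
| Flat | TS | flat-1000-50-0-1.col | 90 |
| Flat | 191076 | flat-1000-60-0-1.col | 101 |
| Flat | 270383 | flat-1000-60-0-1.col | 96 |
| Flat | TS | flat-1000-60-0-1.col | 90 |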
