SLIDE 9 343

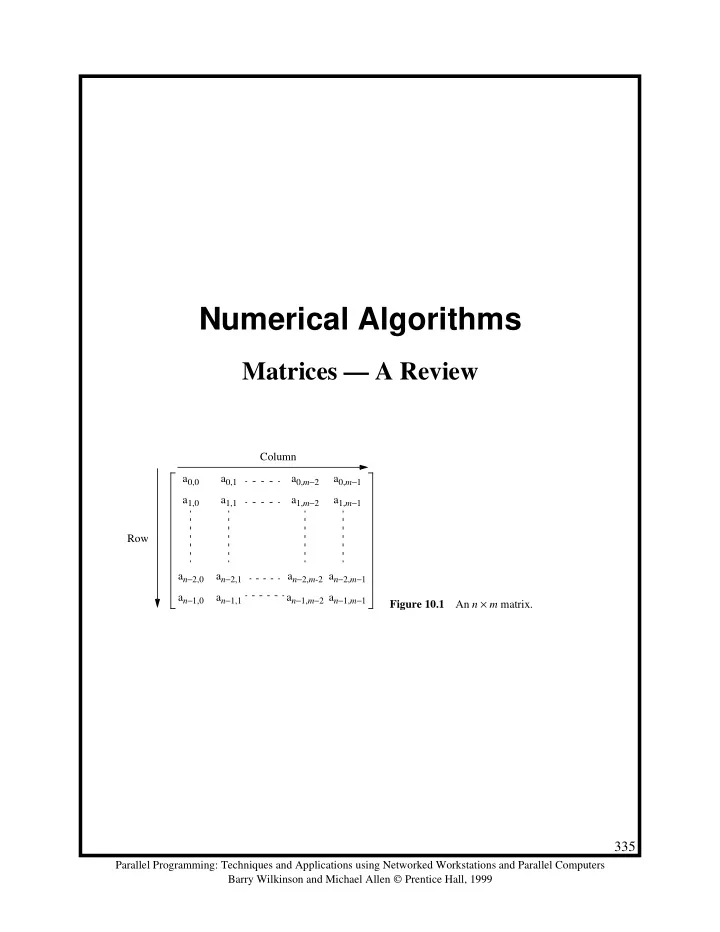

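Parallel Programming: Techniques and Applications using Networked Workstations and Parallel Computers, Barry Wilkinson and Michael Allen, Prentice Hall, 1999

(a) Matrices

$$
A = \left[\begin{array}{cc|cc}
a_{0,0} & a_{0,1} & a_{0,2} & a_{0,3} \\
a_{1,0} & a_{1,1} & a_{1,2} & a_{1,3} \\ \hline
a_{2,0} & a_{2,1} & a_{2,2} & a_{2,3} \\
a_{3,0} & a_{3,1} & a_{3,2} & a_{3,3}
\end{array}\right]
\qquad
B = \left[\begin{array}{cc|cc}
b_{0,0} & b_{0,1} & b_{0,2} & b_{0,3} \\
b_{1,0} & b_{1,1} & b_{1,2} & b_{1,3} \\ \hline
b_{2,0} & b_{2,1} & b_{2,2} & b_{2,3} \\
b_{3,0} & b_{3,1} & b_{3,2} & b_{3,3}
\end{array}\right]
$$

(b) Multiplying $A_{0,0} \times B_{0,0}$ to obtain $C_{0,0}$

$$
A_{0,0} \times B_{0,0} =
\begin{bmatrix} a_{0,0} & a_{0,1} \\ a_{1,0} & a_{1,1} \end{bmatrix}
\times
\begin{bmatrix} b_{0,0} & b_{0,1} \\ b_{1,0} & b_{1,1} \end{bmatrix}
=
\begin{bmatrix}
a_{0,0}b_{0,0} + a_{0,1}b_{1,0} & a_{0,0}b_{0,1} + a_{0,1}b_{1,1} \\
a_{1,0}b_{0,0} + a_{1,1}b_{1,0} & a_{1,0}b_{0,1} + a_{1,1}b_{1,1}
\end{bmatrix}
$$

$$
A_{0,1} \times B_{1,0} =
\begin{bmatrix} a_{0,2} & a_{0,3} \\ a_{1,2} & a_{1,3} \end{bmatrix}
\times
\begin{bmatrix} b_{2,0} & b_{2,1} \\ b_{3,0} & b_{3,1} \end{bmatrix}
=
\begin{bmatrix}
a_{0,2}b_{2,0} + a_{0,3}b_{3,0} & a_{0,2}b_{2,1} + a_{0,3}b_{3,1} \\
a_{1,2}b_{2,0} + a_{1,3}b_{3,0} & a_{1,2}b_{2,1} + a_{1,3}b_{3,1}
\end{bmatrix}
$$

$$
C_{0,0} = A_{0,0} \times B_{0,0} + A_{0,1} \times B_{1,0} =
\begin{bmatrix}
a_{0,0}b_{0,0} + a_{0,1}b_{1,0} + a_{0,2}b_{2,0} + a_{0,3}b_{3,0} & a_{0,0}b_{0,1} + a_{0,1}b_{1,1} + a_{0,2}b_{2,1} + a_{0,3}b_{3,1} \\
a_{1,0}b_{0,0} + a_{1,1}b_{1,0} + a_{1,2}b_{2,0} + a_{1,3}b_{3,0} & a_{1,0}b_{0,1} + a_{1,1}b_{1,1} + a_{1,2}b_{2,1} + a_{1,3}b_{3,1}
\end{bmatrix}
$$

Figure 10.5 Submatrix multiplication.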
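The submatrix product in Figure 10.5 can be checked numerically. The following is a minimal Python sketch, not code from the book: the helper names (`matmul`, `matadd`, `block`, `block_matmul`) and the sample values in `A` and `B` are assumptions chosen for illustration. It splits two 4 × 4 matrices into 2 × 2 submatrices and forms each block of C as a sum of submatrix products, so that C0,0 = A0,0 × B0,0 + A0,1 × B1,0.

```python
def matmul(x, y):
    """Plain triple-loop product of two matrices stored as lists of rows."""
    n, m, p = len(x), len(y), len(y[0])
    return [[sum(x[i][k] * y[k][j] for k in range(m)) for j in range(p)]
            for i in range(n)]


def matadd(x, y):
    """Element-wise sum of two equally sized matrices."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(x, y)]


def block(mat, p, q, s):
    """Return the s x s submatrix at block row p, block column q."""
    return [row[q * s:(q + 1) * s] for row in mat[p * s:(p + 1) * s]]


def block_matmul(a, b, s):
    """Multiply square matrices a and b using s x s submatrices.

    Assumes the matrix dimension is a multiple of s.
    """
    n = len(a)
    nb = n // s  # number of blocks per row/column
    c = [[0] * n for _ in range(n)]
    for p in range(nb):
        for q in range(nb):
            # C[p][q] = sum over r of A[p][r] x B[r][q]
            acc = [[0] * s for _ in range(s)]
            for r in range(nb):
                acc = matadd(acc, matmul(block(a, p, r, s), block(b, r, q, s)))
            # Copy the accumulated block into its place in c.
            for i in range(s):
                c[p * s + i][q * s:(q + 1) * s] = acc[i]
    return c


# Illustrative sample data (hypothetical values, not from the slide).
A = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
B = [[1, 0, 2, 0], [0, 1, 0, 2], [3, 0, 1, 0], [0, 3, 0, 1]]

C = block_matmul(A, B, 2)

# C0,0 computed exactly as in the figure: A0,0 x B0,0 + A0,1 x B1,0.
C00 = matadd(matmul(block(A, 0, 0, 2), block(B, 0, 0, 2)),
             matmul(block(A, 0, 1, 2), block(B, 1, 0, 2)))
```

Comparing `C` against `matmul(A, B)` confirms that the block formulation yields the same result as the ordinary product. In a parallel setting each (p, q) block could be assigned to a separate process, since the blocks of C are computed independently of one another.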